📊 Experiments & Results

Evaluation Setup

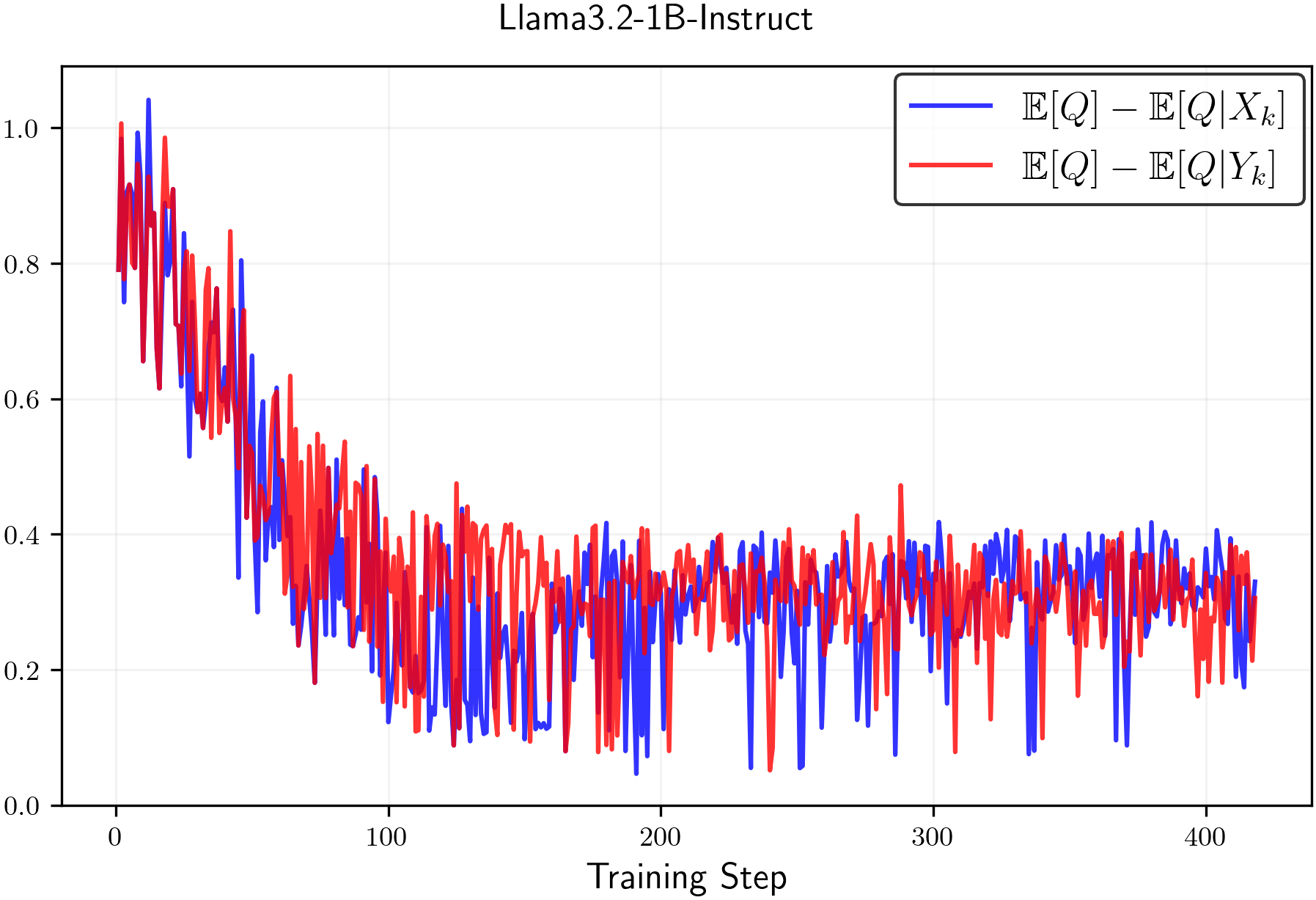

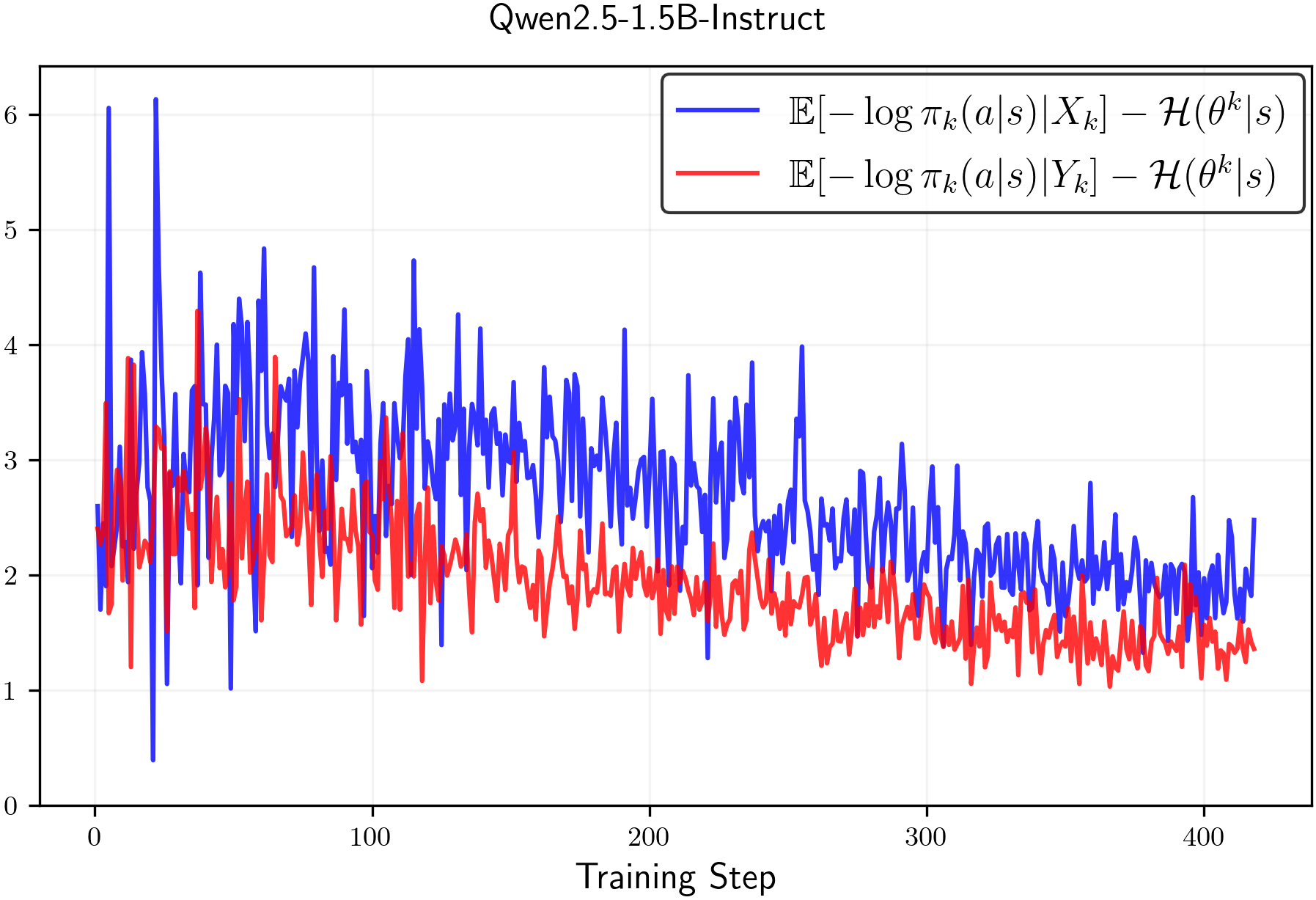

Analysis of policy entropy dynamics under RL training with random rewards

Benchmarks:

- GSM8K (Prompts only) (Mathematical Reasoning)

Metrics:

- Policy Entropy (Token-level Shannon entropy)

- Statistical methodology: Not explicitly reported in the paper

Experiment Figures

Entropy dynamics during training as clipping hyperparameters epsilon-low and epsilon-high are varied.

Policy entropy trends for Qwen, Llama, and Olmo models trained with random rewards.

Main Takeaways

- Clipping is not entropy-neutral: Standard symmetric clipping (epsilon=0.2) inherently reduces entropy because the 'clip-high' effect dominates the 'clip-low' effect.

- Lowering epsilon-low (tightening the lower clip) increases policy entropy, while lowering epsilon-high (tightening the upper clip) decreases it.

- Training with purely random rewards leads to entropy reduction across all tested model families (Qwen, Llama, Olmo), indicating that RLVR algorithms function as entropy minimizers in the absence of signal.

- Entropy collapse can be prevented mechanistically by tuning clipping thresholds (e.g., using asymmetric clipping) without needing auxiliary loss functions.