📝 Paper Summary

Online RLHF

Reinforcement Learning Theory

Sample Efficiency

SE-POPO is an online RLHF algorithm that achieves sample complexity polynomial in the reward scale by using preference-based exploration and iteratively updated samplers, overcoming the exponential scaling of prior reward-based methods.

Core Problem

Existing online RLHF algorithms rely on reward-based exploration, where sample complexity scales exponentially with the reward range (exp(R_max)) due to the sigmoid function in the Bradley-Terry preference model.

Why it matters:

- In scenarios with heavily skewed preferences (e.g., objectively correct answers where one response strictly dominates), the reward gap is large, causing the Bradley-Terry probability to saturate.

- When probabilities saturate, exponentially many samples are required to distinguish response quality using standard reward-based uncertainty, making alignment statistically inefficient.

- Prior works conjectured that this exponential dependency was unavoidable, limiting the theoretical applicability of RLHF to small reward ranges.

Concrete Example:

If an optimal response y* is significantly better than a reference response y' (large reward gap), the probability P(y* > y') approaches 1. A standard algorithm comparing these will see 'flat' gradients through the sigmoid function, requiring huge amounts of data to estimate the reward difference accurately.

Key Novelty

Self-Exploring Preference-Incentive Online Preference Optimization (SE-POPO)

- Replaces reward-based exploration (estimating absolute reward values) with preference-based exploration (estimating the probability one response is preferred to another), which avoids the gradient explosion caused by the sigmoid function.

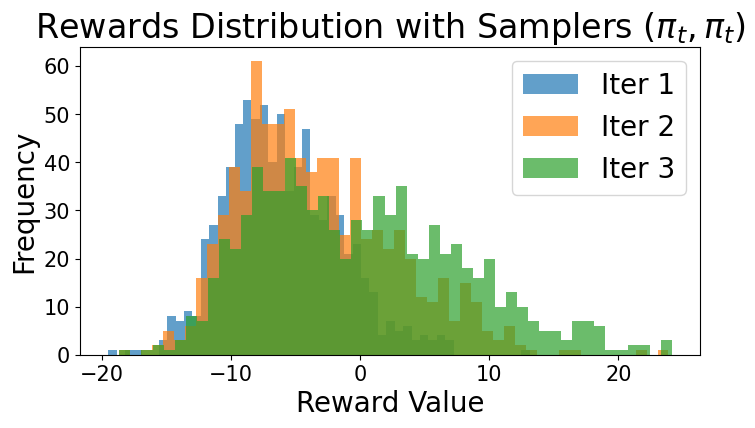

- Uses a 'self-updated sampler' mechanism: instead of comparing against a fixed reference policy, the algorithm iteratively updates the comparison policy in stages. This keeps the preference gap manageable, ensuring the algorithm always gains informative feedback.

Architecture

Pseudocode for the SE-POPO algorithm and its subroutine POPO.

Evaluation Highlights

- Achieves a theoretical sample complexity scaling of Õ(R_max^8), which is polynomial in the reward range, compared to the O(exp(R_max)) scaling of all prior online RLHF algorithms.

- Demonstrates that the preference-based regret of the POPO subroutine scales as Õ(√dT), effectively solving the exploration problem against a fixed comparator.

Breakthrough Assessment

9/10

Theoretically resolves an open problem raised by Xie et al. (2024) regarding the exponential dependence on reward scale in RLHF. Being the first to prove polynomial scaling is a significant theoretical advance.