📝 Paper Summary

Multi-step reasoning

Tree Search methods for LLMs

Efficiency in LLM reasoning

SEAG improves reasoning efficiency by triggering tree search only for low-confidence problems and by merging semantically identical reasoning paths during exploration to avoid redundant computation.

Core Problem

Existing tree-search reasoning methods (like Tree of Thoughts) are computationally inefficient because they apply expensive search indiscriminately to easy problems and explore multiple paths that are semantically identical but phrased differently.

Why it matters:

- Standard tree search is too costly for widespread deployment, often requiring 100x more inference calls than simple prompting

- Current methods waste compute exploring nominally different branches that express the same thought in different words, which adds no diversity

- Rigid search budgets fail to allocate resources where needed: wasting effort on easy queries while potentially under-exploring hard ones

Concrete Example:

In a math problem, an LLM might generate 'How many pages did Julie read?' and 'What is the number of pages Julie finished reading?'. Standard methods treat these as two distinct nodes to expand, doubling the subsequent workload. SEAG detects they entail each other and merges them into a single cluster.
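The merge in this example can be sketched as a greedy grouping over sibling questions. This is a minimal illustration, not the authors' implementation: `cluster_by_entailment`, `toy_entails`, and the `CANONICAL` lookup are hypothetical names, the third question is invented for contrast, and the lookup table merely stands in for a real NLI model's entailment decision.

```python
from typing import Callable, Dict, List


def cluster_by_entailment(
    texts: List[str],
    entails: Callable[[str, str], bool],
) -> List[List[str]]:
    """Greedily group texts that entail each other in both directions."""
    clusters: List[List[str]] = []
    for text in texts:
        for cluster in clusters:
            representative = cluster[0]  # compare against the first member only
            if entails(representative, text) and entails(text, representative):
                cluster.append(text)
                break
        else:
            # No existing cluster matched, so this text starts a new one.
            clusters.append([text])
    return clusters


# Assumption: a hand-written lookup replaces the NLI model for this toy example.
CANONICAL: Dict[str, str] = {
    "How many pages did Julie read?": "pages_read",
    "What is the number of pages Julie finished reading?": "pages_read",
    "How many pages are left in the book?": "pages_left",  # invented contrast case
}


def toy_entails(premise: str, hypothesis: str) -> bool:
    """Stand-in for an NLI entailment check (hypothetical)."""
    return CANONICAL[premise] == CANONICAL[hypothesis]


questions = list(CANONICAL.keys())
clusters = cluster_by_entailment(questions, toy_entails)
```

Here the two paraphrased questions about pages read fall into one cluster, so only one sub-tree would be expanded for them, while the third question keeps its own cluster.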

Key Novelty

Semantic Exploration with Adaptive Gating (SEAG)

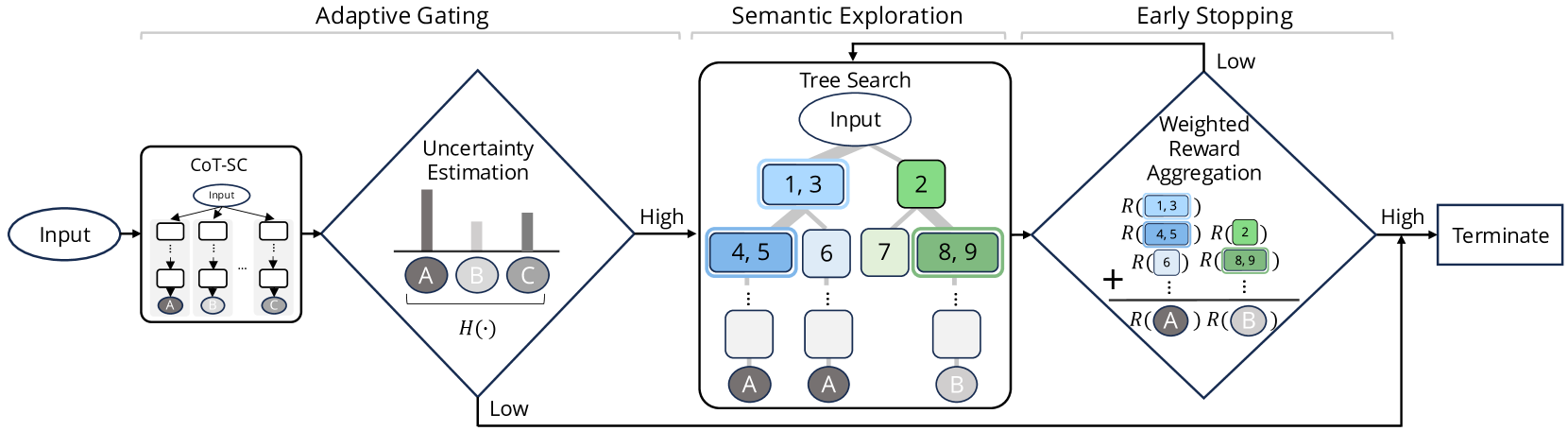

- Adaptive Gating: Uses the entropy of initial Chain-of-Thought (CoT) answers to decide whether to stick with a cheap answer or escalate to expensive tree search

- Semantic Clustering: Within the tree search, groups sibling nodes that entail each other in both directions (checked with a small NLI model) to prevent redundant sub-tree expansion

- Semantic PUCT: Modifies the Monte Carlo Tree Search selection formula to prioritize clusters with higher aggregate probability mass (self-consistency principle) rather than just individual node probabilities
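The gating step in the first bullet can be sketched as follows. This is a hedged illustration under stated assumptions: the function names, the use of Shannon entropy over exact-match answer strings, the majority-vote fallback, and the `threshold` value are all illustrative choices, not details confirmed by the summary.

```python
import math
from collections import Counter
from typing import List, Optional, Tuple


def answer_entropy(answers: List[str]) -> float:
    """Shannon entropy (in nats) of the empirical answer distribution."""
    counts = Counter(answers)
    total = len(answers)
    return -sum((c / total) * math.log(c / total) for c in counts.values())


def gate(answers: List[str], threshold: float) -> Tuple[bool, Optional[str]]:
    """Return (escalate_to_tree_search, cheap_answer_or_None).

    Assumption: low entropy means the sampled CoT answers agree, so the
    majority answer is accepted; otherwise the problem is escalated.
    """
    if answer_entropy(answers) <= threshold:
        majority, _ = Counter(answers).most_common(1)[0]
        return False, majority
    return True, None


# Hypothetical samples: unanimous answers vs. scattered answers.
easy = gate(["18", "18", "18", "18"], threshold=0.5)
hard = gate(["18", "20", "18", "24"], threshold=0.5)
```

With these inputs, the unanimous samples keep the cheap answer and the scattered samples are flagged for tree search. The threshold trades accuracy against cost and would need tuning per model and benchmark.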

Architecture

The SEAG workflow consists of Adaptive Gating followed by Semantic Exploration (MCTS with clustering).
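Within the Semantic Exploration stage, selection operates over clusters rather than individual nodes. The sketch below applies the standard PUCT form with the prior replaced by the summed probability of a cluster's members. The exact formula used in the paper is not given in this summary, so the `Cluster` fields, `puct_score`, `select`, and the constant `c_puct` are assumptions for illustration only.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Cluster:
    member_probs: List[float]  # LLM probabilities of the merged sibling nodes
    value: float = 0.0         # mean value estimate (Q)
    visits: int = 0            # visit count (N)


def puct_score(cluster: Cluster, parent_visits: int, c_puct: float = 1.0) -> float:
    """Standard PUCT with the prior taken as the cluster's total probability mass."""
    prior = sum(cluster.member_probs)
    exploration = c_puct * prior * math.sqrt(parent_visits) / (1 + cluster.visits)
    return cluster.value + exploration


def select(clusters: List[Cluster], parent_visits: int, c_puct: float = 1.0) -> int:
    """Return the index of the cluster with the highest PUCT score."""
    return max(
        range(len(clusters)),
        key=lambda i: puct_score(clusters[i], parent_visits, c_puct),
    )


# Two paraphrases at 0.3 each outweigh a single node at 0.4 once merged.
merged = Cluster(member_probs=[0.3, 0.3])
single = Cluster(member_probs=[0.4])
chosen = select([merged, single], parent_visits=1)
```

In this toy case the merged cluster is selected even though no single member has the highest individual probability, which is the self-consistency intuition behind aggregating probability mass.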

Evaluation Highlights

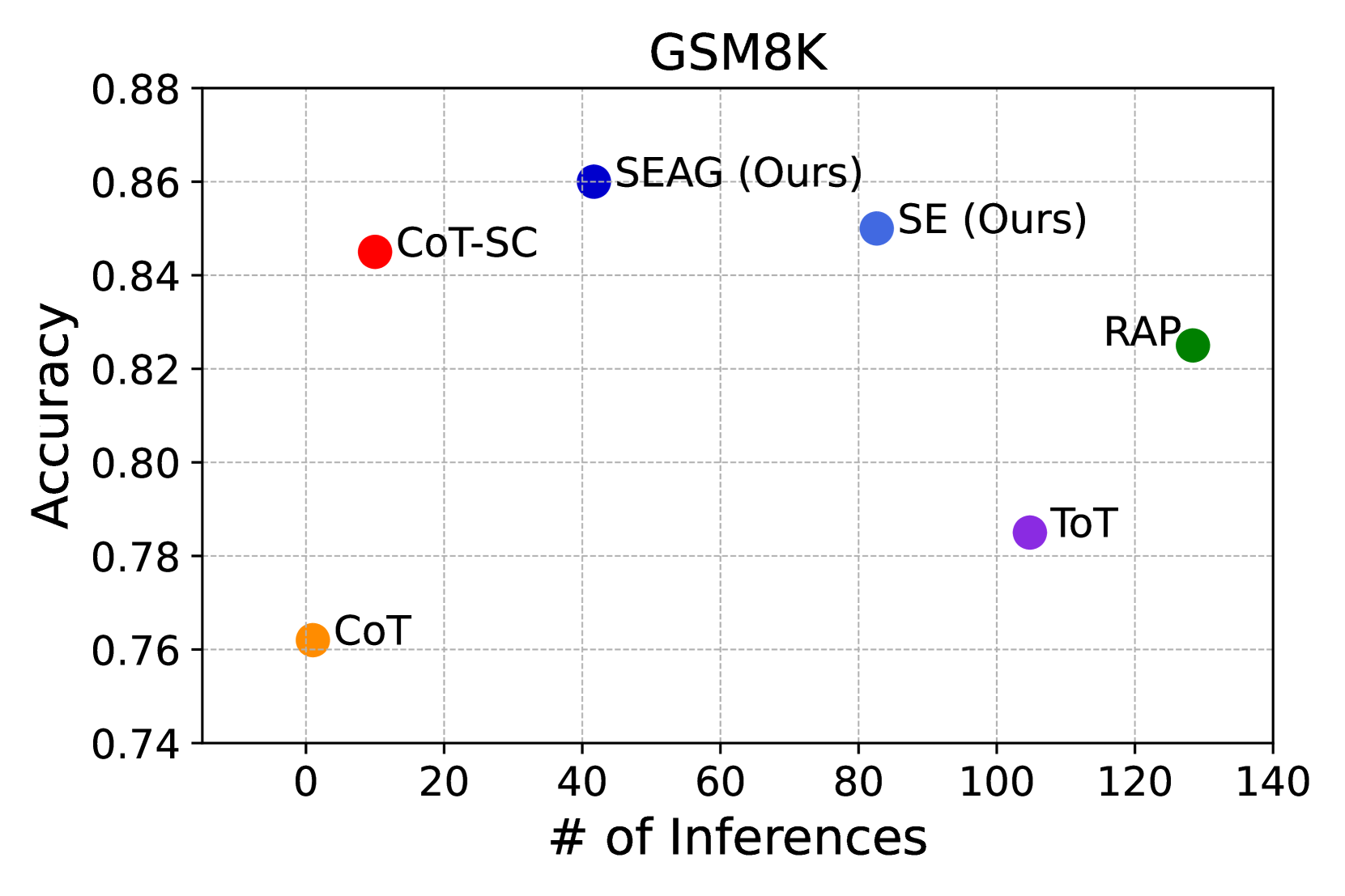

- Achieves 86.0% accuracy on GSM8K using Llama3-8B-Instruct, surpassing standard CoT-SC (84.5%) and Tree of Thoughts (78.5%)

- Reduces computational cost significantly: requires only ~41 inferences per problem on GSM8K, compared with ~104 for Tree of Thoughts, while achieving higher accuracy

- Outperforms RAP (Reasoning-via-Planning) by +4.8% accuracy on average across benchmarks while using only 31% of the computational cost

Breakthrough Assessment

7/10

Strong engineering contribution that improves the practicality of tree-search reasoning. While the core components (clustering, gating) exist individually in the literature, their unification into a coherent, efficient MCTS framework is valuable and effective.