📝 Paper Summary

Knowledge Distillation

Chain-of-Thought (CoT) Reasoning

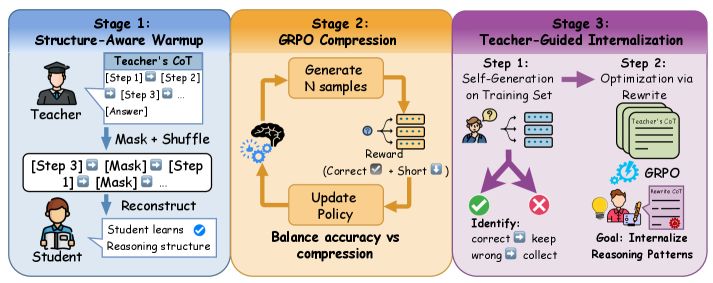

BRIDGE distills verbose reasoning from large teachers into compact students by establishing structural understanding via reconstruction, then optimizing brevity via reinforcement learning on masked tasks.

Core Problem

Distilling verbose Chain-of-Thought reasoning into small models fails because small models lack the capacity to memorize long sequences, leading to truncated or incoherent outputs.

Why it matters:

- Deploying powerful reasoning capabilities on edge devices requires compact models (e.g., 3B parameters) that remain explicit and verifiable

- Because of the capacity mismatch, directly fine-tuning small models on teacher outputs produces repetition loops or superficial mimicry without genuine understanding

- Existing compression methods either sacrifice interpretability (implicit reasoning) or logical integrity (heuristic pruning)

Concrete Example:

When a 3B model tries to learn from a 14B teacher's lengthy solution, it often produces truncated outputs or repetition loops because it cannot sustain the long-range dependencies that the full chain requires.

Key Novelty

BRIDGE: Structure-Aware Curriculum for CoT Compression

- Teaches structural logic first by forcing the student to reconstruct shuffled and masked teacher reasoning chains, ensuring it learns dependencies rather than just copying

- Optimizes the accuracy-brevity trade-off using Group Relative Policy Optimization (GRPO) with a hierarchical reward that prioritizes correctness before efficiency

- Handles difficult failure cases by providing teacher scaffolds and asking the student to rewrite them concisely, enabling internalization of complex logic
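
A minimal sketch of how a correctness-first reward could feed group-relative advantages, to make the "hierarchical reward" idea concrete. The function names, the `brevity_weight` and `max_length` parameters, and the exact reward shape are assumptions for illustration; the summary does not give the paper's actual formula. The only parts taken from the text are that correctness takes priority over brevity and that GRPO compares completions sampled for the same prompt.

```python
from statistics import mean, pstdev


def hierarchical_reward(is_correct: bool, length: int, max_length: int,
                        brevity_weight: float = 0.5) -> float:
    """Hypothetical reward: correctness gates the brevity bonus."""
    if not is_correct:
        # A wrong answer earns nothing, however short it is.
        return 0.0
    # Brevity term in [0, 1]; shorter outputs score higher.
    brevity = max(0.0, 1.0 - length / max_length)
    # With brevity_weight < 1, any correct answer outranks any wrong one.
    return 1.0 + brevity_weight * brevity


def group_relative_advantages(rewards: list[float],
                              eps: float = 1e-6) -> list[float]:
    """GRPO-style advantage: standardize rewards within one sampled group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four completions sampled for one prompt: (is_correct, token_length).
group = [(True, 120), (True, 300), (False, 60), (False, 400)]
rewards = [hierarchical_reward(ok, n, max_length=400) for ok, n in group]
advantages = group_relative_advantages(rewards)
```

In this toy group the short correct completion receives the largest advantage, the long correct one a smaller positive advantage, and both wrong completions the same negative advantage regardless of length.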

Architecture

The three-stage BRIDGE curriculum framework
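
As an illustration of the reconstruction part of the curriculum, the sketch below builds one training example by masking and shuffling a teacher's reasoning steps. The `MASK` token, the `mask_ratio` parameter, the function name, and the input/target layout are all hypothetical; the summary only states that the student reconstructs shuffled and masked teacher chains.

```python
import random

MASK = "[MASK]"  # hypothetical placeholder token, not taken from the paper


def make_reconstruction_example(steps: list[str], mask_ratio: float = 0.3,
                                seed: int = 0) -> dict:
    """Mask some teacher steps, shuffle the rest, keep the original as target."""
    if not steps:
        raise ValueError("steps must be non-empty")
    rng = random.Random(seed)
    # Always hide at least one step so the task is never trivial.
    n_mask = max(1, round(mask_ratio * len(steps)))
    masked_idx = set(rng.sample(range(len(steps)), n_mask))
    corrupted = [MASK if i in masked_idx else s for i, s in enumerate(steps)]
    # Shuffling removes positional cues, so order must be inferred from content.
    rng.shuffle(corrupted)
    return {"input": corrupted, "target": list(steps)}


teacher_steps = [
    "Let x be the number of apples.",
    "Then 2x + 3 = 11.",
    "So 2x = 8.",
    "Therefore x = 4.",
]
example = make_reconstruction_example(teacher_steps)
```

The student would be trained to emit `example["target"]` given `example["input"]`, which requires recovering both the hidden step and the original ordering rather than copying the teacher verbatim.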

Evaluation Highlights

- +11.29% accuracy on GSM8K with Qwen2.5-3B-Base over standard distillation baselines

- 27.4% reduction in output length while maintaining or improving reasoning accuracy

- Successfully compresses 96.83% of teacher solutions on difficult cases when the student is given structural scaffolds

Breakthrough Assessment

8/10

Significantly improves small-model reasoning while shortening outputs, which lowers inference cost. The curriculum approach directly targets the capacity mismatch that undermines standard distillation.