📝 Paper Summary

Reinforcement Learning from Human Feedback (RLHF)

Reward Modeling

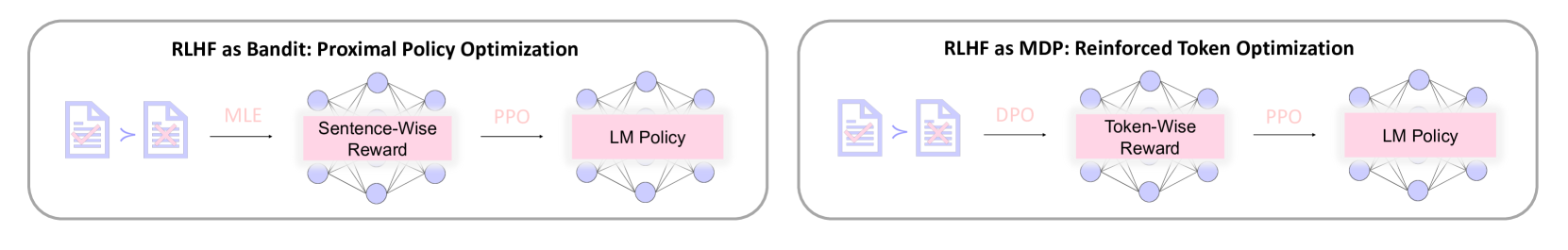

RTO improves language model alignment by deriving dense, token-wise rewards from preference data using DPO's formulation and optimizing them with PPO, treating generation as a Markov Decision Process rather than a Bandit.

Core Problem

Classical RLHF formulations treat generation as a Bandit problem with sparse sentence-level rewards, causing a mismatch with PPO (which is designed for MDPs with step-wise rewards).

Why it matters:

- Current PPO implementations typically assign the learned reward only to the final token (EOS), leading to sparse feedback signals

- Sparse rewards make PPO training unstable and sample-inefficient, so aligned models fall short of the performance that is theoretically attainable

- While token-wise feedback is theoretically superior, collecting human annotations for every token is impractical, leaving dense reward construction under-explored

Concrete Example:

In standard implementations like OpenRLHF or TRL, the learned semantic reward is zero for all tokens except the last one. RTO replaces this with a non-zero reward for every token derived from the implicit reward function of DPO.
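The contrast can be made concrete with a minimal Python sketch. It is an illustration under stated assumptions, not code from OpenRLHF, TRL, or the RTO authors: the function names, the log-probability values, the sentence-level reward of 0.8, and `beta = 0.1` are all hypothetical, and the dense reward shown is only the log-ratio term implied by DPO's implicit reward.

```python
def sparse_rewards(num_tokens, sentence_reward):
    """Bandit-style assignment: the whole learned reward sits on the last token."""
    return [0.0] * (num_tokens - 1) + [sentence_reward]


def dense_rewards(logp_dpo, logp_ref, beta=0.1):
    """Token-wise implicit reward: beta * (log pi_dpo - log pi_ref) for each token."""
    return [beta * (d - r) for d, r in zip(logp_dpo, logp_ref)]


# Hypothetical per-token log-probabilities of one 4-token response
# under a DPO-trained model and under the reference model.
logp_dpo = [-1.0, -0.5, -2.0, -0.2]
logp_ref = [-1.5, -0.8, -1.0, -0.7]

sparse = sparse_rewards(len(logp_dpo), sentence_reward=0.8)
dense = dense_rewards(logp_dpo, logp_ref, beta=0.1)

print("sparse:", sparse)  # only the final position carries signal
print("dense: ", dense)   # every position carries signal, positive or negative
```

In the sparse case PPO has to propagate one number back through the whole sequence via its value function; in the dense case each token receives its own feedback, including negative feedback for tokens the DPO model considers less likely than the reference does.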

Key Novelty

Reinforced Token Optimization (RTO)

- Models RLHF explicitly as a Markov Decision Process (MDP) to capture fine-grained token-wise information rather than sentence-level summaries

- Leverages DPO (Direct Preference Optimization) not as a standalone policy optimizer, but as a mechanism to extract implicit token-wise rewards from offline preference data

- Injects these dense, DPO-derived token rewards into PPO training, combining the stability of trust-region methods with the dense feedback of direct preference formulations
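As a sketch of why DPO can serve as a token-level reward extractor, recall the standard DPO identity relating the reward to the optimal policy $\pi^{*}$ and the reference policy $\pi_{\mathrm{ref}}$, where $Z(x)$ depends only on the prompt $x$:

$$
r(x, y) = \beta \log \frac{\pi^{*}(y \mid x)}{\pi_{\mathrm{ref}}(y \mid x)} + \beta \log Z(x)
$$

Because both policies are autoregressive, the log-ratio splits into a sum over the $H$ tokens of the response $y = (y_1, \dots, y_H)$:

$$
\beta \log \frac{\pi^{*}(y \mid x)}{\pi_{\mathrm{ref}}(y \mid x)} = \sum_{h=1}^{H} \beta \log \frac{\pi^{*}(y_h \mid x, y_{<h})}{\pi_{\mathrm{ref}}(y_h \mid x, y_{<h})}
$$

Each summand is a natural candidate for a per-token reward, with a DPO-trained policy $\pi_{\mathrm{dpo}}$ standing in for $\pi^{*}$:

$$
r_h = \beta \log \frac{\pi_{\mathrm{dpo}}(y_h \mid x, y_{<h})}{\pi_{\mathrm{ref}}(y_h \mid x, y_{<h})}
$$

The notation here ($\pi_{\mathrm{dpo}}$, $r_h$, $H$) is chosen for illustration. This summary does not state the exact reward the paper feeds to PPO, so any additional terms or coefficients should be taken from the paper itself.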

Architecture

The pipeline of the RTO algorithm (described in text)

Evaluation Highlights

- Outperforms PPO by 7.5 points on the AlpacaEval 2 benchmark

- Surpasses PPO by 4.1 points on the Arena-Hard benchmark

- Matches PPO-level performance using only 1/8 of the training data, demonstrating superior sample efficiency

Breakthrough Assessment

8/10

Addresses a fundamental formulation mismatch in RLHF (Bandit vs. MDP) by bridging DPO and PPO. The reported benchmark gains and data-efficiency results are significant.