📝 Paper Summary

Reinforcement Learning Theory

Policy Gradient Methods

The paper proves PPO convergence by modeling it as an approximate gradient ascent method with cyclic data reuse and identifies a weighting error in truncated Generalized Advantage Estimation.

Core Problem

PPO lacks a theoretical foundation that accounts for its cyclic data reuse (epochs) and surrogate clipping, while standard truncated GAE implementations suffer from incorrect weight summation at episode boundaries.

Why it matters:

- PPO is a dominant Deep RL algorithm, yet the evidence for its stability and convergence is largely empirical rather than grounded in rigorous theory

- Without such theory, it is unclear why PPO's specific design choices (like data reuse across epochs) work without causing divergence

- The overlooked GAE truncation issue introduces systematic bias in advantage estimation, potentially degrading performance in environments with strong terminal signals

Concrete Example:

In standard truncated Generalized Advantage Estimation (GAE), the geometric weights on the k-step advantage estimators do not sum to 1 when an episode ends after finitely many steps (tail-mass collapse): the remaining probability mass, which should be assigned to the longest available estimator, is effectively ignored.
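The arithmetic behind this example can be checked with a minimal sketch. It assumes the usual GAE weighting, in which the k-step estimator receives weight (1 - λ)λ^(k-1), and that only n estimators are available before the episode ends. The function names are hypothetical, and the correction shown simply moves the missing mass onto the longest estimator as described above; the paper's exact formulation may differ.

```python
def truncated_gae_weights(lam, n):
    """Geometric weights (1 - lam) * lam**(k - 1) for the k-step estimators, k = 1..n."""
    return [(1.0 - lam) * lam ** (k - 1) for k in range(1, n + 1)]


def residual_mass(lam, n):
    """Mass missing from the truncated weights; analytically equal to lam**n."""
    return 1.0 - sum(truncated_gae_weights(lam, n))


def tail_corrected_weights(lam, n):
    """Assumed correction: add the missing mass lam**n to the longest (n-step) estimator."""
    weights = truncated_gae_weights(lam, n)
    weights[-1] += lam ** n
    return weights


if __name__ == "__main__":
    lam, n = 0.95, 5  # illustrative values, not taken from the paper
    print("truncated sum:", sum(truncated_gae_weights(lam, n)))
    print("missing mass: ", residual_mass(lam, n))
    print("corrected sum:", sum(tail_corrected_weights(lam, n)))
```

With λ close to 1 and few remaining steps, the missing mass λ^n is large, which is consistent with the claim that the effect matters most near episode boundaries.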

Key Novelty

PPO as Approximate Ascent with GAE Correction

- Reinterprets PPO not as a trust-region method (TRPO) but as a cycle of one exact gradient step followed by multiple biased surrogate steps

- Applies Random Reshuffling (RR) theory to prove that PPO's multi-epoch updates on reused data converge despite the accumulating bias of the surrogate gradient

- Proposes a weight correction for finite-horizon GAE to prevent probability mass collapse at episode boundaries
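The "one exact step, then biased surrogate steps" reading can be illustrated with a toy sketch. It assumes a one-parameter Bernoulli policy with logit `theta`, a hand-written batch of (action, advantage) pairs, and the standard clipped surrogate objective; all names and values are hypothetical and this is not the paper's setup. At the start of a cycle the probability ratio is 1, so the clipped surrogate gradient coincides with the sample policy gradient; once `theta` moves away from `theta_old`, clipping can zero out terms and the two diverge.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def prob(theta, action):
    """Probability of `action` (0 or 1) under a Bernoulli policy with logit theta."""
    p1 = sigmoid(theta)
    return p1 if action == 1 else 1.0 - p1


def grad_log_prob(theta, action):
    """d/dtheta of log pi_theta(action) for the Bernoulli policy above."""
    return action - sigmoid(theta)


def policy_gradient(theta, batch):
    """Sample policy gradient: mean of advantage * grad log pi."""
    return sum(adv * grad_log_prob(theta, a) for a, adv in batch) / len(batch)


def clipped_surrogate_gradient(theta, theta_old, batch, eps=0.2):
    """Gradient of the mean of min(r * A, clip(r, 1 - eps, 1 + eps) * A)."""
    total = 0.0
    for a, adv in batch:
        ratio = prob(theta, a) / prob(theta_old, a)
        # The clipped branch is active (zero gradient) only in these two cases.
        clipped_out = (adv > 0 and ratio > 1 + eps) or (adv < 0 and ratio < 1 - eps)
        if not clipped_out:
            total += adv * ratio * grad_log_prob(theta, a)
    return total / len(batch)


def ppo_cycle(theta_old, batch, epochs=3, minibatch_size=2, lr=0.1, seed=0):
    """One cycle: reuse the same batch for several randomly reshuffled epochs."""
    rng = random.Random(seed)
    theta = theta_old
    for _ in range(epochs):
        order = list(range(len(batch)))
        rng.shuffle(order)  # fresh permutation of the reused data each epoch
        for start in range(0, len(order), minibatch_size):
            minibatch = [batch[i] for i in order[start:start + minibatch_size]]
            theta += lr * clipped_surrogate_gradient(theta, theta_old, minibatch)
    return theta


if __name__ == "__main__":
    batch = [(1, 0.5), (0, -1.0), (1, 2.0)]  # (action, advantage), illustrative
    print(policy_gradient(0.3, batch), clipped_surrogate_gradient(0.3, 0.3, batch))
    print(ppo_cycle(0.3, batch))
```

In this sketch only the very first minibatch step of a cycle uses an unclipped, ratio-1 gradient; every later step reuses data collected under `theta_old`, which is the source of the bias that the convergence analysis has to control.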

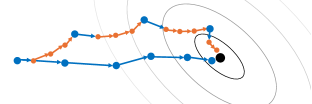

Architecture

Visualization of the PPO update cycle compared to A2C

Evaluation Highlights

- Theoretical proof: Derived explicit bias bounds for the PPO clipped surrogate gradient relative to the true policy gradient

- Theoretical proof: Established convergence guarantees for PPO's cyclic update scheme under standard smoothness assumptions

- Qualitative result: Identified that a simple weight correction for GAE yields substantial improvements in environments with strong terminal signals (e.g., Lunar Lander)

Breakthrough Assessment

7/10

Significant theoretical contribution providing missing convergence proofs for a widely used algorithm. The identification of the GAE tail-mass collapse is a practical insight, though empirical validation in the text is limited.