📝 Paper Summary

Offline Reinforcement Learning

Reinforcement Learning via Sequence Modeling (RvS)

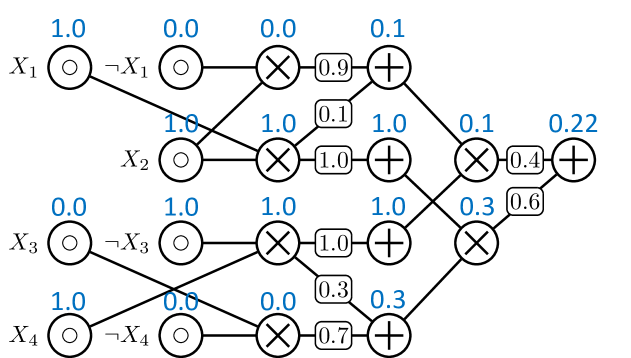

Tractable Probabilistic Models

Trifle replaces standard sequence models in offline RL with Probabilistic Circuits, enabling exact and efficient computation of high-return conditional probabilities that intractable models can only approximate.

Core Problem

Existing RvS methods use expressive but intractable models (like Transformers) that cannot efficiently or exactly compute the conditional probabilities needed to sample high-return actions during evaluation.

Why it matters:

- Sequence models often learn useful information during training but fail to elicit it during evaluation due to approximation errors in conditional generation

- Standard beam search or sampling approximations undermine the benefits of expressive models, leading to suboptimal actions even when the model 'knows' better

- Handling stochastic environments requires marginalizing over future states, which is computationally intractable for autoregressive models like GPTs

Concrete Example:

When the labeled return-to-go (RTG) is suboptimal, an agent must estimate the expected return of a new action sequence. An autoregressive model has to sample many future trajectories to approximate this expectation, which is costly and high-variance, whereas Trifle computes it exactly by marginalizing over future states.
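The contrast between sampling and exact marginalization can be illustrated with a toy model. The sketch below is not the paper's implementation: it assumes a hypothetical three-component mixture over a discrete action, next state, and return (all parameter values are made up), and compares a closed-form expected return with a Monte Carlo estimate drawn from the same model.

```python
import random

# Hypothetical toy model (illustrative values only): one sum node over three
# product nodes, each factorizing over action A, next state S', and return R.
WEIGHTS = [0.5, 0.3, 0.2]
P_ACTION = [[0.8, 0.2], [0.3, 0.7], [0.5, 0.5]]                  # p_k(a)
P_STATE = [[0.6, 0.4], [0.1, 0.9], [0.5, 0.5]]                   # p_k(s')
RETURNS = [0.0, 1.0, 2.0]                                        # support of R
P_RETURN = [[0.7, 0.2, 0.1], [0.1, 0.3, 0.6], [0.2, 0.6, 0.2]]   # p_k(r)


def exact_expected_return(action):
    """E[R | A=action], with the next state S' summed out exactly."""
    num, den = 0.0, 0.0
    for w, pa, ps, pr in zip(WEIGHTS, P_ACTION, P_STATE, P_RETURN):
        # Marginalizing S' just sums its leaf distribution (which equals 1).
        joint_a = w * pa[action] * sum(ps)
        mean_r = sum(r * p for r, p in zip(RETURNS, pr))
        num += joint_a * mean_r
        den += joint_a
    return num / den


def sampled_expected_return(action, n_samples, rng):
    """Monte Carlo estimate of the same quantity, for comparison."""
    # Unnormalized posterior over mixture components given the action.
    post = [w * pa[action] for w, pa in zip(WEIGHTS, P_ACTION)]
    total = 0.0
    for _ in range(n_samples):
        k = rng.choices(range(len(WEIGHTS)), weights=post)[0]
        total += rng.choices(RETURNS, weights=P_RETURN[k])[0]
    return total / n_samples


if __name__ == "__main__":
    rng = random.Random(0)
    print("exact:", exact_expected_return(0))
    for n in (10, 100, 10000):
        print(n, "samples:", sampled_expected_return(0, n, rng))
```

The exact query costs one pass over the mixture components, while the sampled estimate fluctuates around it and only tightens as the sample count grows.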

Key Novelty

Trifle (Tractable Inference for Offline RL)

- Replaces the standard Transformer backbone in RvS with a Probabilistic Circuit (PC), a class of generative models that supports exact probabilistic queries

- Leverages the tractability of PCs to exactly compute the conditional probability of actions given a target high return, rather than relying on heuristic approximations

- Enables exact marginalization over future states, allowing accurate value estimation even in stochastic environments where standard models struggle
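To make the idea of conditioning actions on a high return concrete, here is a minimal sketch under stated assumptions: a hypothetical mixture over a discrete action and a discrete return (the parameters are invented for illustration), where the event "return is at least a threshold" is handled by summing the return leaf over that event. It shows the kind of exact query a tractable model supports and is not Trifle's actual inference procedure.

```python
# Hypothetical toy model (illustrative values only): a sum node over three
# product nodes, each factorizing over action A and return R.
WEIGHTS = [0.5, 0.3, 0.2]
P_ACTION = [[0.8, 0.2], [0.3, 0.7], [0.5, 0.5]]                  # p_k(a)
RETURNS = [0.0, 1.0, 2.0]                                        # support of R
P_RETURN = [[0.7, 0.2, 0.1], [0.1, 0.3, 0.6], [0.2, 0.6, 0.2]]   # p_k(r)


def action_posterior_given_high_return(threshold):
    """Exact p(A=a | R >= threshold) for every action a."""
    n_actions = len(P_ACTION[0])
    scores = []
    for a in range(n_actions):
        joint = 0.0
        for w, pa, pr in zip(WEIGHTS, P_ACTION, P_RETURN):
            # Probability of the event {R >= threshold} under this leaf.
            tail = sum(p for r, p in zip(RETURNS, pr) if r >= threshold)
            joint += w * pa[a] * tail
        scores.append(joint)  # p(A=a, R >= threshold)
    z = sum(scores)           # p(R >= threshold)
    return [s / z for s in scores]


if __name__ == "__main__":
    print("no conditioning:", action_posterior_given_high_return(0.0))
    print("R >= 2:", action_posterior_given_high_return(2.0))
```

In this toy model, action 0 is more likely a priori, but conditioning on the highest return shifts probability mass toward action 1, with no sampling or search involved.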

Architecture

Illustration of Probabilistic Circuits (PCs) compared to neural networks. A PC is built from sum nodes (mixtures) and product nodes (factorizations) over simple leaf distributions, which is what keeps inference tractable.

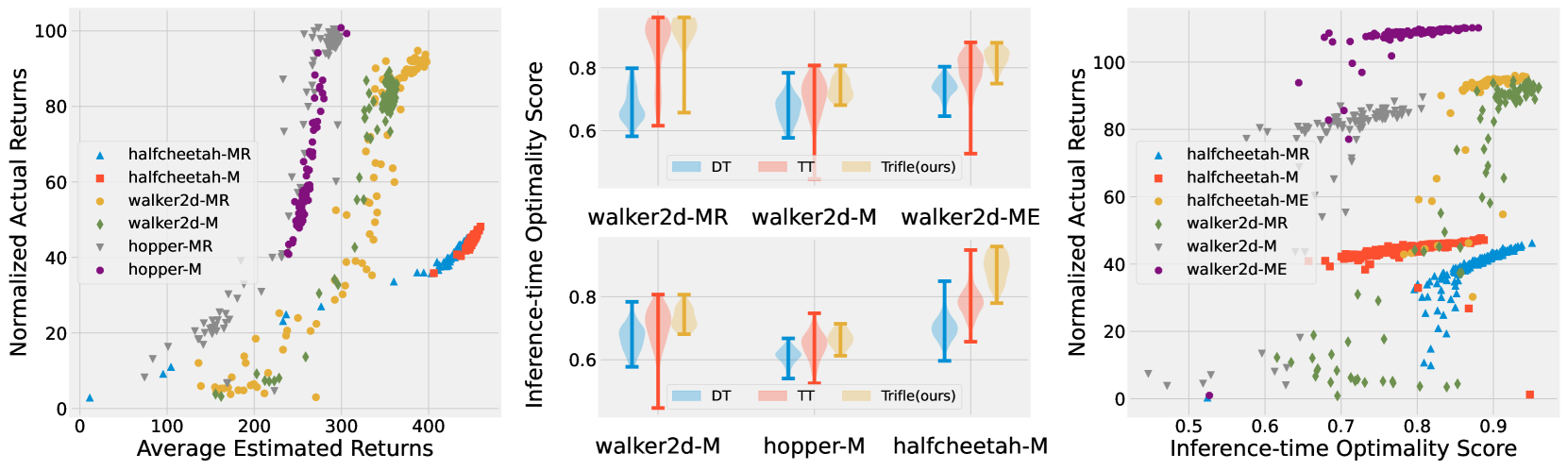
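The sum/product structure described above can be sketched in a few lines. This is a generic, simplified illustration with hypothetical class names (`Leaf`, `Product`, `Sum`) and made-up parameters, not the architecture used in the paper. It shows why marginal queries are cheap: a variable without evidence is summed out by letting its leaf return 1, so any marginal takes a single bottom-up pass.

```python
from dataclasses import dataclass
from typing import Dict, List, Union


@dataclass
class Leaf:
    var: str            # name of the variable this leaf models
    probs: List[float]  # categorical distribution over its values


@dataclass
class Product:
    children: List["Node"]  # children cover disjoint sets of variables


@dataclass
class Sum:
    weights: List[float]    # mixture weights, summing to 1
    children: List["Node"]  # children cover the same set of variables


Node = Union[Leaf, Product, Sum]


def evaluate(node: Node, evidence: Dict[str, int]) -> float:
    """Probability of the evidence; unobserved variables are marginalized."""
    if isinstance(node, Leaf):
        if node.var not in evidence:
            return 1.0  # summing a categorical over all values gives 1
        return node.probs[evidence[node.var]]
    if isinstance(node, Product):
        result = 1.0
        for child in node.children:
            result *= evaluate(child, evidence)
        return result
    return sum(w * evaluate(c, evidence)
               for w, c in zip(node.weights, node.children))


# A two-component mixture over binary variables X and Y (illustrative values).
pc = Sum(
    weights=[0.6, 0.4],
    children=[
        Product([Leaf("X", [0.9, 0.1]), Leaf("Y", [0.2, 0.8])]),
        Product([Leaf("X", [0.3, 0.7]), Leaf("Y", [0.5, 0.5])]),
    ],
)

if __name__ == "__main__":
    joint = evaluate(pc, {"X": 0, "Y": 1})   # p(X=0, Y=1)
    marginal = evaluate(pc, {"X": 0})        # p(X=0), Y summed out
    print("p(Y=1 | X=0) =", joint / marginal)
```

A conditional probability is then just the ratio of two such passes, which is the property the summary contrasts with autoregressive models that must sample to answer the same question.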

Evaluation Highlights

- Achieves state-of-the-art scores on 7 out of 9 Gym-MuJoCo benchmarks, outperforming strong baselines like Decision Transformer and IQL

- Significant performance gains in stochastic environments (up to a 70% improvement over Decision Transformer in Stochastic Hopper)

- Demonstrates superior safety in constrained RL tasks by exactly enforcing safe action constraints during inference without retraining

Breakthrough Assessment

8/10

Identifies a fundamental inference bottleneck in sequence-modeling RL and successfully applies Tractable Probabilistic Models to solve it, yielding state-of-the-art results. Effectively bridges two distinct subfields.