📝 Paper Summary

Sim2Real Transfer

Model-Based Reinforcement Learning (MBRL)

Bi-level Optimization

A bi-level reinforcement learning framework that adapts simulator parameters by differentiating through the in-simulation policy gradient to directly maximize real-world performance.

Core Problem

Policies trained in simulation often fail in the real world (Sim2Real gap) because current methods optimize proxies like simulator accuracy or robustness rather than real-world policy performance.

Why it matters:

- Standard prediction-accuracy metrics for simulators often correlate poorly with the performance of the policy trained on them, a problem known as 'objective mismatch'.

- Robustness approaches (e.g., domain randomization) improve stability but do not guarantee optimality, often sacrificing peak performance for safety.

- Perfectly replicating complex real-world stochastic distributions in simulation is theoretically impossible, requiring methods that find optimal policies despite imperfect models.

Concrete Example:

Consider a robot trained in a simulator that predicts the physics with high accuracy on average but mismodels friction noise. The agent might learn a policy that relies on precise movements, and those movements fail under real-world noise. Conversely, a less 'accurate' simulator tuned via this method might exaggerate friction, forcing the agent to learn a robust gait that actually performs better in reality.

Key Novelty

Bi-level Policy Gradient for Sim2Real (Bi-level RL)

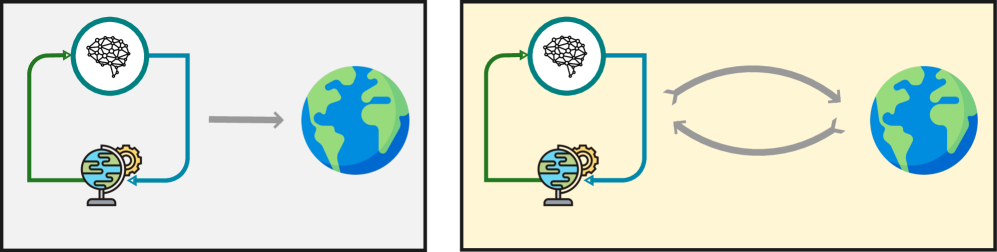

- Frames Sim2Real as a bi-level optimization: the inner loop trains a policy in simulation, while the outer loop updates simulator parameters based on that policy's real-world performance.

- Derives the sensitivity of the in-simulation policy parameters with respect to simulator parameters using the Implicit Function Theorem on the Stochastic Policy Gradient (SPG) condition.

- Allows the outer loop to update simulator dynamics and rewards to maximize real-world returns directly, rather than just matching real-world data observations.
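The sensitivity described in the second bullet can be sketched as follows. The notation is an assumption made for illustration and is not taken from the paper: $\theta$ denotes policy parameters, $\phi$ simulator parameters, $J_{\text{sim}}$ the in-simulation return, and $J_{\text{real}}$ the real-world return. The sketch also assumes that the inner loop has converged to a stationary point and that the Hessian $\nabla^2_{\theta\theta} J_{\text{sim}}$ is invertible there.

```latex
% Inner-loop stationarity (the policy-gradient condition at convergence):
\nabla_\theta J_{\text{sim}}\big(\theta^*(\phi), \phi\big) = 0

% Differentiating both sides with respect to \phi (Implicit Function Theorem):
\nabla^2_{\theta\theta} J_{\text{sim}} \, \frac{d\theta^*}{d\phi}
  + \nabla^2_{\theta\phi} J_{\text{sim}} = 0
\quad\Longrightarrow\quad
\frac{d\theta^*}{d\phi}
  = -\big(\nabla^2_{\theta\theta} J_{\text{sim}}\big)^{-1}
     \nabla^2_{\theta\phi} J_{\text{sim}}

% Outer-loop gradient of real-world performance via the chain rule:
\nabla_\phi J_{\text{real}}\big(\theta^*(\phi)\big)
  = \left(\frac{d\theta^*}{d\phi}\right)^{\!\top}
    \nabla_\theta J_{\text{real}}\big(\theta^*(\phi)\big)
```

Under these assumptions, the outer loop never needs the simulator to match real-world observations; it only needs the direction in which changing $\phi$ moves the trained policy toward higher real-world return.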

Architecture

Conceptual flow of the Bi-level RL framework
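As a rough illustration of this flow, the toy sketch below alternates an inner loop (train the policy in simulation) with an outer loop (update the simulator parameter using the real-world gradient and the implicit-function sensitivity). Everything in it is a hypothetical stand-in rather than the paper's implementation: the scalar policy parameter `theta`, the scalar simulator parameter `phi`, the quadratic returns, and the constants `A` and `THETA_REAL` are chosen only so the example runs in closed form. In practice, `real_grad` would have to be estimated from real-world rollouts.

```python
# Toy bi-level loop. All functions and constants are illustrative assumptions.

A = 2.0           # curvature of the simulated return (hypothetical)
THETA_REAL = 1.5  # policy parameter that is optimal in the "real" system (hypothetical)


def sim_grad(theta, phi):
    """Gradient w.r.t. theta of J_sim = -0.5 * A * theta**2 + phi * theta."""
    return -A * theta + phi


def real_grad(theta):
    """Gradient w.r.t. theta of J_real = -(theta - THETA_REAL)**2."""
    return -2.0 * (theta - THETA_REAL)


def train_in_sim(phi, theta=0.0, lr=0.1, steps=200):
    """Inner loop: gradient ascent on J_sim until theta is near-stationary."""
    for _ in range(steps):
        theta += lr * sim_grad(theta, phi)
    return theta


def policy_sensitivity():
    """Implicit-function sensitivity d(theta*)/d(phi) = -H_tt^{-1} * H_tp.

    For this toy J_sim both second derivatives are constants.
    """
    hess_theta_theta = -A   # d^2 J_sim / d theta^2
    hess_theta_phi = 1.0    # d^2 J_sim / (d theta d phi)
    return -hess_theta_phi / hess_theta_theta


def bilevel_fit(phi=0.0, outer_lr=0.5, outer_steps=50):
    """Outer loop: move phi along the gradient of real-world return."""
    theta = 0.0
    for _ in range(outer_steps):
        theta = train_in_sim(phi, theta)                   # inner loop
        outer_grad = policy_sensitivity() * real_grad(theta)  # chain rule
        phi += outer_lr * outer_grad                       # simulator update
    theta = train_in_sim(phi, theta)  # retrain under the final simulator
    return phi, theta


if __name__ == "__main__":
    phi, theta = bilevel_fit()
    print(f"simulator parameter phi = {phi:.4f}, policy parameter theta = {theta:.4f}")
```

In this toy, the simulator parameter settles where the in-simulation optimum `phi / A` coincides with `THETA_REAL`, so the simulator is tuned for the policy it induces rather than for predictive accuracy.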

Breakthrough Assessment

7/10

Strong theoretical contribution: the paper derives the gradients needed for bi-level optimization in Sim2Real without assuming a specific policy structure (unlike prior work). However, the provided text does not include extensive empirical validation.