📝 Paper Summary

LLM Post-Training

Reinforcement Learning from Human Feedback (RLHF)

Policy Optimization

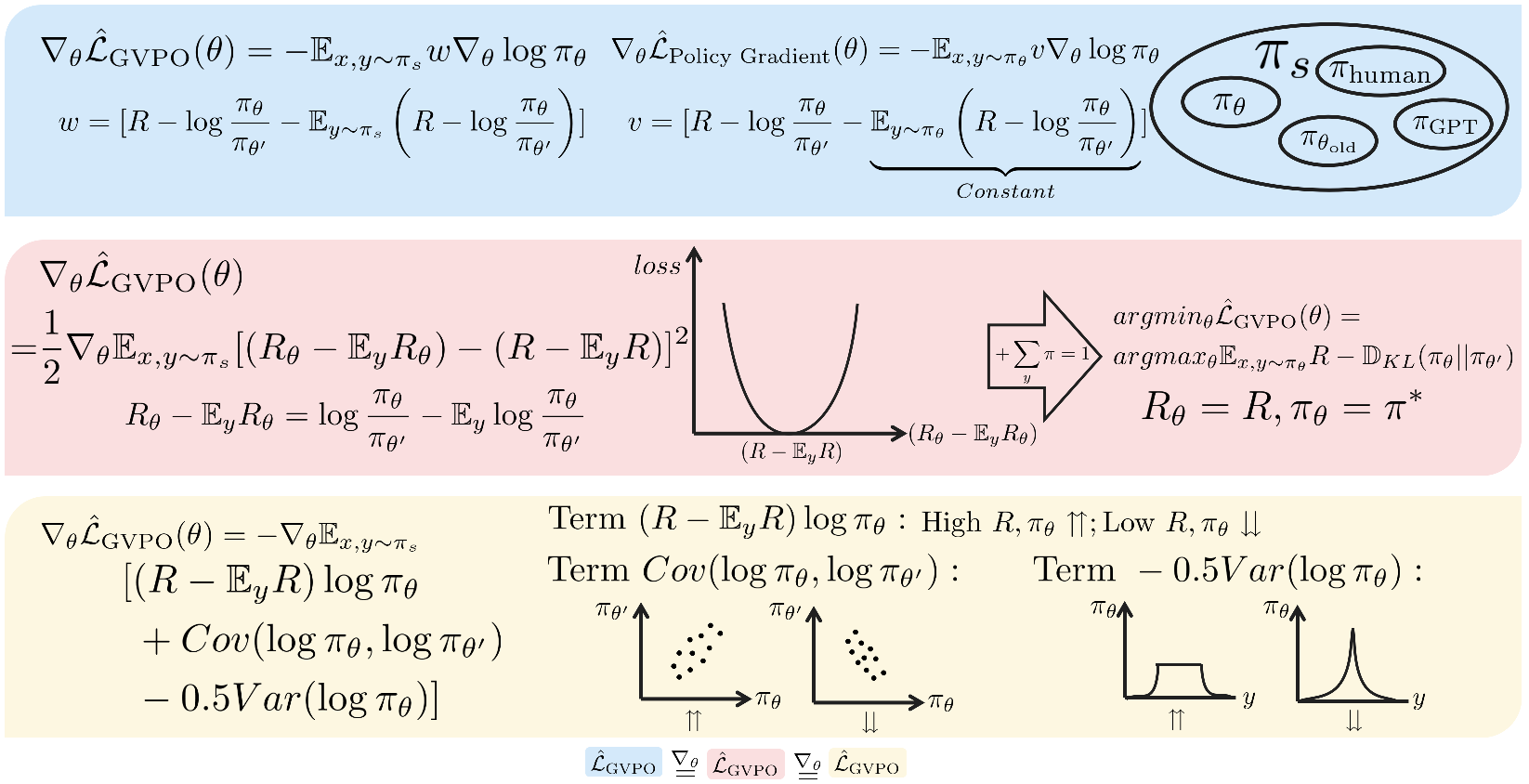

GVPO is a post-training algorithm that optimizes policies using a variance-based loss derived from the closed-form solution of KL-constrained reward maximization, enabling stable off-policy training without importance sampling.

Core Problem

Existing post-training methods like GRPO suffer from training instability due to sensitivity to hyperparameters and issues with importance sampling when the policy deviates from the reference.

Why it matters:

- GRPO is highly sensitive to clip thresholds and KL coefficients, limiting robustness.

- On-policy methods are sample inefficient, while standard off-policy methods risk gradient explosion via unbounded importance weights.

- DPO often fails to converge to the true optimal policy due to inherent flaws in the Bradley-Terry model.

Concrete Example:

In GRPO, if the current policy deviates significantly from the old policy, the importance weight (ratio of probabilities) becomes excessively large or small, causing gradient explosion. GVPO avoids this ratio entirely.

Key Novelty

Group Variance Policy Optimization (GVPO)

- Uses a zero-sum weighting scheme within prompt groups to cancel out the intractable partition function from the optimal policy's closed-form solution.

- Interprets the gradient as minimizing the mean squared error between the central distance of implicit rewards and actual rewards.

- Decouples the sampling distribution from the learned policy, allowing off-policy training without importance sampling weights.

Architecture

Conceptual illustration of GVPO's gradient computation and loss decomposition.

Evaluation Highlights

- Outperforms PPO and GRPO on mathematical reasoning tasks (GSM8K) and general chat benchmarks (MT-Bench, AlpacaEval 2).

- Achieves higher win rates against GPT-4 compared to DPO and IPO on the HH-RLHF dataset.

- Demonstrates superior training stability and lower variance in gradients compared to GRPO.

Breakthrough Assessment

8/10

Offers a theoretically grounded solution that unifies the benefits of DPO (closed-form optimality) and PPO/GRPO (explicit reward maximization) while solving the partition function problem. The off-policy capability without importance sampling is a significant structural advantage.