📝 Paper Summary

Reasoning Consistency

Symbolic Reasoning

Analogical Learning

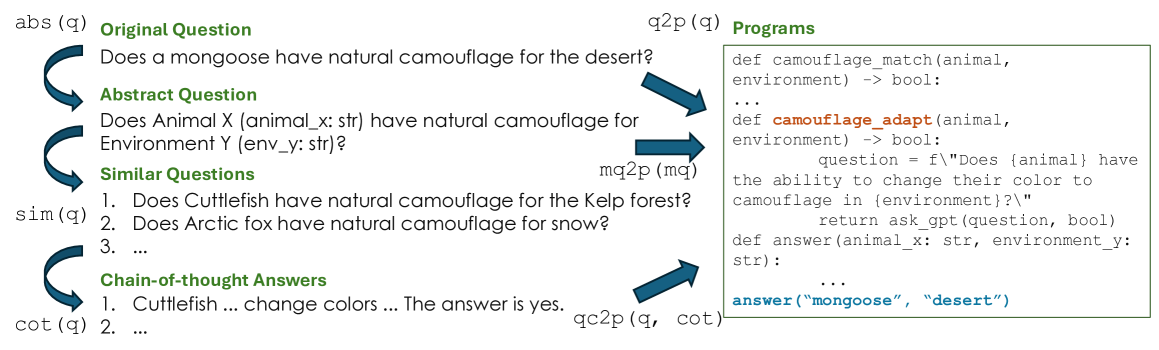

Sal improves reasoning consistency by training models to generate abstract symbolic programs from self-generated analogous questions, transferring successful reasoning patterns from familiar to rare scenarios.

Core Problem

Large language models suffer from reasoning inconsistency, failing on unfamiliar questions even when they can solve structurally identical questions involving common entities.

Why it matters:

- Inconsistency prevents deployment in mission-critical tasks like medical chatbots requiring trustworthy decision-making

- Even advanced models like OpenAI o1 fail on rare cases despite knowing the relevant facts, showing that memorization doesn't equal robust reasoning capability

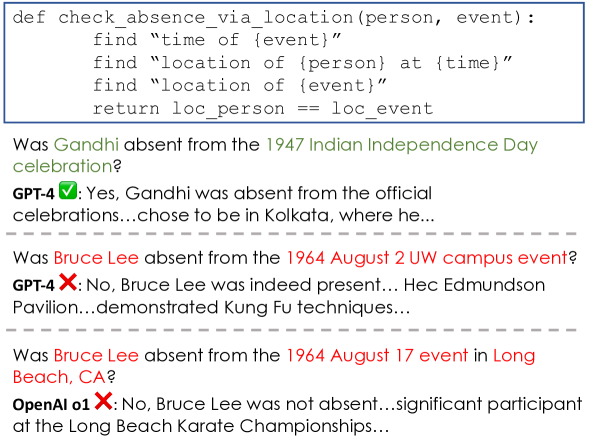

Concrete Example:

A model correctly answers 'Is Donald Trump in New York?' (common entity) but fails on 'Is [Less Common Person] in [Location]?' even though it knows the person's location, simply because the entity combination is rare.

Key Novelty

Self-supervised Analogical Learning (Sal)

- Conceptualization: Generates abstract versions of a hard question, creates many easier similar questions, solves them to get symbolic programs, and uses those programs as supervision for the original hard question.

- Simplification: Decomposes complex math problems into simpler sub-questions, iteratively building a 'known conditions' set to generate high-quality symbolic programs for self-supervision.

Architecture

The Conceptualization extraction pipeline.

Evaluation Highlights

- Outperforms base language models and Chain-of-Thought baselines by 2% to 20% across StrategyQA, GSM8K, and HotpotQA benchmarks.

- Simplification method increases the yield of high-confidence self-supervision programs from 25.3% to 44.3% on GSM8K math questions.

- Demonstrates improved generalizability and controllability due to the use of symbolic programmatic solutions.

Breakthrough Assessment

8/10

Strong conceptual contribution in using self-generated analogies for supervision. Addresses a critical LLM weakness (consistency) with significant empirical gains (up to 20%) without requiring external human labels.