📝 Paper Summary

Financial Time Series Forecasting

Mixture Density Networks (MDN)

Volatility Modeling

Pretraining only the linear components of a Recurrent Mixture Density Network, then unfreezing the non-linear ones, helps training avoid bad local minima and numerical instability.

Core Problem

Recurrent Mixture Density Networks (RMDNs) are notoriously difficult to train, often getting stuck in bad local minima or failing due to numerical instability (persistent NaN values).

Why it matters:

- Financial time series exhibit complex dynamics like heteroskedasticity and fat tails that simple linear models cannot fully capture

- Advanced non-linear models like RMDNs frequently fail to outperform their simpler linear counterparts (GARCH) due to optimization difficulties

- The 'persistent NaN problem' makes training these networks unreliable for practical financial forecasting
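For reference, the linear baseline mentioned above is usually written as a GARCH(1,1) recursion. The form below is the standard textbook one; the symbols ($r_t$ for the return, $\varepsilon_t$ for the shock, $\omega, \alpha, \beta$ for the coefficients) are notation assumed here, not taken from the paper.

$$
r_t = \mu + \varepsilon_t, \qquad \varepsilon_t = \sigma_t z_t, \qquad
\sigma_t^2 = \omega + \alpha\,\varepsilon_{t-1}^2 + \beta\,\sigma_{t-1}^2,
\qquad \omega > 0,\ \alpha \ge 0,\ \beta \ge 0
$$

The model is "linear" in the sense that the conditional variance $\sigma_t^2$ is a linear function of the previous squared shock and the previous variance. Time-varying $\sigma_t^2$ is what captures heteroskedasticity.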

Concrete Example:

When an RMDN is trained on stock returns without pretraining, the loss frequently evaluates to NaN (Not a Number), or the optimizer gets stuck and converges to a solution worse than a standard GARCH model.
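The summary does not say where the non-finite values originate, so the sketch below only illustrates one common source in mixture density models in general: evaluating the mixture likelihood directly underflows when a predicted standard deviation is very small relative to the residual. The function names and the numbers are hypothetical, and the log-sum-exp variant is a generic numerical remedy, not the paper's method (which is pretraining).

```python
import numpy as np

def naive_mixture_nll(y, weights, means, sigmas):
    """Gaussian-mixture negative log-likelihood computed from raw densities."""
    z = (y - means) / sigmas
    dens = weights * np.exp(-0.5 * z ** 2) / (sigmas * np.sqrt(2.0 * np.pi))
    with np.errstate(divide="ignore"):  # silence the log(0) warning
        return -np.log(dens.sum())

def stable_mixture_nll(y, weights, means, sigmas):
    """Same quantity, computed in log space with the log-sum-exp trick."""
    z = (y - means) / sigmas
    log_comp = (np.log(weights) - np.log(sigmas)
                - 0.5 * np.log(2.0 * np.pi) - 0.5 * z ** 2)
    m = log_comp.max()
    return -(m + np.log(np.exp(log_comp - m).sum()))

# Hypothetical two-component mixture with very small predicted volatilities.
weights = np.array([0.6, 0.4])
means = np.array([0.0, 0.0])
tiny_sigmas = np.array([1e-3, 2e-3])
y = 0.5  # an observation far out in the tails of both components

naive = naive_mixture_nll(y, weights, means, tiny_sigmas)    # every density underflows to 0
stable = stable_mixture_nll(y, weights, means, tiny_sigmas)  # remains finite
print(naive, stable)
```

Once a loss value like the naive one enters backpropagation, the resulting gradients are typically non-finite as well, which is one way a single bad step can contaminate every later update.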

Key Novelty

Linear Pretraining for ELU-RMDN

- Initialize the neural network architecture such that it contains a nested linear model (equivalent to GARCH) within its hidden layers

- Freeze all non-linear nodes and train only the linear nodes first to reach a stable baseline performance

- Unfreeze the non-linear nodes to allow the model to learn complex patterns starting from this stable, linear solution
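The three steps above can be sketched on a toy problem. This is not the paper's ELU-RMDN: it is a one-dimensional regression with a linear path and a single ELU unit, chosen only to show the freeze-then-unfreeze schedule. All names (`predict`, `train`, the `trainable` mask) are hypothetical, the zero-initialised output weight `v` is an assumed way of making the model start out as its nested linear model, and finite-difference gradients are used purely to keep the example short.

```python
import numpy as np

def elu(z):
    # Exponential Linear Unit: z for z > 0, exp(z) - 1 otherwise.
    return np.where(z > 0, z, np.exp(np.minimum(z, 0.0)) - 1.0)

def predict(params, x):
    # params = [a, b, u, c, v]: linear path a*x + b, non-linear path v * elu(u*x + c).
    a, b, u, c, v = params
    return a * x + b + v * elu(u * x + c)

def loss(params, x, y):
    return np.mean((predict(params, x) - y) ** 2)

def num_grad(params, x, y, h=1e-6):
    # Central finite differences; adequate for a five-parameter toy model.
    grad = np.zeros_like(params)
    for i in range(len(params)):
        step = np.zeros_like(params)
        step[i] = h
        grad[i] = (loss(params + step, x, y) - loss(params - step, x, y)) / (2 * h)
    return grad

def train(params, x, y, trainable, lr=0.05, steps=500):
    # Gradient descent in which the 0/1 mask `trainable` freezes parameters.
    params = params.copy()
    for _ in range(steps):
        params -= lr * trainable * num_grad(params, x, y)
    return params

rng = np.random.default_rng(0)
x = rng.normal(size=200)
y = 0.8 * x + 0.1 + 0.5 * elu(x)  # synthetic target with a mild non-linearity

# v = 0 silences the non-linear path, so the initial model is exactly linear.
init = np.array([0.0, 0.0, 1.0, 0.0, 0.0])

linear_only = np.array([1.0, 1.0, 0.0, 0.0, 0.0])  # stage 1: train a, b only
everything = np.ones(5)                            # stage 2: unfreeze all

stage1 = train(init, x, y, linear_only)
stage2 = train(stage1, x, y, everything)

loss_init, loss_stage1, loss_stage2 = (loss(p, x, y) for p in (init, stage1, stage2))
print(loss_init, loss_stage1, loss_stage2)
```

Stage 2 starts from the fitted linear solution rather than from a random point, which is the idea the bullets describe: the non-linear parameters can only improve on a baseline that is already stable.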

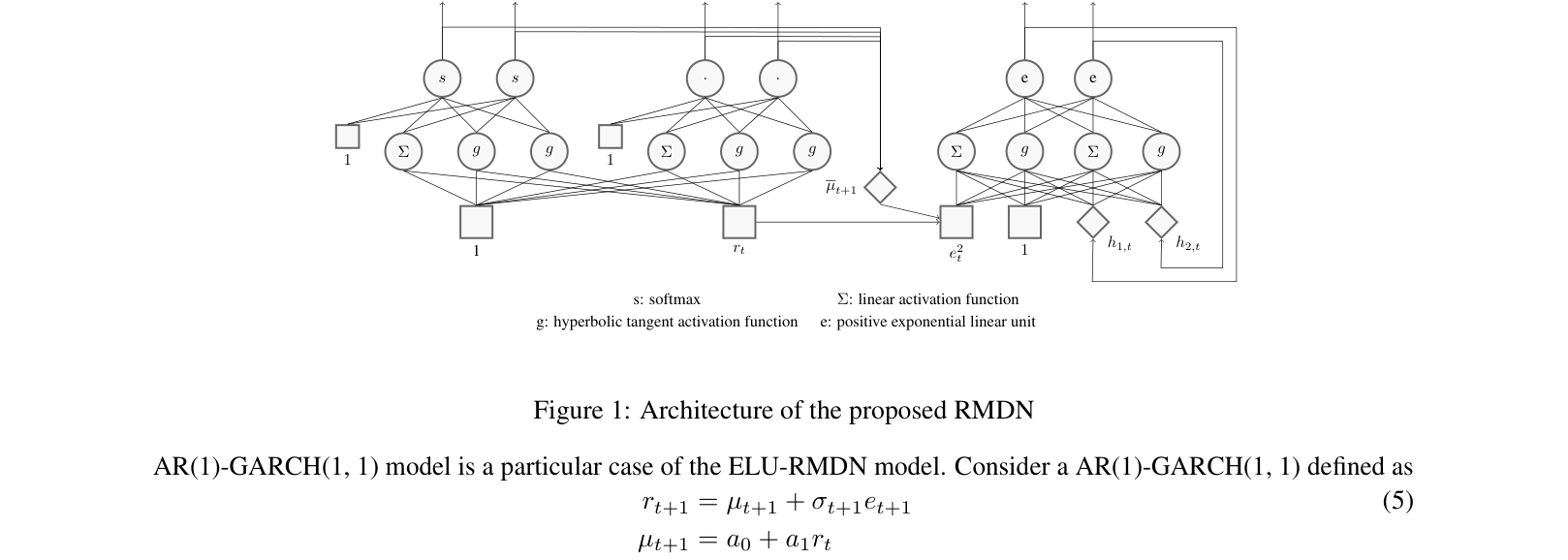

Architecture

The architecture of the proposed ELU-RMDN, detailing the connections for mean, variance, and mixture weights.
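As a reading aid for the three outputs named in the caption, a mixture density network typically parameterises a conditional density of the following form. Gaussian components and the notation ($K$ components, $\mathcal{F}_{t-1}$ for the information available up to time $t-1$) are assumptions made here for illustration; the summary does not specify them.

$$
p(r_t \mid \mathcal{F}_{t-1}) = \sum_{k=1}^{K} \pi_{k,t}\, \mathcal{N}\!\left(r_t;\ \mu_{k,t},\ \sigma_{k,t}^2\right),
\qquad \pi_{k,t} \ge 0,\quad \sum_{k=1}^{K} \pi_{k,t} = 1,\quad \sigma_{k,t}^2 > 0
$$

Here $\mu_{k,t}$, $\sigma_{k,t}^2$ and $\pi_{k,t}$ correspond to the mean, variance and mixture-weight outputs. A mixture of components with different variances is one way such a model can represent fat tails.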

Evaluation Highlights

- 100% convergence rate for the pretrained model across 10 stocks, compared to only 31% for the standard training method

- Achieves a lower negative log-likelihood (better fit) than the GARCH baseline for 9 of the 10 stocks tested

- Completely eliminates the 'persistent NaN' numerical instability problem observed in the baseline RMDN

Breakthrough Assessment

4/10

A practical engineering fix for a specific model class (RMDN) in finance. While effective for stability, it is an incremental improvement on existing architectures rather than a fundamental theoretical breakthrough.