📝 Paper Summary

Decision Foundation Models

Offline Reinforcement Learning

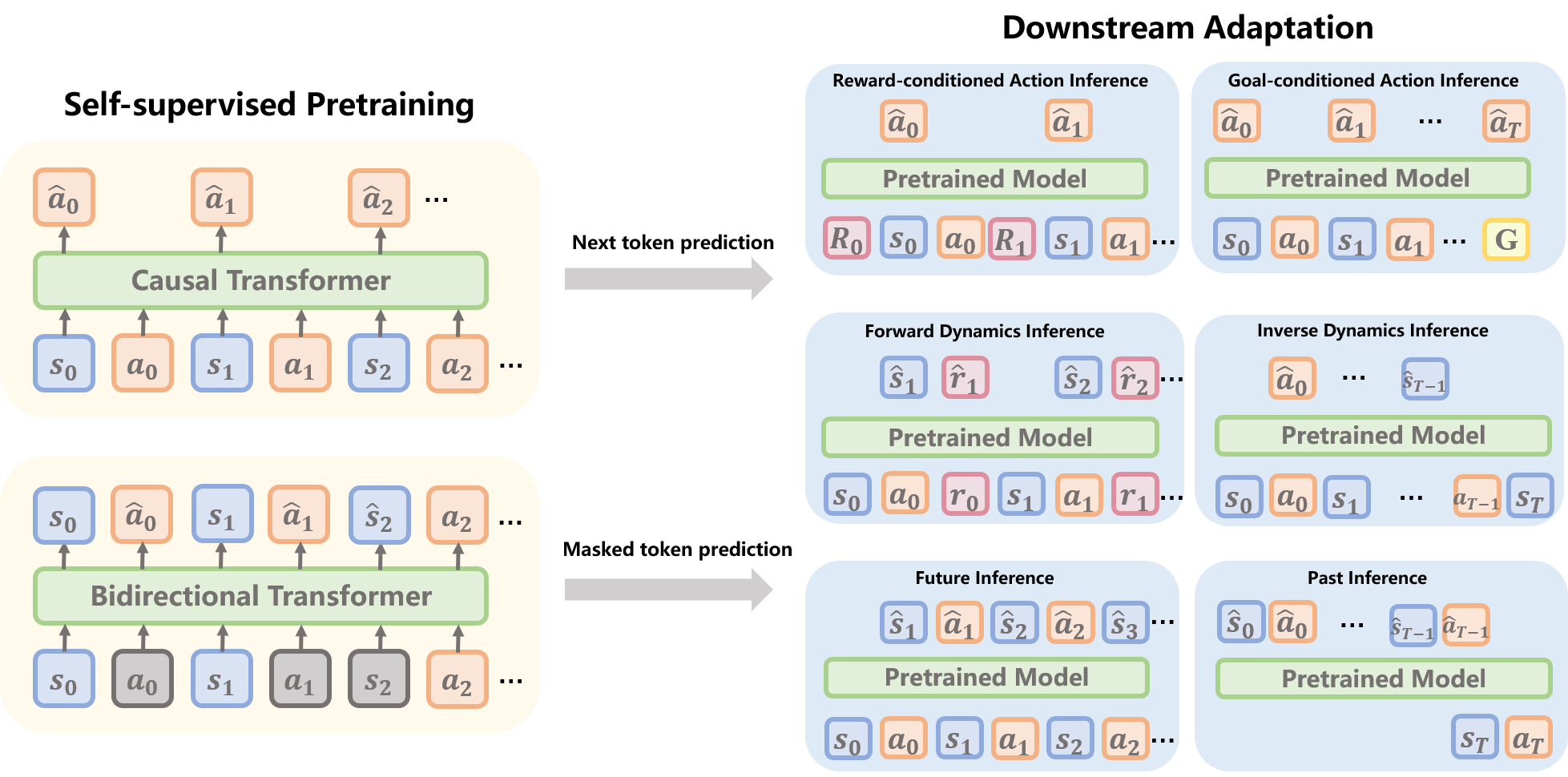

The paper formulates a 'Pretrain-Then-Adapt' pipeline for decision-making: Transformer-based models first learn generic representations from diverse offline trajectories via self-supervision, and are then adapted to downstream tasks with improved sample efficiency.

Core Problem

Traditional RL agents are task-specific and sample-inefficient, often requiring millions of environment interactions to learn each task from scratch. Offline RL avoids online interaction but struggles with error propagation and does not transfer flexibly across diverse tasks.

Why it matters:

- Real-world decision tasks (robotics, traffic control) are expensive to simulate or interact with online, making sample efficiency critical.

- Current agents generalize poorly: a model trained on one Atari game typically cannot play another, whereas pretrained NLP and CV models can often handle new tasks zero-shot.

- Vast amounts of sub-optimal, unlabeled offline trajectory data exist but are underutilized by reward-dependent RL algorithms.

Concrete Example:

In Atari, a standard RL agent must learn visual features and game dynamics from scratch for every new game (e.g., Breakout vs. Pong). The proposed pipeline would instead pretrain on a large dataset of diverse game logs to learn shared physics and causal structure (e.g., 'ball bounces off paddle'), so that the agent can adapt to a new game from only a few demonstrations.

Key Novelty

Pretrain-Then-Adapt Pipeline for Decision Foundation Models

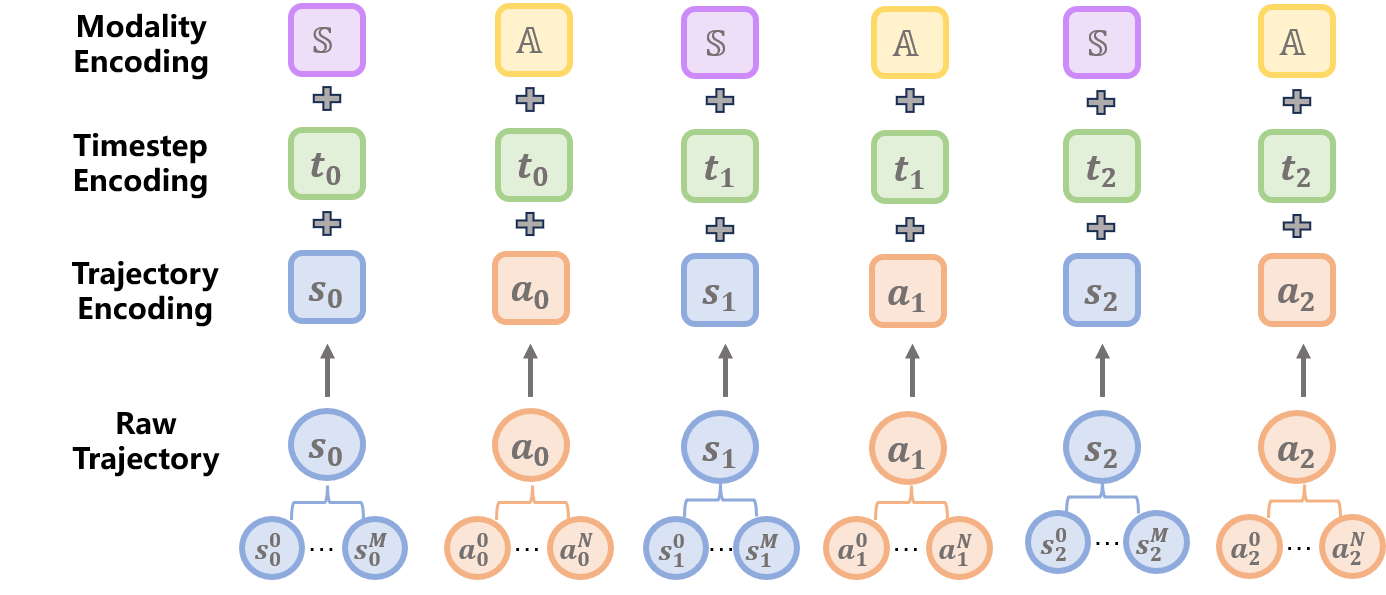

- Formalizes decision-making as a sequence modeling problem where a representation function maps raw trajectories (states, actions, rewards) to latent embeddings.

- Decouples generic knowledge acquisition (temporal dynamics, causality) via self-supervised pretraining from task-specific policy learning.

- Unifies diverse tokenization strategies (modality-level vs. dimension-level) and objectives (next-token vs. masked-prediction) into a single framework.
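
The tokenization and objective choices listed above can be made concrete with a small sketch. This is an illustration under stated assumptions, not the paper's implementation: the trajectory layout, the function names (`modality_level_tokens`, `dimension_level_tokens`, `next_token_pairs`, `masked_prediction_example`), and the `MASK` placeholder are all hypothetical, and tokens are kept as plain tuples rather than learned embeddings.

```python
from typing import Dict, List, Sequence, Tuple

# Hypothetical layout: one timestep is (state vector, action vector, scalar reward).
Step = Tuple[Sequence[float], Sequence[float], float]
# Hypothetical token: (kind, timestep, values). A real model would embed these.
Token = Tuple[str, int, Tuple[float, ...]]
MASK: Token = ("[MASK]", -1, ())


def modality_level_tokens(traj: Sequence[Step]) -> List[Token]:
    """Modality-level: one token per state, action, and reward at each timestep."""
    tokens: List[Token] = []
    for t, (state, action, reward) in enumerate(traj):
        tokens.append(("state", t, tuple(state)))
        tokens.append(("action", t, tuple(action)))
        tokens.append(("reward", t, (reward,)))
    return tokens


def dimension_level_tokens(traj: Sequence[Step]) -> List[Token]:
    """Dimension-level: one token per scalar dimension of each modality."""
    tokens: List[Token] = []
    for t, (state, action, reward) in enumerate(traj):
        tokens.extend((f"state[{i}]", t, (x,)) for i, x in enumerate(state))
        tokens.extend((f"action[{i}]", t, (x,)) for i, x in enumerate(action))
        tokens.append(("reward", t, (reward,)))
    return tokens


def next_token_pairs(tokens: Sequence[Token]) -> List[Tuple[List[Token], Token]]:
    """Next-token objective: predict token k from the causal prefix tokens[:k]."""
    return [(list(tokens[:k]), tokens[k]) for k in range(1, len(tokens))]


def masked_prediction_example(
    tokens: Sequence[Token], masked_positions: Sequence[int]
) -> Tuple[List[Token], Dict[int, Token]]:
    """Masked-prediction objective: hide chosen positions, predict them from the rest."""
    hidden = set(masked_positions)
    inputs = [MASK if i in hidden else tok for i, tok in enumerate(tokens)]
    targets = {i: tokens[i] for i in sorted(hidden)}
    return inputs, targets


# Toy two-step trajectory with a 3-D state and a 2-D action (made-up numbers).
toy = [((0.1, 0.2, 0.3), (1.0, 0.0), 0.0), ((0.2, 0.1, 0.4), (0.0, 1.0), 1.0)]
coarse = modality_level_tokens(toy)   # 3 tokens per timestep
fine = dimension_level_tokens(toy)    # 3 + 2 + 1 tokens per timestep
```

In this sketch the same trajectory yields a shorter sequence under modality-level tokenization and a longer one under dimension-level tokenization, and either sequence can feed either objective, which is the sense in which the two choices are independent axes of one framework.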

Architecture

The 'Pretrain-Then-Adapt' pipeline for Decision Foundation Models.

Breakthrough Assessment

4/10

A structured survey and position paper that systematizes the emerging field of Decision Foundation Models. It organizes existing literature well but does not present a new model or empirical breakthrough itself.