📝 Paper Summary

Language Model Pretraining

Synthetic Data Generation

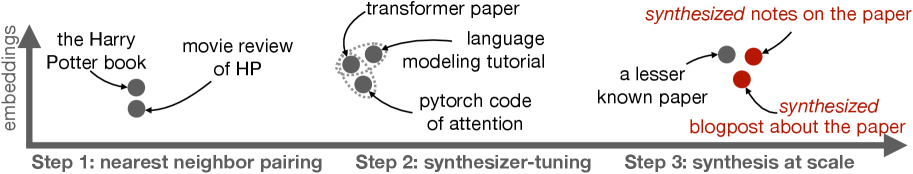

Synthetic Bootstrapped Pretraining (SBP) improves pretraining by learning a conditional synthesizer from the pretraining corpus itself. The synthesizer generates new documents conditioned on related ones, capturing latent conceptual connections that standard independent-document modeling misses.

Core Problem

Standard pretraining treats documents as independent samples. This ignores the rich semantic correlations between related texts (e.g., a paper and its code), and it runs into a 'scaling wall' as high-quality data is used up.

Why it matters:

- High-quality internet text is rapidly depleting, creating a bottleneck for scaling frontier models

- Current methods miss the 'inter-document' signal (how one document relates to or inspires another), which contains valuable structural and logical information

- Existing synthetic data methods often rely on external 'teacher' models (distillation), which is not viable when the goal is self-improvement on a fixed corpus

Concrete Example:

Under standard pretraining, a research paper on Transformers and a Python script implementing the attention mechanism are treated as unrelated data points. SBP explicitly pairs them and learns to synthesize the code given the paper (or vice versa), which pushes the model to abstract the concept the two documents share.

Key Novelty

Self-Bootstrapped Conditional Synthesis

- Identifies related document pairs within the pretraining corpus using approximate nearest neighbor search

- Trains a conditional 'synthesizer' model on these pairs to predict one document given the other

- Generates a massive synthetic corpus by sampling seed documents and running the synthesizer, effectively multiplying the data with learned variations
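The pairing step that feeds the synthesizer can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: the bag-of-words `embed` function and the brute-force search stand in for learned document embeddings and approximate nearest neighbor search, and every name here (`embed`, `find_pairs`, `to_training_examples`, the `threshold` value) is hypothetical.

```python
import math
from collections import Counter


def embed(text):
    """Toy bag-of-words vector (assumption: a stand-in for a learned embedding)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def find_pairs(docs, threshold=0.3):
    """Step 1: pair each document with its most similar neighbor.

    Brute force for clarity; a real corpus would need an approximate index.
    Documents with no neighbor above `threshold` are left unpaired.
    """
    vecs = [embed(d) for d in docs]
    pairs = []
    for i, vi in enumerate(vecs):
        best_j, best_sim = None, threshold
        for j, vj in enumerate(vecs):
            if i == j:
                continue
            sim = cosine(vi, vj)
            if sim > best_sim:
                best_j, best_sim = j, sim
        if best_j is not None:
            pairs.append((i, best_j))
    return pairs


def to_training_examples(docs, pairs):
    """Step 2 input: (condition, target) texts for the conditional synthesizer."""
    return [{"condition": docs[i], "target": docs[j]} for i, j in pairs]


docs = [
    "attention mechanism in transformer models",
    "python code for the attention mechanism",
    "recipe for sourdough bread",
]
pairs = find_pairs(docs)
examples = to_training_examples(docs, pairs)
# Step 3 (not shown): sample seed documents, run the trained synthesizer on
# each, and add the generated documents to the pretraining mix.
```

Here the two attention-related documents pair with each other, while the unrelated recipe stays unpaired because no neighbor clears the similarity threshold.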

Architecture

The three-step workflow of Synthetic Bootstrapped Pretraining (SBP).

Evaluation Highlights

- Closes up to 60% of the performance gap between a data-constrained model and an 'oracle' model trained on 20x more unique data

- Consistently outperforms a strong repetition baseline (looping over existing data) across 3B and 6B parameter scales

- Qualitative analysis shows synthesized documents abstract core concepts rather than just paraphrasing (e.g., applying a concept to a new genre)
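The 'performance gap closed' figure in the first highlight can be read as a ratio of improvements. The sketch below assumes the metric is (SBP - baseline) / (oracle - baseline) on a score where higher is better. The function name and the numbers in the usage lines are illustrative placeholders, not results from the paper.

```python
def gap_closed(baseline, sbp, oracle):
    """Fraction of the baseline-to-oracle gap recovered by SBP.

    Assumes higher scores are better and that the oracle differs from the
    baseline; both are assumptions about how the metric is defined.
    """
    gap = oracle - baseline
    if gap == 0:
        raise ValueError("oracle and baseline scores are identical")
    return (sbp - baseline) / gap


# Placeholder scores for illustration only (not taken from the paper).
fraction = gap_closed(baseline=0.40, sbp=0.46, oracle=0.50)
print(f"gap closed: {fraction:.0%}")
```

A value of 1.0 would mean SBP matches the oracle trained on far more unique data, and 0.0 would mean no improvement over the data-constrained baseline.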

Breakthrough Assessment

8/10

Proposes a statistically principled way to scale data without external teachers. The finding that self-synthesized data captures latent concepts better than simple repetition is significant for the 'scaling wall' problem of depleting high-quality data.