📝 Paper Summary

Neural Code Generation

Chain-of-Thought (CoT) Reasoning

Lightweight Language Models (LLMs)

COTTON enables lightweight language models (<10B parameters) to generate high-quality Chain-of-Thought reasoning steps that significantly improve code generation performance without relying on massive server-grade models.

Core Problem

Lightweight models (<10B parameters) fail to generate high-quality Chain-of-Thought (CoT) reasoning independently, while existing CoT methods require manual effort or computationally expensive 100B+ parameter models.

Why it matters:

- Deploying 100B+ models is financially and computationally impractical for individual users or resource-constrained environments (e.g., single GPU)

- Lightweight models can execute code generation but lack the reasoning capability to plan complex logic zero-shot

- Current CoT techniques are not optimized for smaller models, leaving a gap in accessible software engineering automation

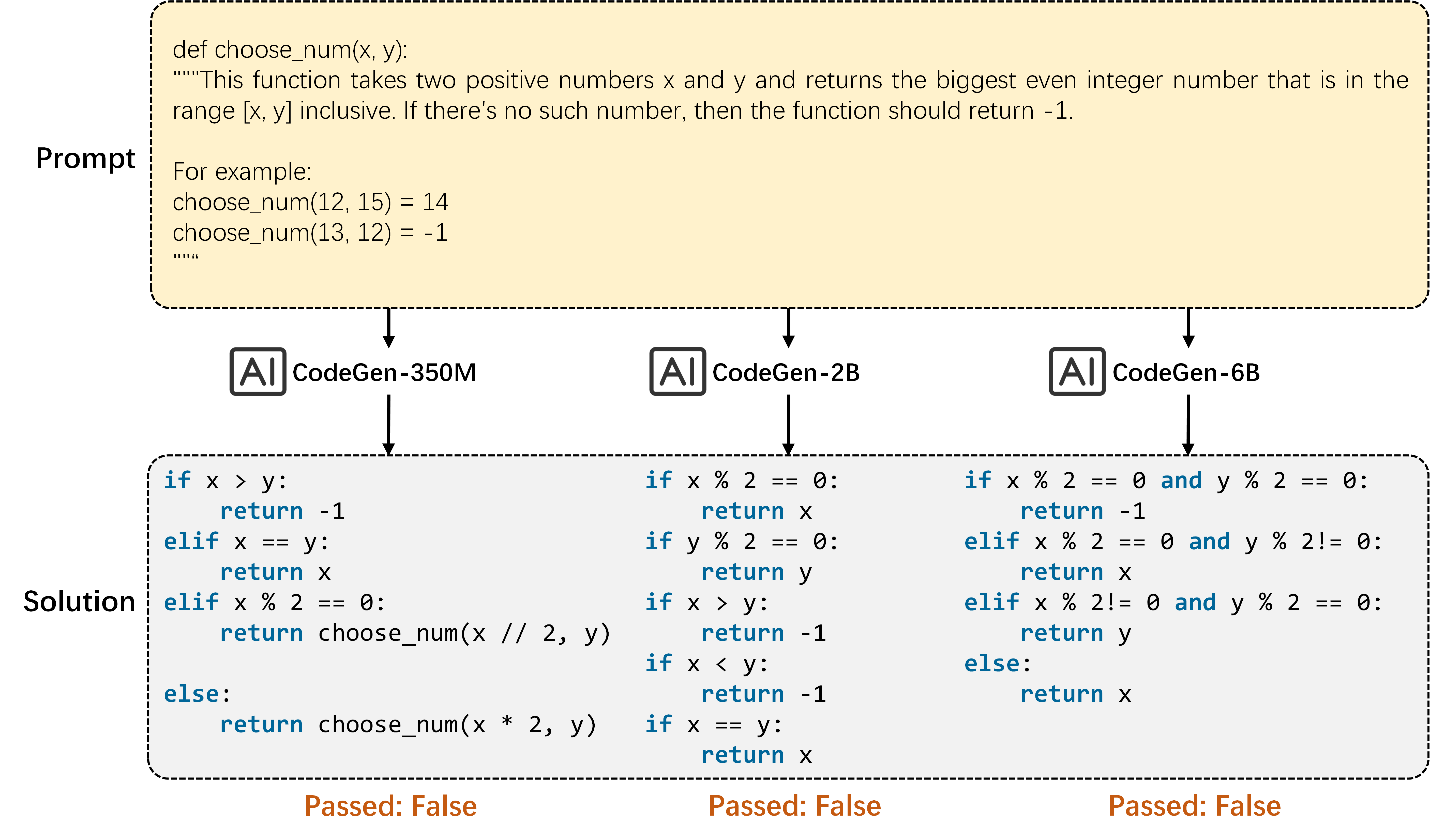

Concrete Example:

In the 'choose_num' task (finding the largest even integer in an interval), CodeGen-350M/2B/6B fail to generate correct code with a standard prompt. However, when provided with a specific CoT (Step 1: initialize variable; Step 2: loop details; Step 3: return), they successfully generate the correct solution.

Key Novelty

COTTON (ChainOfThoughTcOde geNeration)

- Distills CoT generation capabilities into a lightweight model (CodeLlama-7B) using a synthesized dataset (CodeCoT-9k) created via multi-agent alignment with ChatGPT

- Decouples reasoning from coding: a dedicated lightweight CoT model generates a plan, which then guides a separate (or the same) lightweight code model to generate the solution

- Demonstrates that small models can't *create* good CoT zero-shot but can *use* them effectively if they are generated by a specialized peer model

Architecture

A motivation example comparing standard prompting vs. CoT prompting for a lightweight model (CodeGen) on the 'choose_num' task.

Evaluation Highlights

- Boosts CodeT5+ 6B pass@1 accuracy on HumanEval-plus from 26.83% to 43.90%, outperforming the gains provided by 130B parameter models like ChatGLM

- Improves CodeT5+ 6B pass@1 on the newly constructed OpenEval benchmark from 20.22% to 35.39%

- StarCoder-7B guided by COTTON outperforms the larger StarCoder-16B in zero-shot scenarios

Breakthrough Assessment

7/10

Strong practical contribution for resource-constrained code generation. Successfully enables small models to perform reasoning tasks typically reserved for giants, though the underlying technique (LoRA fine-tuning on synthetic data) is standard.