📝 Paper Summary

Theoretical Computability of LLMs

Mechanistic Interpretability

Chain of Thought prompting enables autoregressive Transformers to approximate recurrent computation, allowing them to overcome their architectural constant-depth limitations and solve tasks requiring sequential reasoning.

Core Problem

Transformers utilize parallel attention mechanisms that eliminate recurrent connections, theoretically limiting their computational depth to O(1) and making them incapable of solving inherently sequential tasks like counting or parity.

Why it matters:

- Basic reasoning tasks like multiplication and string reversal remain difficult for even advanced Large Language Models (LLMs) due to these architectural constraints.

- Current understanding of Chain of Thought (CoT) is largely psychological; a mathematical framework is needed to explain why it unlocks capabilities previously thought impossible for Transformers.

Concrete Example:

Calculating the n-th Fibonacci number requires the previous state (n-1). An autoregressive model seeing only the output token 'True' (partial observation) lacks the full hidden state information needed to compute the next step, whereas a recurrent model maintains the full computational state.

Key Novelty

Chain of Thought as Approximated Recurrence

- Formalizes the distinction between Autoregression (generating from partial observations) and Recurrence (generating from full hidden states).

- Proposes that Chain of Thought (CoT) acts as a bridge, allowing Transformers to simulate recurrence by outputting intermediate computational steps into the context window.

- Introduces 'Recurrence-Completeness' to evaluate whether model architectures (like Linear Transformers or RWKV) can truly simulate recurrent state machines.

Architecture

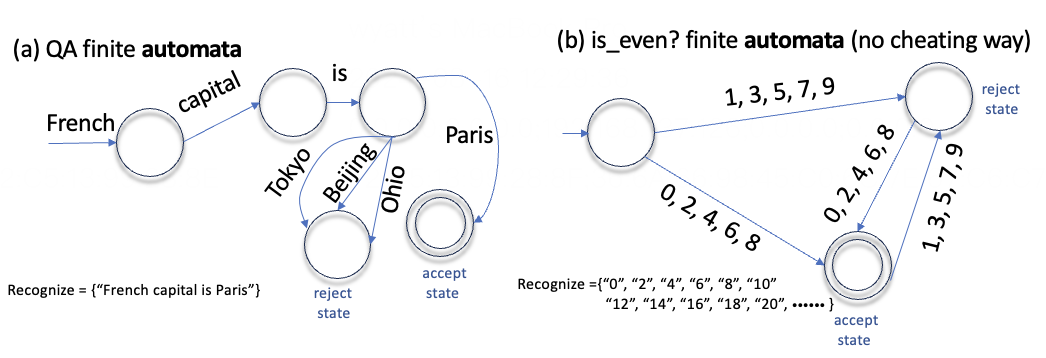

A conceptual diagram of a Finite Automata (State Machine) processing an input string.

Breakthrough Assessment

7/10

Provides a foundational theoretical explanation for why Chain of Thought works, linking it to automata theory and computational complexity, though the provided text lacks empirical validation.