📝 Paper Summary

Prompt Engineering

Reasoning

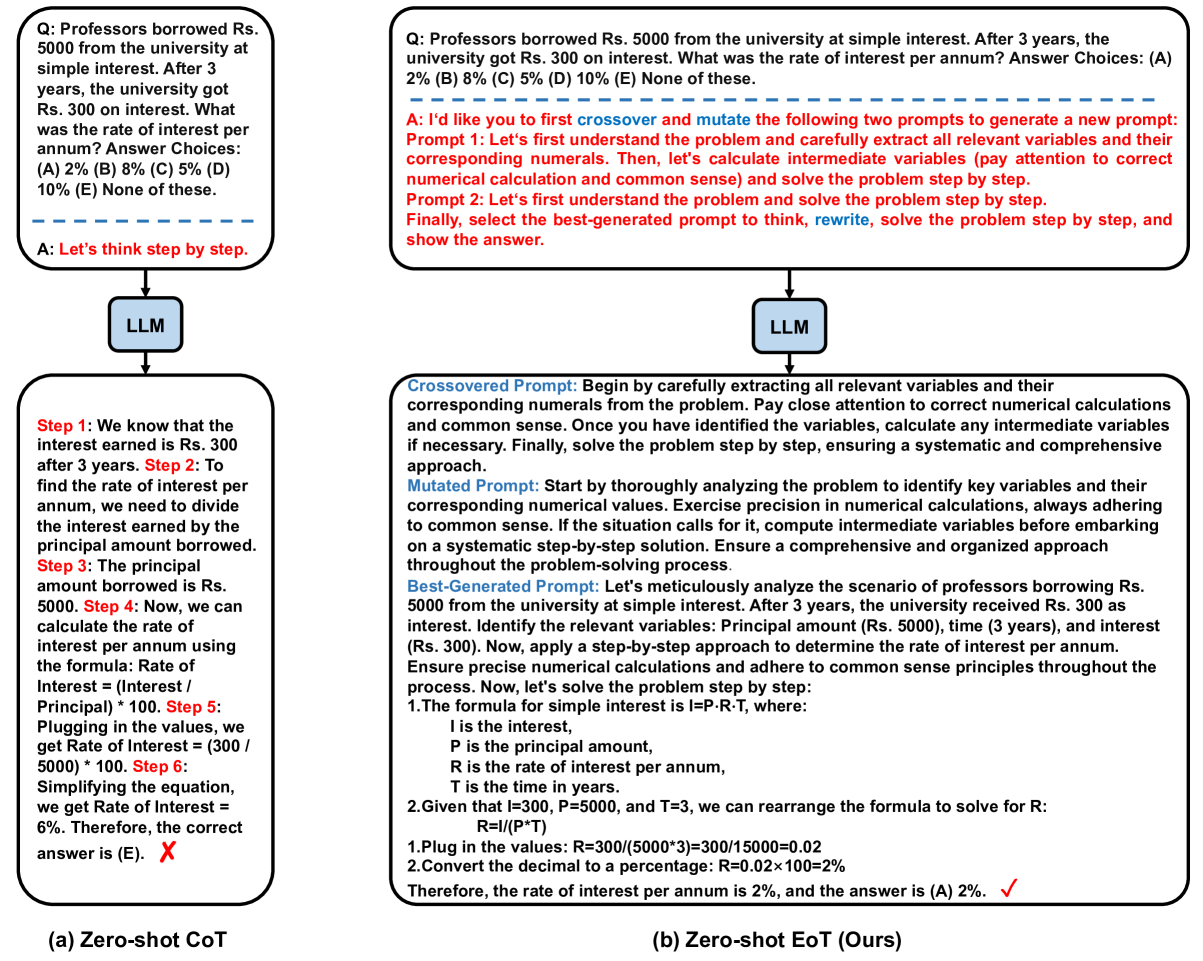

Zero-shot EoT improves LLM reasoning by dynamically evolving and selecting problem-specific prompts using evolutionary algorithms (crossover and mutation) and applying prompt-guided problem rewriting.

Core Problem

Existing Zero-Shot Chain-of-Thought (CoT) methods use a uniform prompt (e.g., 'Let's think step by step') for all task instances, ignoring that optimal prompts often vary depending on the specific input problem.

Why it matters:

- Pre-trained LLMs are sensitive to linguistic nuances; a static prompt may work well for some instances but fail for others due to prefix evolution during pre-training

- Using identical prompts across diverse problems limits the model's ability to adapt its reasoning strategy to specific problem complexities

Concrete Example:

For a math word problem about interest rates, a generic 'Let's think step by step' prompt might yield a shallow analysis. In contrast, an evolved prompt selected specifically for this problem might instruct the model to 'Identify relevant variables: Principal, time, interest' and rewrite the question to explicitly list these, leading to a correct solution.

Key Novelty

Zero-shot Evolutionary-of-Thought (EoT) Prompting

- Treats prompts as an evolving population: uses the LLM itself to perform 'crossover' (combining two prompts) and 'mutation' (modifying a prompt) to generate a diverse set of reasoning instructions

- Performs instance-specific adaptation: instead of a global prompt, the LLM selects the most suitable prompt from the evolved pool for the specific current problem

- Enhances comprehension via rewriting: uses the selected prompt to rewrite the original question, forcing the model to re-process and clarify the problem details before solving

Architecture

The complete pipeline of Zero-shot EoT Prompting

Evaluation Highlights

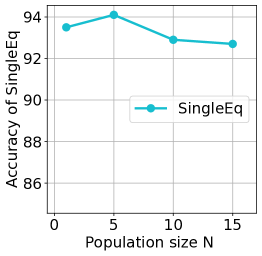

- Surpasses Few-shot Manual-CoT on 6 out of 6 arithmetic reasoning datasets using GPT-3.5-turbo, including a +21.8% accuracy gain on GSM8K (78.3% vs 56.5%)

- Outperforms standard Zero-shot CoT on SVAMP (+5.3% accuracy) and AQuA (+5.5% accuracy) benchmarks

- Achieves 99.0% accuracy on MultiArith, outperforming both Zero-shot CoT (94.0%) and Zero-shot Plan-and-Solve (97.3%)

Breakthrough Assessment

7/10

Demonstrates that zero-shot methods can outperform few-shot baselines by dynamically optimizing prompts per instance. The integration of evolutionary operators via LLMs is a novel mechanism for inference-time adaptation.