📝 Paper Summary

Chain of Thought (CoT) Prompting

In-Context Learning (ICL)

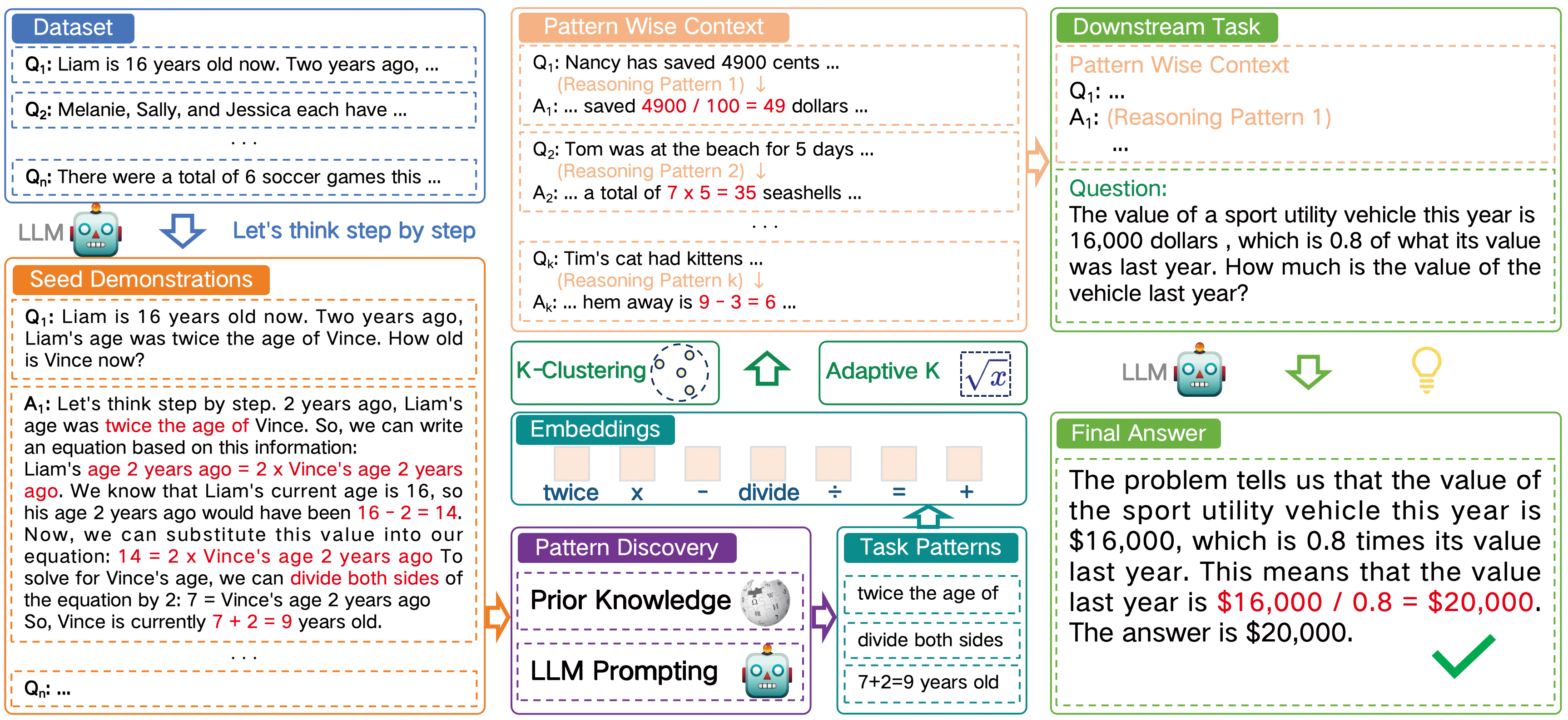

Pattern-CoT enhances reasoning by selecting demonstrations based on diverse logical reasoning patterns (operational sequences) rather than semantic similarity, mitigating noise and improving interpretability.

Core Problem

Existing unsupervised CoT methods select demonstrations based on semantic similarity of questions, which introduces irrelevant noise and obscures the actual logical steps needed for reasoning.

Why it matters:

- Semantic similarity often fails to capture the underlying logical structure required to solve complex reasoning tasks (e.g., math problems)

- Current selection methods lack interpretability, making it difficult to understand why specific demonstrations work or fail

- The number of demonstrations ($k$) is usually chosen heuristically, which is inefficient: more examples do not always help, and too few can miss key patterns

Concrete Example:

In a case study, Auto-CoT selects demonstrations by question semantics (e.g., 'buying apples'), even though the logic the target question requires is subtraction. Because the semantic match is superficial, the model is distracted by irrelevant context and produces a wrong answer. Pattern-CoT instead selects a demonstration with the correct 'subtraction pattern' regardless of topic, which leads to the correct result.

Key Novelty

Pattern-CoT (Pattern-based Chain of Thought)

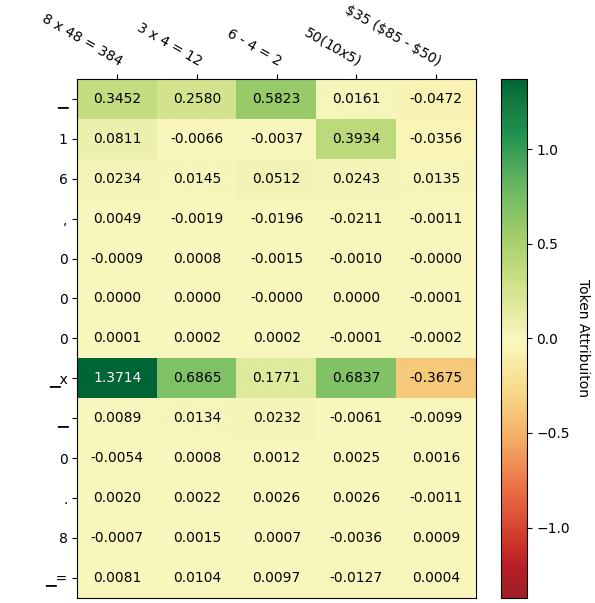

- Extracts 'reasoning patterns' (sequences of operation tokens like +, -, *) from rationales instead of embedding the full text

- Clusters these patterns to identify distinct logical strategies available in the data, ensuring the demonstration set covers diverse reasoning types

- Determines the optimal number of demonstrations dynamically based on the number of unique operation types found in the task
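The three ideas above can be illustrated with a minimal sketch. It assumes arithmetic rationales in which operators appear between operands. The regular expression, the function names `extract_pattern` and `suggest_num_demos`, and the rule "one demonstration per distinct operation type" are illustrative assumptions, not the paper's implementation.

```python
import re

# Assumption: arithmetic rationales, with operators written between operands.
# The paper's actual operation vocabulary and extraction rules may differ.
OP_RE = re.compile(r"(?<=[\d)])\s*([+\-*/])\s*(?=[\d(])")


def extract_pattern(rationale: str) -> tuple[str, ...]:
    """Return the ordered sequence of operator tokens found in a rationale."""
    return tuple(OP_RE.findall(rationale))


def suggest_num_demos(patterns: list[tuple[str, ...]]) -> int:
    """Hypothetical rule: one demonstration per distinct operation type."""
    distinct_ops = {op for pattern in patterns for op in pattern}
    return max(1, len(distinct_ops))


rationales = [
    "She had 10 apples and gave away 3, so 10 - 3 = 7.",
    "Each box holds 4 pens, so 4 * 5 = 20, and 20 + 2 = 22.",
]
patterns = [extract_pattern(r) for r in rationales]
k = suggest_num_demos(patterns)
```

Only the operator sequence survives extraction, so two rationales about unrelated topics map to the same pattern whenever they apply the same operations in the same order.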

Architecture

Overall workflow of Pattern-CoT: Rationales -> Pattern Discovery -> Pattern Clustering -> Demonstration Selection -> Final Inference.

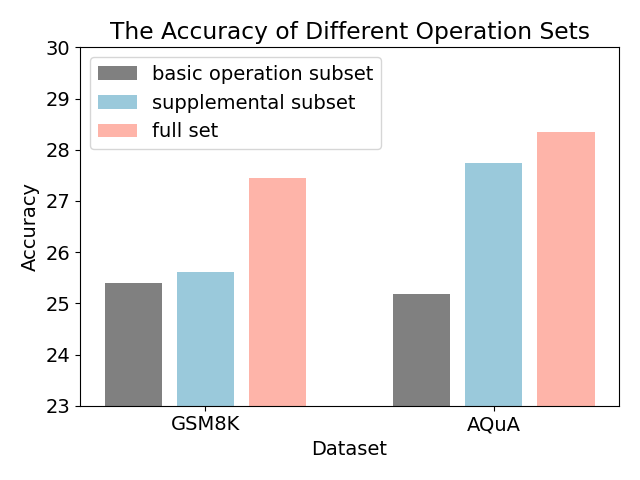
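The later stages of this workflow (clustering, selection, prompt assembly) might look roughly like the sketch below. It makes several simplifying assumptions: patterns are already attached to each candidate, exact-match grouping stands in for the paper's clustering step, and the `Demo` class, `select_demos`, `build_prompt`, and the `Q:`/`A:` prompt layout are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Demo:
    question: str
    rationale: str
    pattern: tuple[str, ...]  # operation sequence, assumed to be extracted beforehand


def select_demos(pool: list[Demo], k: int) -> list[Demo]:
    """Pick one demonstration from each of the k most common patterns."""
    # Stand-in for clustering: candidates with identical patterns share a group.
    groups: dict[tuple[str, ...], list[Demo]] = defaultdict(list)
    for demo in pool:
        groups[demo.pattern].append(demo)
    # Largest groups first; the stable sort keeps first-seen order among ties.
    ranked = sorted(groups.values(), key=len, reverse=True)
    return [group[0] for group in ranked[:k]]


def build_prompt(demos: list[Demo], question: str) -> str:
    """Concatenate the demonstrations and append the unanswered target question."""
    parts = [f"Q: {d.question}\nA: {d.rationale}" for d in demos]
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)


pool = [
    Demo("Tom had 9 pens and lost 4. How many remain?", "9 - 4 = 5.", ("-",)),
    Demo("A shop had 12 hats and sold 5. How many are left?", "12 - 5 = 7.", ("-",)),
    Demo("Ann has 3 cats and 2 dogs. How many pets?", "3 + 2 = 5.", ("+",)),
    Demo("3 bags of 4 nuts plus 1 nut?", "3 * 4 = 12, and 12 + 1 = 13.", ("*", "+")),
]
selected = select_demos(pool, k=2)
prompt = build_prompt(selected, "Sam had 8 coins and spent 3. How many are left?")
```

Taking one representative per group keeps the demonstration set diverse in reasoning type, which is the property the workflow aims for. A real implementation would likely need a softer notion of pattern similarity than exact matching.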

Evaluation Highlights

- Outperforms Auto-CoT by +3.9% on MultiArith and +6.0% on SVAMP using LLaMA-2-7B

- Outperforms Auto-CoT by +7.0% on Coin-Flip, where operation patterns are not explicitly defined and are instead discovered by an LLM

- Demonstrates robustness across model sizes, improving LLaMA-2-13B performance on AddSub by +2.5% over Auto-CoT

Breakthrough Assessment

7/10

Offers a logical, interpretable shift from semantic to pattern-based demonstration selection in CoT. While the gains are consistent, the method relies on identifying 'operations', which may be harder to do for non-algorithmic tasks.