📝 Paper Summary

Text-to-SQL

In-Context Learning (ICL)

Chain-of-Thought (CoT) Prompting

ACT-SQL improves Text-to-SQL performance by automatically generating reasoning steps for prompt exemplars based on schema similarity, eliminating manual labeling while using only a single API call.

Core Problem

Standard few-shot prompting fails to elicit complex reasoning for SQL generation, while existing Chain-of-Thought (CoT) methods require expensive manual labeling or multiple costly API calls per query.

Why it matters:

- Manual labeling of reasoning chains for CoT exemplars is time-consuming and non-scalable

- Previous state-of-the-art ICL methods like DIN-SQL require multiple LLM calls (decomposition, generation, correction), making them slow and expensive for real-time applications

- Zero-shot LLMs often struggle with complex schema linking without explicit reasoning guidance

Concrete Example:

For a question like 'Find the package choice... of the TV channel that has high definition TV', a standard model might include redundant columns like 'Hight_definition_TV' in the SELECT clause. ACT-SQL's auto-generated thought process explicitly links 'high definition TV' to the WHERE clause, preventing the error.

Key Novelty

Auto-CoT via Inverse Schema Linking

- Generates reasoning chains automatically by mapping SQL components back to the natural language question using semantic similarity, simulating a human's 'schema linking' process

- Replaces the need for manually written reasoning steps in few-shot exemplars

- Uses a single-pass generation (CoT + SQL) rather than multi-stage pipelines, reducing cost

Architecture

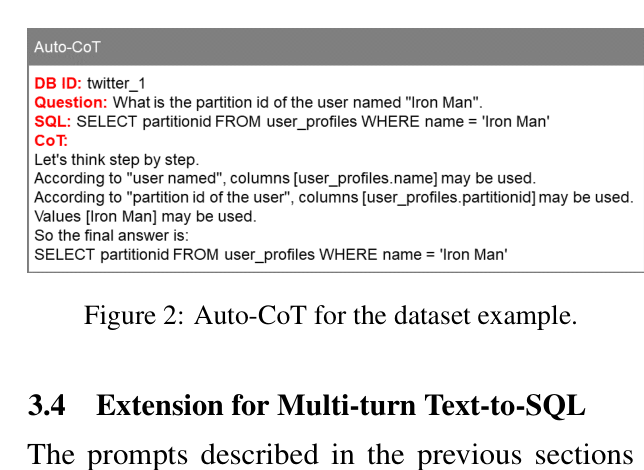

Example of an Automatically-Generated Chain-of-Thought (Auto-CoT) prompt.

Evaluation Highlights

- Achieves 62.7% Exact Match accuracy on Spider Dev (GPT-3.5-turbo), surpassing the previous SOTA in-context learning method DIN-SQL (GPT-4) which scored 60.1%

- Reduces computational cost by using only 1 API call per SQL generation, compared to 4 API calls for DIN-SQL

- Outperforms finetuned baseline Graphix-3B+PICARD on Spider-DK Execution Accuracy (68.2% vs ~66%) due to LLM domain knowledge

Breakthrough Assessment

7/10

Significant for making CoT practical in Text-to-SQL by removing manual labeling and high API costs, though primarily an engineering optimization of prompting rather than a new architecture.