📝 Paper Summary

Phonological Reasoning

Prompt Engineering

Chain-of-Thought (CoT)

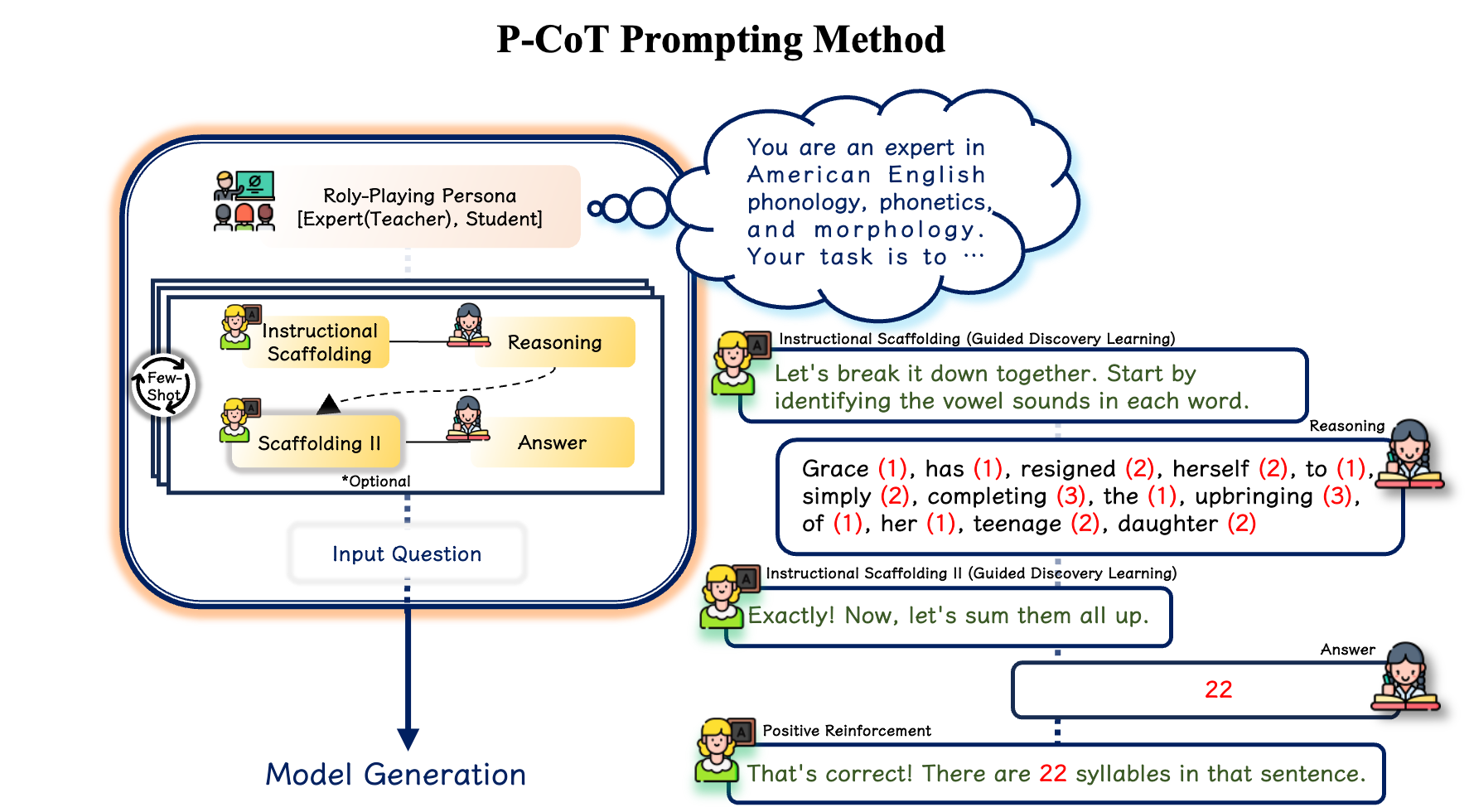

P-CoT improves LLM phonological reasoning by simulating a teacher-student dialogue: the prompt scaffolds the task with definitions and sub-problems so that the model 'discovers' the answer through its own reasoning.

Core Problem

Text-based LLMs struggle with explicit phonological tasks like rhyming and syllable counting despite having latent phonological knowledge, and standard few-shot prompting yields inconsistent or degraded performance.

Why it matters:

- Phonology is critical for generating natural prosody in speech synthesis and accurately modeling dialectal variations.

- Current text-based models fall well short of human performance on phonological benchmarks.

- Few-shot prompting is unreliable for these tasks; some models (e.g., Llama, Qwen series) perform worse with few-shot examples than with zero-shot baselines.

Concrete Example:

In syllable counting, baseline GPT-3.5-turbo achieves only 16.0% accuracy. Few-shot prompting provides inconsistent gains, sometimes degrading performance, as the model fails to internalize the phonological rules from examples alone.

Key Novelty

Pedagogically-motivated Participatory Chain-of-Thought (P-CoT)

- Integrates 'Discovery Learning' and 'Scaffolding' educational theories into prompt design.

- Uses role-playing where the prompt acts as a 'Teacher' providing definitions and sub-tasks (scaffolding), and the model acts as a 'Student' guided through the reasoning process.

- Decomposes complex phonological tasks into concrete sub-problems (e.g., identifying vowel sounds before counting syllables) to help the model traverse its Zone of Proximal Development (ZPD), the gap between what a learner can do unaided and what it can do with guidance.
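
The teacher-student structure described above can be sketched as a small prompt builder. This is a minimal illustration, not the paper's actual prompt: the names `SubTask` and `build_pcot_prompt`, the "Teacher:"/"Student:" line format, and the wording of the definition and sub-tasks are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class SubTask:
    """One scaffolded step the 'Teacher' asks the 'Student' to work through."""
    instruction: str


def build_pcot_prompt(definition: str, subtasks: list[SubTask], question: str) -> str:
    """Assemble a teacher-student style prompt (hypothetical wording and layout)."""
    lines = [
        "Teacher: Let's work through this together.",
        # Scaffolding: supply the definition before asking anything.
        f"Teacher: First, a definition. {definition}",
    ]
    # Decompose the task into numbered sub-problems.
    for i, step in enumerate(subtasks, start=1):
        lines.append(f"Teacher: Step {i}. {step.instruction}")
    lines.append(f"Teacher: Now answer the question. {question}")
    # Leave the student's turn open so the model produces the reasoning itself.
    lines.append("Student:")
    return "\n".join(lines)


prompt = build_pcot_prompt(
    definition="A syllable is a unit of pronunciation built around one vowel sound.",
    subtasks=[
        SubTask("Identify each vowel sound in the word."),
        SubTask("Count the vowel sounds you identified."),
    ],
    question="How many syllables are in 'banana'?",
)
print(prompt)
```

The resulting string could be passed as the user message to any chat model. Ending on an open "Student:" turn is one way to reflect the discovery-learning idea: the prompt supplies definitions and steps, and the model supplies the answer.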

Architecture

Conceptual diagram of the P-CoT framework showing the integration of pedagogical theories (Scaffolding, Discovery Learning) into the prompt design to bridge the gap between latent capability and explicit performance.

Evaluation Highlights

- Claude 3.5 Haiku improves syllable counting accuracy from 21.1% (baseline) to 57.4% using P-CoT.

- GPT-3.5-turbo improves syllable counting accuracy from 16.0% (baseline) to 48.8% using P-CoT.

- Ministral-8B-Instruct-2410 demonstrates a ~52 percentage point increase over baseline in common rhyme word generation.
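
To put the syllable-counting figures above on a common footing, the short sketch below derives the absolute gain (in percentage points) and the relative improvement factor from the reported baseline and P-CoT accuracies. Only the four accuracy values come from the summary; the helper name `gain` and the rounding choices are assumptions for illustration.

```python
# Reported syllable-counting accuracies (%): (baseline, P-CoT)
results = {
    "Claude 3.5 Haiku": (21.1, 57.4),
    "GPT-3.5-turbo": (16.0, 48.8),
}


def gain(baseline: float, pcot: float) -> tuple[float, float]:
    """Return (percentage-point gain, relative improvement factor)."""
    return round(pcot - baseline, 1), round(pcot / baseline, 2)


for model, (baseline, pcot) in results.items():
    points, factor = gain(baseline, pcot)
    print(f"{model}: +{points} points ({factor}x baseline)")
```

Under these numbers, Claude 3.5 Haiku gains 36.3 percentage points and GPT-3.5-turbo gains 32.8, which corresponds to roughly 2.7x and 3.05x their respective baselines.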

Breakthrough Assessment

7/10

Significant performance gains on specific phonological tasks where standard prompting methods fail. A novel application of educational theory to prompt design, though evaluated only on a niche domain (phonology).