📝 Paper Summary

In-Context Learning (ICL)

Chain-of-Thought (CoT)

Mechanistic Interpretability

Synthetic Datasets

CoT-ICL Lab is a synthetic framework that generates tokenized reasoning tasks with controllable causal structures, revealing that Chain-of-Thought prompts accelerate learning transitions and help shallow models match deeper ones.

Core Problem

Current studies of In-Context Learning (ICL) and Chain-of-Thought (CoT) rely either on overly simple numeric toy tasks (such as linear regression) or on uncontrolled natural language tasks, which prevents precise isolation of reasoning mechanisms.

Why it matters:

- Existing numeric toy tasks lack the discrete, compositional nature of language, limiting their relevance to real LLM behavior

- Natural language benchmarks conflate world knowledge with reasoning ability, making it hard to measure pure algorithmic learning

- We lack a unified testbed to systematically vary complexity factors like vocabulary size, chain length, and causal graph sparsity to understand what drives CoT emergence

Concrete Example:

In standard linear regression ICL tasks, models predict a scalar output from a scalar input. Such tasks cannot capture multi-step reasoning, in which intermediate (CoT) tokens are causally required to derive the final answer. CoT-ICL Lab, by contrast, simulates these discrete dependencies with DAGs.

Key Novelty

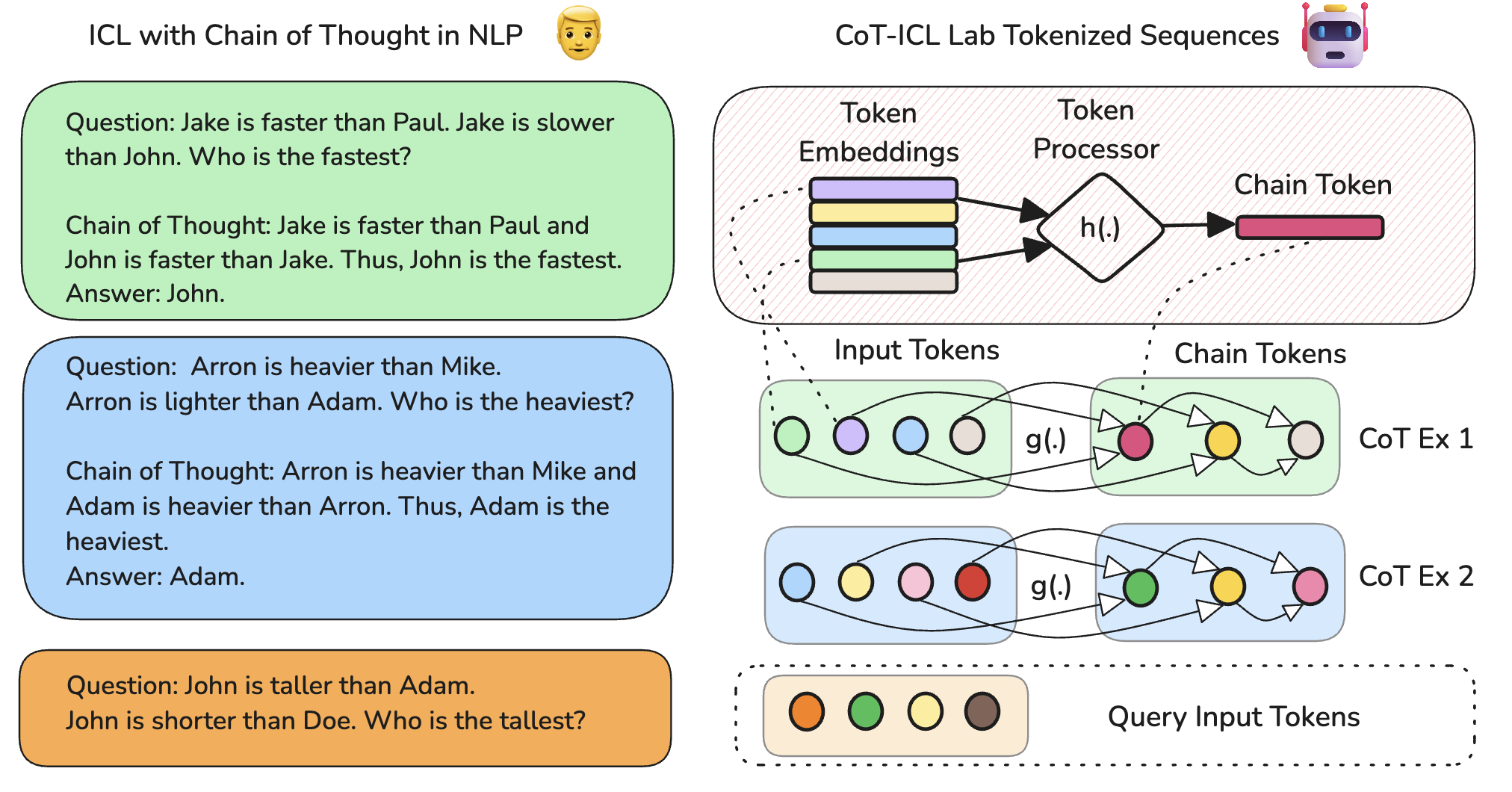

Synthetic Tokenized Reasoning Framework (CoT-ICL Lab)

- Decouples the 'reasoning structure' (a Directed Acyclic Graph defining dependencies) from the 'token processing' (MLPs transforming embeddings), allowing independent control of structural vs. functional complexity

- Uses a discrete vocabulary and embedding space to mimic language, unlike continuous-valued regression tasks common in theoretical ICL work

- Supports multi-input, multi-step chain generation where intermediate tokens act as parents for subsequent tokens, formally modeling Chain-of-Thought
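The structural side of this design can be sketched in a few lines. The snippet below is a minimal illustration, not the paper's implementation: it assumes each chain token draws a fixed number of parents uniformly at random from all earlier positions (inputs plus previously generated chain tokens), which guarantees an acyclic graph by construction. The function name `sample_chain_parents` and its parameters are hypothetical.

```python
import numpy as np


def sample_chain_parents(num_inputs, chain_len, num_parents, rng):
    """Pick parent positions for each chain token from strictly earlier positions.

    Hypothetical helper: the uniform sampling scheme is an assumption made
    for illustration, not a detail confirmed by the summary.
    """
    parents = []
    for c in range(chain_len):
        # Positions available so far: all inputs plus earlier chain tokens.
        n_available = num_inputs + c
        k = min(num_parents, n_available)
        chosen = rng.choice(n_available, size=k, replace=False)
        parents.append(sorted(int(p) for p in chosen))
    return parents


rng = np.random.default_rng(0)
parents = sample_chain_parents(num_inputs=4, chain_len=3, num_parents=2, rng=rng)
for c, parent_idx in enumerate(parents):
    print(f"chain token {c} (position {4 + c}) depends on positions {parent_idx}")
```

Because every parent index is smaller than the position of the token it feeds, later chain tokens can depend on earlier ones but never the reverse. Lowering `num_parents` is one plausible way to make the graph sparser.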

Architecture

The figure illustrates the data generation process for CoT-ICL Lab: a DAG defines the dependencies between tokens, and an MLP processes these tokens to generate the next token in the chain.
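A rough sketch of this generation loop follows. It rests on several assumptions that the summary does not confirm: parent embeddings are averaged, a single two-layer ReLU MLP is shared across chain positions, and the next token is read out as the vocabulary entry whose embedding scores highest against the MLP output. All names (`generate_chain`, `embed`, `w1`, `w2`) and the hard-coded parent lists are illustrative.

```python
import numpy as np


def generate_chain(input_tokens, parents, embed, w1, w2):
    """Extend input tokens with chain tokens, one per parent set.

    Assumed for illustration: mean-pool the parent embeddings, apply a
    two-layer ReLU MLP, then take the argmax over embedding similarities.
    """
    tokens = list(input_tokens)
    for parent_idx in parents:
        # Look up and average the embeddings of the parent tokens.
        h = embed[[tokens[p] for p in parent_idx]].mean(axis=0)
        # Two-layer MLP with a ReLU nonlinearity.
        h = np.maximum(h @ w1, 0.0) @ w2
        # Map the transformed vector back to a discrete token id.
        logits = h @ embed.T
        tokens.append(int(np.argmax(logits)))
    return tokens


rng = np.random.default_rng(0)
vocab_size, dim = 16, 8
embed = rng.standard_normal((vocab_size, dim))  # random token embeddings
w1 = rng.standard_normal((dim, dim))
w2 = rng.standard_normal((dim, dim))

inputs = [3, 7, 1, 12]
# Parent positions per chain token; each index points to an earlier position.
parents = [[0, 2], [1, 4], [3, 5]]
sequence = generate_chain(inputs, parents, embed, w1, w2)
print("inputs:", sequence[:4], "chain:", sequence[4:])
```

In this sketch the parent lists carry the structural complexity and the MLP weights carry the functional complexity, so either can be varied while the other is held fixed.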

Evaluation Highlights

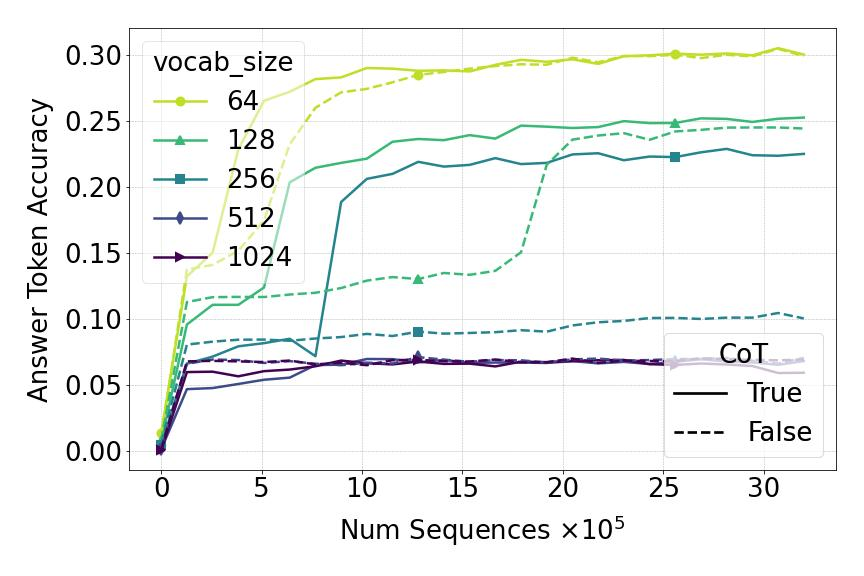

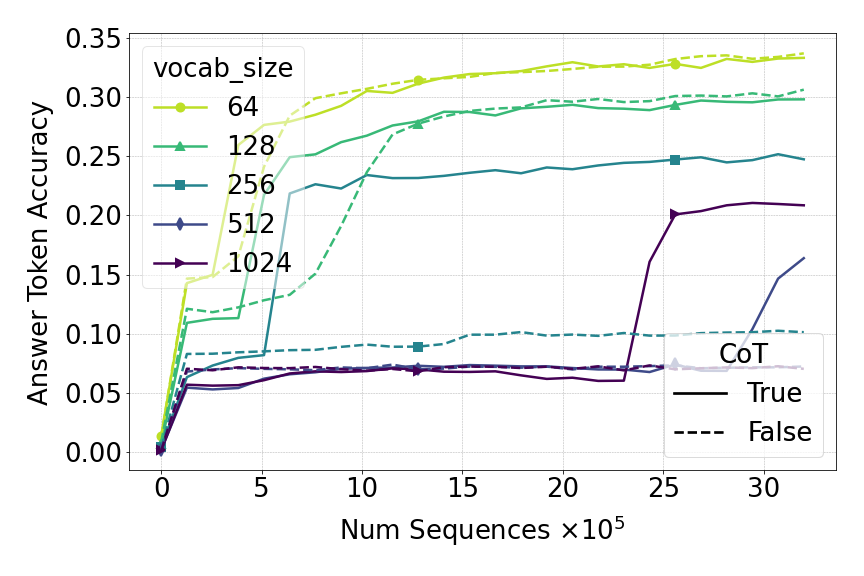

- CoT prompting accelerates the phase transition in accuracy, allowing models to reach high performance in fewer training steps than standard ICL (No-CoT)

- Deeper models (12 layers) significantly outperform shallow models (2 layers) on complex reasoning tasks, but CoT allows shallow models to close this gap when given more examples

- Restricting the diversity of token processing functions (fewer unique MLPs) allows models to learn the underlying causal structure (DAG) much faster

Breakthrough Assessment

7/10

A strong methodological contribution that bridges the gap between toy ICL theory and practical CoT. It provides a clean testbed for scaling laws and interpretability, though it remains a synthetic proxy rather than a solution to real-world reasoning.