📝 Paper Summary

Mental Health Analysis

Chain-of-Thought Reasoning

Clinical NLP

A structured Chain-of-Thought prompting framework that guides Large Language Models through emotion analysis, binary classification, and causal reasoning to accurately estimate depression severity scores.

Core Problem

Standard LLM-based depression detection lacks interpretability, struggles to identify nuanced linguistic cues of anhedonia (e.g., negated positive affect), and often conflates symptom identification with severity assessment.

Why it matters:

- Current end-to-end classification models fail to meet medical standards for auditability because they lack explicit reasoning steps.

- Holistic text processing overlooks subtle diagnostic markers, such as implied expectations in phrases like 'Family gatherings should be fun, yet they feel meaningless.'

- Clinical practice requires distinct processes for symptom detection (binary) and severity assessment (regression), which current models often merge.

Concrete Example:

A phrase like 'I try to enjoy hobbies but feel nothing' contains subtle anhedonia cues (implicit expectation of joy + contextual negation). A standard model might miss these cues or treat the phrase as generically negative, whereas the proposed method explicitly analyzes the negated positive affect before assigning a depression score.

Key Novelty

Emotion-to-Reasoning Chain-of-Thought (CoT) Framework

- Decomposes the diagnostic process into four clinical stages: Emotion Analysis, Binary Classification, Causal Reasoning, and Severity Assessment.

- Mimics the workflow of mental health professionals by first identifying symptoms and root causes before calculating quantitative severity scores (PHQ-8).
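The four stages listed above can be sketched as a simple prompt chain in which each stage sees the outputs of the earlier ones. This is a minimal illustration, not the authors' implementation: the stage instructions, the `run_chain` and `parse_score` helpers, and the `llm` callable are all hypothetical, and the paper's actual prompt wording is not reproduced here. The only external fact assumed is that PHQ-8 totals range from 0 to 24.

```python
import re
from typing import Callable, Dict

# Hypothetical stage instructions; the paper's real prompts may differ.
STAGES = [
    ("emotion_analysis",
     "Identify the emotions in the text, including negated positive affect."),
    ("binary_classification",
     "Given the analysis so far, does the text show signs of depression? Answer yes or no."),
    ("causal_reasoning",
     "Explain the likely causes of the emotions and symptoms identified."),
    ("severity_assessment",
     "Estimate a PHQ-8 score between 0 and 24. Reply with the number only."),
]


def run_chain(text: str, llm: Callable[[str], str]) -> Dict[str, str]:
    """Run the four stages in order, feeding earlier answers into later prompts."""
    outputs: Dict[str, str] = {}
    context = f"Text: {text}"
    for name, instruction in STAGES:
        prompt = f"{context}\n\n{instruction}"
        answer = llm(prompt)  # `llm` is any text-in, text-out callable (assumption)
        outputs[name] = answer
        # Append the answer so the next stage can build on it.
        context += f"\n\n[{name}] {answer}"
    return outputs


def parse_score(raw: str, low: int = 0, high: int = 24) -> int:
    """Extract the first integer from the model reply and clamp it to the PHQ-8 range."""
    match = re.search(r"\d+", raw)
    if match is None:
        raise ValueError(f"no numeric score found in: {raw!r}")
    return max(low, min(high, int(match.group())))
```

Because every intermediate answer is kept in the returned dictionary, the emotion analysis, binary decision, and causal explanation remain available for review alongside the final score, which is the auditability property the framework is aiming for.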

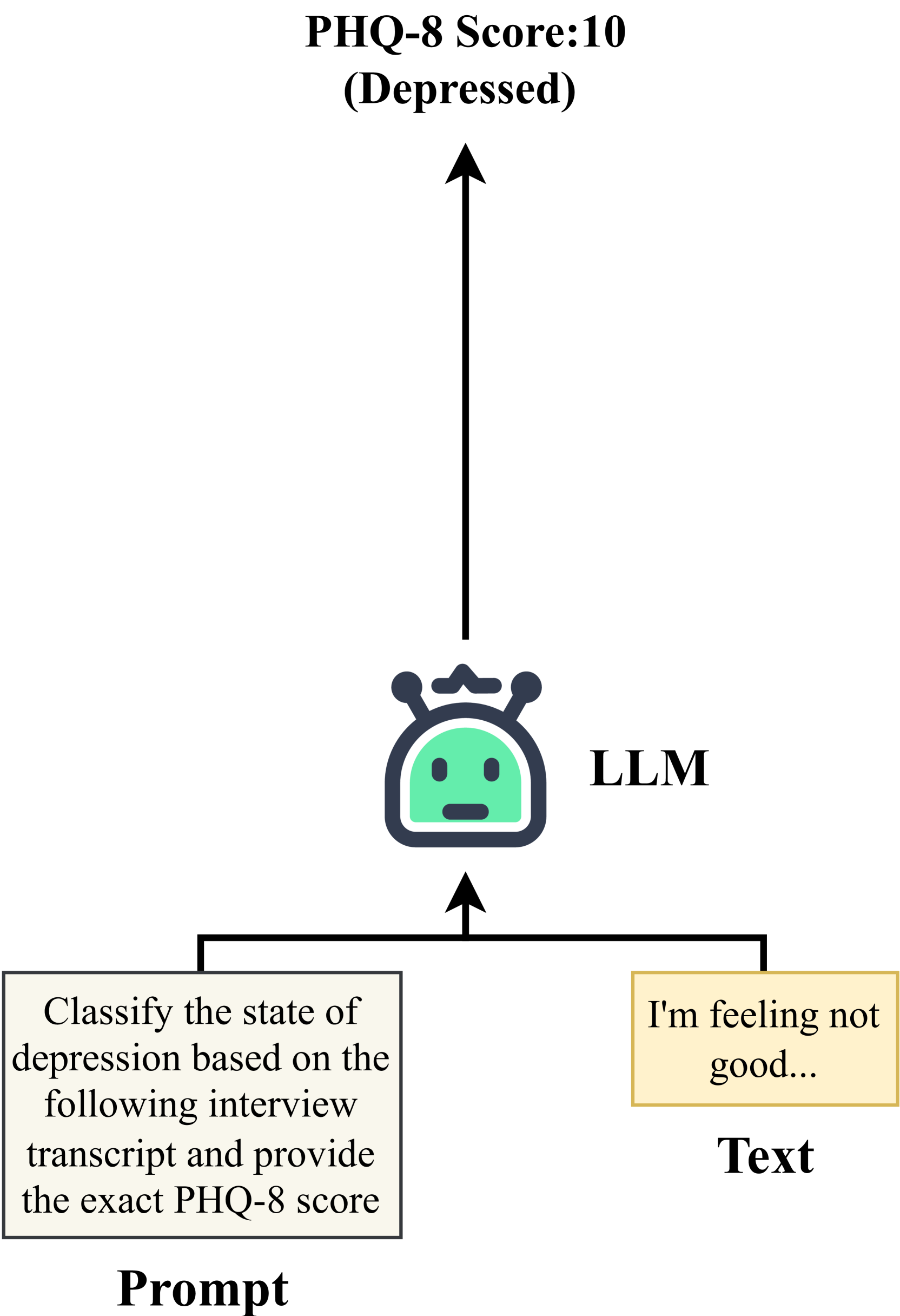

Architecture

The four-stage Chain-of-Thought prompting framework pipeline.

Evaluation Highlights

- Achieves 0.732 CCC (Concordance Correlation Coefficient) with GPT-4o using the proposed CoT strategy, outperforming the standard GPT-4o baseline (0.696 CCC).

- Improves QwQ-32b-preview's performance, raising CCC from 0.597 to 0.705 and reducing Mean Absolute Error (MAE) from 4.23 to 3.55.

- Surpasses traditional multimodal deep learning baselines like CubeMLP (0.583 CCC) using only text input.
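For readers unfamiliar with the two metrics reported above, the sketch below computes CCC using Lin's standard definition (population variances and covariance) and MAE as the mean absolute difference. The function names and the sample scores are illustrative assumptions and are not data from the paper.

```python
from statistics import fmean
from typing import Sequence


def concordance_cc(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Lin's Concordance Correlation Coefficient between two score lists."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(y_true)
    mean_t, mean_p = fmean(y_true), fmean(y_pred)
    var_t = sum((t - mean_t) ** 2 for t in y_true) / n
    var_p = sum((p - mean_p) ** 2 for p in y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred)) / n
    denom = var_t + var_p + (mean_t - mean_p) ** 2
    if denom == 0:
        raise ValueError("CCC is undefined when both inputs are the same constant")
    return 2 * cov / denom


def mean_absolute_error(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Average absolute difference between true and predicted scores."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return fmean([abs(t - p) for t, p in zip(y_true, y_pred)])


# Made-up PHQ-8 scores, for illustration only.
true_scores = [0, 4, 8, 12]
pred_scores = [2, 6, 10, 14]  # perfectly correlated but shifted by +2
ccc = concordance_cc(true_scores, pred_scores)
mae = mean_absolute_error(true_scores, pred_scores)
```

In this toy case the predictions are perfectly correlated with the targets yet consistently 2 points too high, so CCC falls below 1. This is why CCC is a stricter agreement measure than Pearson correlation: it penalizes systematic offset as well as scatter.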

Breakthrough Assessment

7/10

Significant improvement in interpretability and accuracy for depression severity estimation by aligning LLM reasoning with clinical workflows. Demonstrates that structured prompting can unlock performance gains even in smaller models such as QwQ-32b-preview.