📊 Experiments & Results

Evaluation Setup

Survey of benchmarks used in the literature

Benchmarks:

- GSM8K (Mathematical reasoning)

- CSQA (Commonsense reasoning)

- Last Letter Concatenation (Symbolic reasoning)

- ProofWriter (Logical reasoning)

- ScienceQA (Multi-modal reasoning)
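Last Letter Concatenation asks the model to join the last letter of each word in a name. For example, "Elon Musk" maps to "nk". Below is a minimal Python sketch of the ground-truth label. It also produces an illustrative step-by-step rationale of the kind a CoT exemplar might contain. Both function names and the rationale wording are hypothetical and are not taken from the paper.

```python
def last_letter_concat(name: str) -> str:
    """Ground-truth label: join the last letter of each whitespace-separated word."""
    return "".join(word[-1] for word in name.split())


def last_letter_rationale(name: str) -> str:
    """Hypothetical step-by-step rationale of the kind a CoT exemplar might contain."""
    steps = [f'The last letter of "{w}" is "{w[-1]}".' for w in name.split()]
    answer = last_letter_concat(name)
    steps.append(f'Concatenating them gives "{answer}". The answer is {answer}.')
    return " ".join(steps)


print(last_letter_concat("Elon Musk"))  # nk
print(last_letter_rationale("Elon Musk"))
```

The label function is a one-liner, but the rationale function shows why this benchmark is a natural fit for CoT: each intermediate step is trivially checkable.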

Metrics:

- Accuracy

- Statistical methodology: Not explicitly reported in the paper
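Accuracy here is the fraction of problems whose final answer matches the gold answer. Below is a minimal sketch for numeric answers such as those in GSM8K. It assumes the final answer is the last number in the model's output. That is a common heuristic, not necessarily the protocol every surveyed paper uses. The helper names are hypothetical.

```python
import re
from typing import List, Optional


def extract_final_number(text: str) -> Optional[float]:
    """Return the last number in `text` as a float, or None if there is none."""
    # Drop thousands separators so "1,000" parses as 1000.
    matches = re.findall(r"-?\d+(?:\.\d+)?", text.replace(",", ""))
    return float(matches[-1]) if matches else None


def accuracy(predictions: List[str], golds: List[str]) -> float:
    """Fraction of predictions whose final number equals the gold answer."""
    if len(predictions) != len(golds):
        raise ValueError("predictions and golds must have the same length")
    if not golds:
        return 0.0
    correct = 0
    for pred, gold in zip(predictions, golds):
        value = extract_final_number(pred)
        # Compare numerically so "11" and "11.0" count as the same answer.
        if value is not None and abs(value - float(gold)) < 1e-6:
            correct += 1
    return correct / len(golds)


preds = [
    "5 + 6 = 11. The answer is 11.",
    "The answer is 7.",
    "I am not sure.",
]
golds = ["11", "8", "3"]
print(accuracy(preds, golds))  # 0.3333333333333333
```

An output with no number at all, like the third prediction, simply counts as wrong.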

Experiment Figures

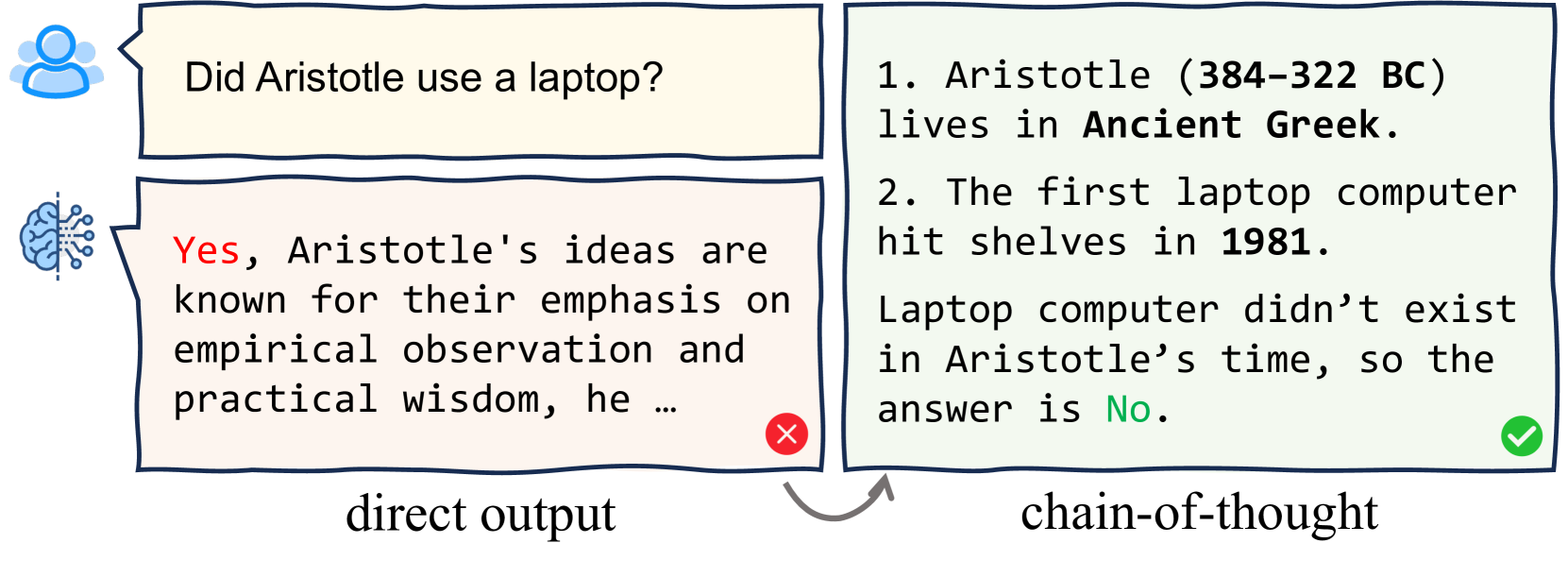

Comparison between Standard Prompting and Chain-of-Thought Prompting on a math word problem.
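The two prompting styles differ only in what the few-shot exemplars show. A standard exemplar gives the answer alone. A CoT exemplar writes out the intermediate reasoning before the answer. Below is a minimal sketch of assembling both prompt styles. The `Exemplar` type, the `Q:`/`A:` layout, and the apple and bookshelf problems are all hypothetical illustrations. They are not the exemplars shown in the figure.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Exemplar:
    question: str
    rationale: str  # intermediate reasoning steps, shown only in CoT prompts
    answer: str


# Hypothetical few-shot exemplar, written for illustration.
EXEMPLARS = [
    Exemplar(
        question="Tom has 3 boxes with 4 apples each. He eats 2 apples. How many apples are left?",
        rationale="3 boxes of 4 apples is 3 * 4 = 12 apples. After eating 2, 12 - 2 = 10 remain.",
        answer="10",
    ),
]


def build_prompt(exemplars: List[Exemplar], question: str, chain_of_thought: bool) -> str:
    """Assemble a few-shot prompt in either the standard or the CoT style."""
    blocks = []
    for ex in exemplars:
        if chain_of_thought:
            # CoT: the worked reasoning precedes the final answer.
            blocks.append(f"Q: {ex.question}\nA: {ex.rationale} The answer is {ex.answer}.")
        else:
            # Standard prompting: the exemplar shows only the final answer.
            blocks.append(f"Q: {ex.question}\nA: The answer is {ex.answer}.")
    # The target question is left open for the model to complete.
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)


target = "A shelf holds 5 rows of 6 books. 4 books are removed. How many books remain?"
print(build_prompt(EXEMPLARS, target, chain_of_thought=False))
print("---")
print(build_prompt(EXEMPLARS, target, chain_of_thought=True))
```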

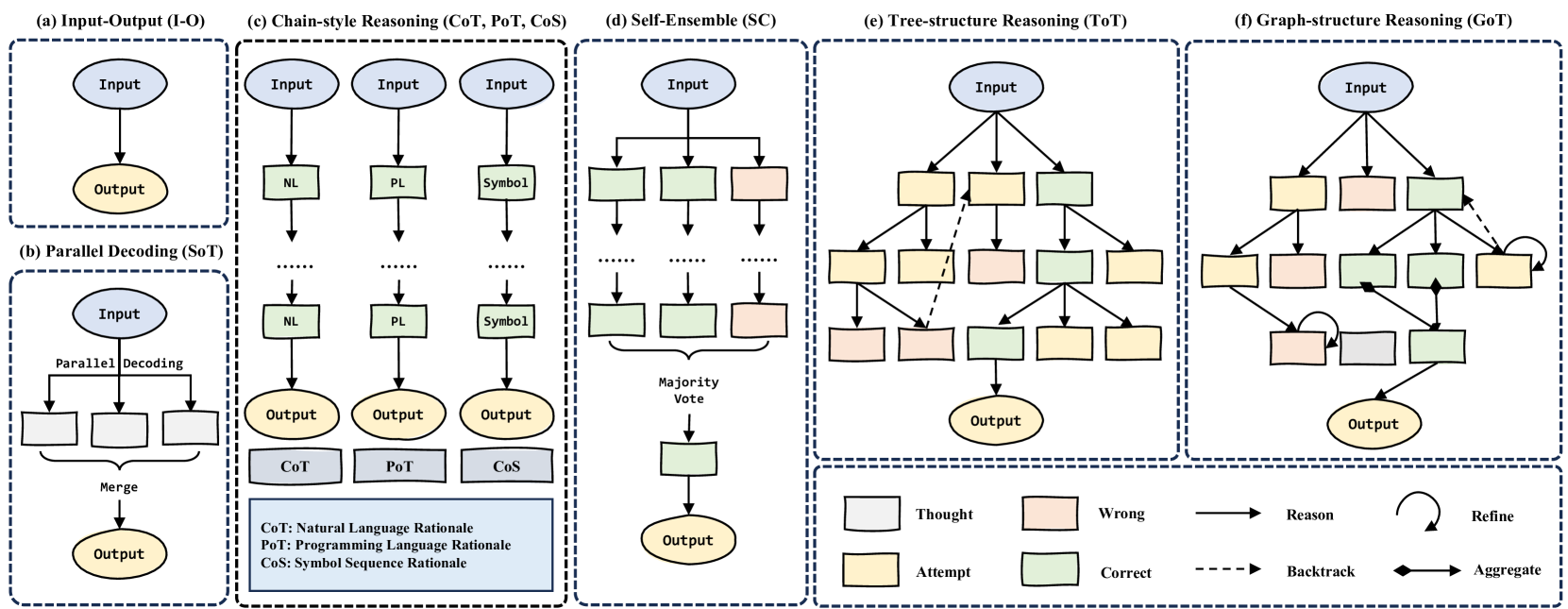

Visual comparison of topological variants: (a) Input-Output, (b) CoT (Chain), (c) CoT-SC (Multiple Chains), (d) ToT (Tree), (e) GoT (Graph).
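In variant (c), CoT-SC, several reasoning chains are sampled for the same question and their final answers are aggregated. Below is a minimal sketch of that aggregation step as a majority vote. It assumes each chain ends with "The answer is N.". The sampled chains are hard-coded stand-ins for model outputs, and the function names are hypothetical.

```python
import re
from collections import Counter
from typing import List, Optional


def final_answer(chain: str) -> Optional[str]:
    """Extract N from the last 'The answer is N' in a reasoning chain."""
    found = re.findall(r"The answer is\s*(-?\d+(?:\.\d+)?)", chain)
    return found[-1] if found else None


def self_consistency(chains: List[str]) -> Optional[str]:
    """Majority vote over the final answers of independently sampled chains."""
    answers = [a for a in (final_answer(c) for c in chains) if a is not None]
    if not answers:
        return None
    # Counter.most_common orders equal counts by first occurrence.
    return Counter(answers).most_common(1)[0][0]


# Stand-ins for chains sampled from a model at nonzero temperature.
sampled = [
    "3 * 4 = 12 apples, and 12 - 2 = 10. The answer is 10.",
    "Each box has 4, so 3 boxes give 12; eating 2 leaves 10. The answer is 10.",
    "3 + 4 = 7 apples, and 7 - 2 = 5. The answer is 5.",
]
print(self_consistency(sampled))  # 10
```

The vote discards the reasoning paths and keeps only their answers. This is why a single flawed chain, like the third one, is outvoted rather than repaired.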

Main Takeaways

- CoT prompting substantially improves LLM reasoning, especially on complex multi-step tasks, by decomposing problems into manageable intermediate steps.

- More complex topologies improve performance at the cost of generality: tree and graph structures can solve harder problems, but they require task-specific decomposition designs that may not transfer to other tasks.

- There is a trade-off between cost and performance: manual prompting yields better results but is expensive to construct, while automatic prompting is cheap but unstable.

- Faithfulness remains a challenge: LLMs can hallucinate during reasoning, which motivates verification modules and knowledge enhancement.
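The faithfulness point mentions verification modules. One toy illustration, not the survey's method, is a verifier that re-checks the arithmetic claims inside a generated chain and flags incorrect steps. The sketch below assumes steps are written as simple binary equations such as "12 - 2 = 10". The regex, `check_chain`, and the example chain are hypothetical.

```python
import operator
import re
from typing import List, Tuple

# A simple binary arithmetic claim such as "12 - 2 = 10".
STEP = re.compile(
    r"(-?\d+(?:\.\d+)?)\s*([+\-*/])\s*(-?\d+(?:\.\d+)?)\s*=\s*(-?\d+(?:\.\d+)?)"
)
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}


def check_chain(chain: str, tol: float = 1e-9) -> List[Tuple[str, bool]]:
    """Recompute each arithmetic step in `chain`; report (step, is_correct)."""
    results = []
    for m in STEP.finditer(chain):
        a, op, b = float(m.group(1)), m.group(2), float(m.group(3))
        claimed = float(m.group(4))
        if op == "/" and b == 0:
            ok = False  # a step that divides by zero is never valid
        else:
            ok = abs(OPS[op](a, b) - claimed) <= tol
        results.append((m.group(0), ok))
    return results


chain = "3 boxes of 4 apples is 3 * 4 = 12 apples. After eating 2, 12 - 2 = 9 remain."
for step, ok in check_chain(chain):
    print(step, "OK" if ok else "WRONG")
# 3 * 4 = 12 OK
# 12 - 2 = 9 WRONG
```

A real verifier would need to handle free-form reasoning, not just explicit equations. Still, the structure is the same: recompute or re-derive each intermediate claim rather than trusting the final answer.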