📝 Paper Summary

Chain-of-Thought (CoT) Optimization

Causal Reasoning in LLMs

Data Pruning / Dataset Construction

The authors propose a causal framework that quantifies the probability of necessity and sufficiency for individual reasoning steps, enabling the automated pruning of redundant steps and addition of missing ones to create efficient, high-quality Chain-of-Thought data.

Core Problem

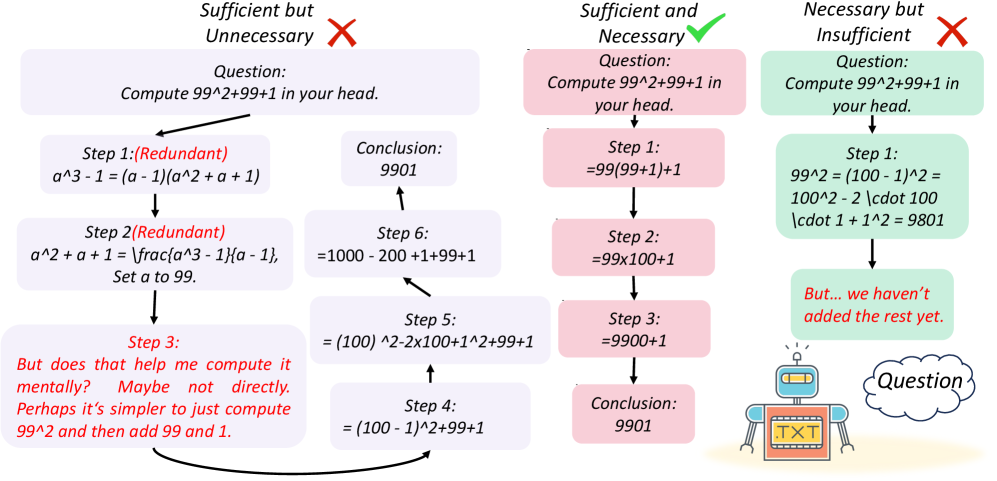

Current Chain-of-Thought (CoT) reasoning suffers from two main issues: 'overthinking' (generating redundant, unnecessary steps) and logical gaps (missing steps required for the conclusion).

Why it matters:

- Redundant steps increase computational cost and token usage without improving accuracy

- Existing pruning methods rely on correlations (e.g., attention weights) rather than true causal impact, often keeping frequent but irrelevant steps

- Logical gaps lead to 'hallucinated' correct answers that aren't actually supported by the reasoning chain

Concrete Example:

In a GSM-8k problem, a model might calculate the answer correctly but include an irrelevant step such as 'I will now double-check the calculation', which does not causally contribute to the result. Conversely, it might jump to a conclusion without the necessary intermediate arithmetic, failing the sufficiency test.

Key Novelty

Probability of Necessity and Sufficiency (PNS) for CoT

- Adapts Pearl's causal definitions to reasoning chains: a step is 'sufficient' if including it guarantees the correct answer, and 'necessary' if removing it turns a correct answer into an incorrect one

- Uses 'counterfactual rollouts': the system temporarily deletes or alters a reasoning step and lets the model continue generating; if the answer flips from correct to incorrect, the step was causally necessary
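The counterfactual-rollout idea can be illustrated with a minimal Python sketch. Everything named here is hypothetical and not taken from the paper: `estimate_necessity`, `prune_chain`, the `rollout` callback (a stand-in for "let the model continue and report its final answer"), the 0.5 threshold, and the toy arithmetic chain. The sketch also assumes that a step is scored by deleting it alone while keeping the rest of the chain, which may differ from the authors' exact procedure.

```python
from typing import Callable, List, Sequence

# Hypothetical type: takes a (possibly edited) chain of steps, returns a final answer.
Rollout = Callable[[List[str]], str]


def estimate_necessity(
    steps: Sequence[str],
    index: int,
    rollout: Rollout,
    correct_answer: str,
    n_rollouts: int = 8,
) -> float:
    """Fraction of rollouts whose answer becomes incorrect once steps[index] is deleted."""
    # Counterfactual chain: the original chain with one step removed.
    edited = [s for i, s in enumerate(steps) if i != index]
    flips = sum(1 for _ in range(n_rollouts) if rollout(edited) != correct_answer)
    return flips / n_rollouts


def prune_chain(
    steps: Sequence[str],
    rollout: Rollout,
    correct_answer: str,
    threshold: float = 0.5,  # assumed cutoff, chosen only for illustration
) -> List[str]:
    """Keep only the steps whose estimated necessity reaches the threshold."""
    return [
        step
        for i, step in enumerate(steps)
        if estimate_necessity(steps, i, rollout, correct_answer) >= threshold
    ]


def toy_rollout(chain: List[str]) -> str:
    """Deterministic stand-in for a model: it answers correctly only if both
    arithmetic steps are still present in the chain."""
    has_half = any("48 / 2" in s for s in chain)
    has_sum = any("48 + 24" in s for s in chain)
    return "72" if has_half and has_sum else "unknown"


steps = [
    "48 / 2 = 24",
    "I will now double-check the calculation",
    "48 + 24 = 72",
]
necessity = [estimate_necessity(steps, i, toy_rollout, "72") for i in range(len(steps))]
pruned = prune_chain(steps, toy_rollout, "72")
print(necessity)
print(pruned)
```

With a real model the rollout would be stochastic, so the flip rate is only an estimate and more rollouts reduce its variance. Scoring each step independently also ignores interactions between steps, a simplification of this sketch.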

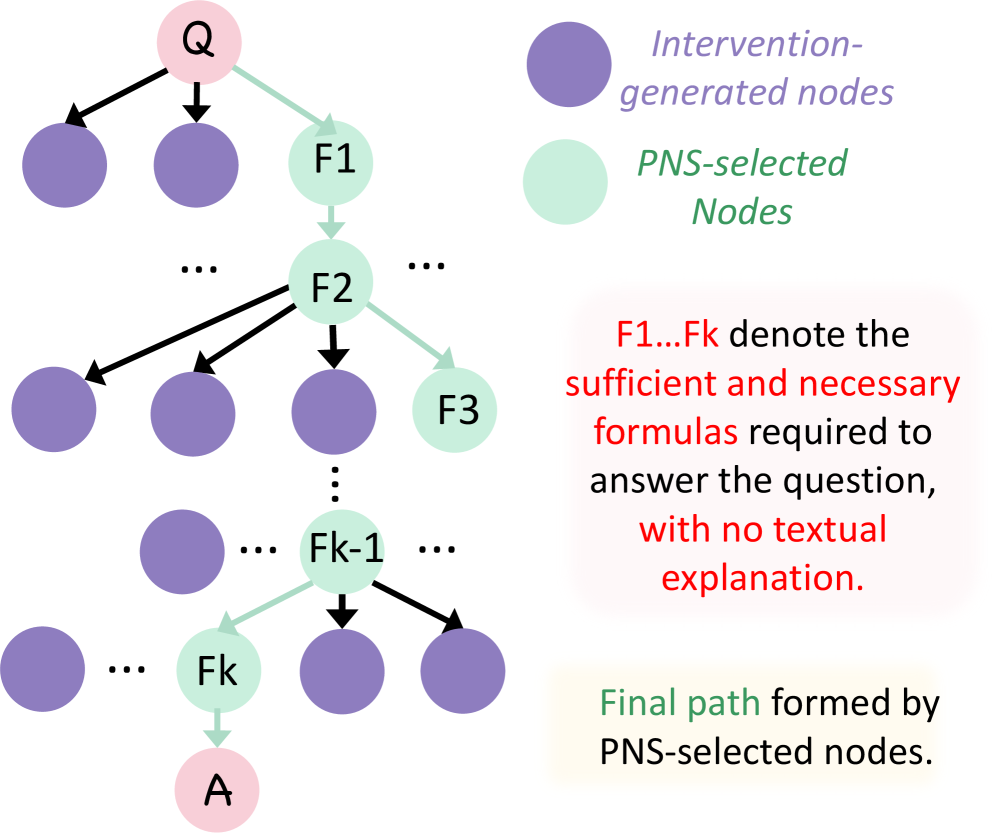

Architecture

The PNS-based evaluation and optimization pipeline.

Evaluation Highlights

- Reduced average token count by ~45% (from 171.8 to 93.4) on GSM-8k while maintaining or improving accuracy

- Fine-tuning on PNS-optimized CoT data improved accuracy on AIME 2024 by +3.4% compared to standard CoT

- Achieved 67.2% accuracy on MATH-500 with Qwen2.5-7B-Instruct (SFT), outperforming the base model's 58.8%
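As a quick sanity check on the figures above, the relative token reduction and the MATH-500 gain can be recomputed from the reported numbers. This is only a small arithmetic sketch; the helper name `relative_reduction` is an illustrative choice, not something from the paper.

```python
def relative_reduction(before: float, after: float) -> float:
    """Relative decrease from `before` to `after`, as a fraction of `before`."""
    return (before - after) / before


# Average tokens per GSM-8k solution, as reported in the summary.
token_reduction_pct = round(relative_reduction(171.8, 93.4) * 100, 1)

# MATH-500 accuracy gain of the SFT model over the base model, in percentage points.
math500_gain_points = round(67.2 - 58.8, 1)

print(token_reduction_pct, math500_gain_points)
```

The token figures work out to a reduction of roughly 45.6%, in line with the "~45%" quoted, and the MATH-500 gap is 8.4 percentage points.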

Breakthrough Assessment

8/10

Strong theoretical grounding in causal inference applied to CoT. The method successfully reduces token costs while boosting accuracy, a rare 'win-win' in efficiency/performance trade-offs.