📝 Paper Summary

Mechanistic Interpretability

Chain-of-Thought (CoT) Reasoning

Mechanistic analysis reveals that Transformers with Chain-of-Thought learn robust state tracking algorithms by implementing Finite State Automata via late-layer MLP neurons, distinguishing them from standard Transformers and implicit CoT models.

Core Problem

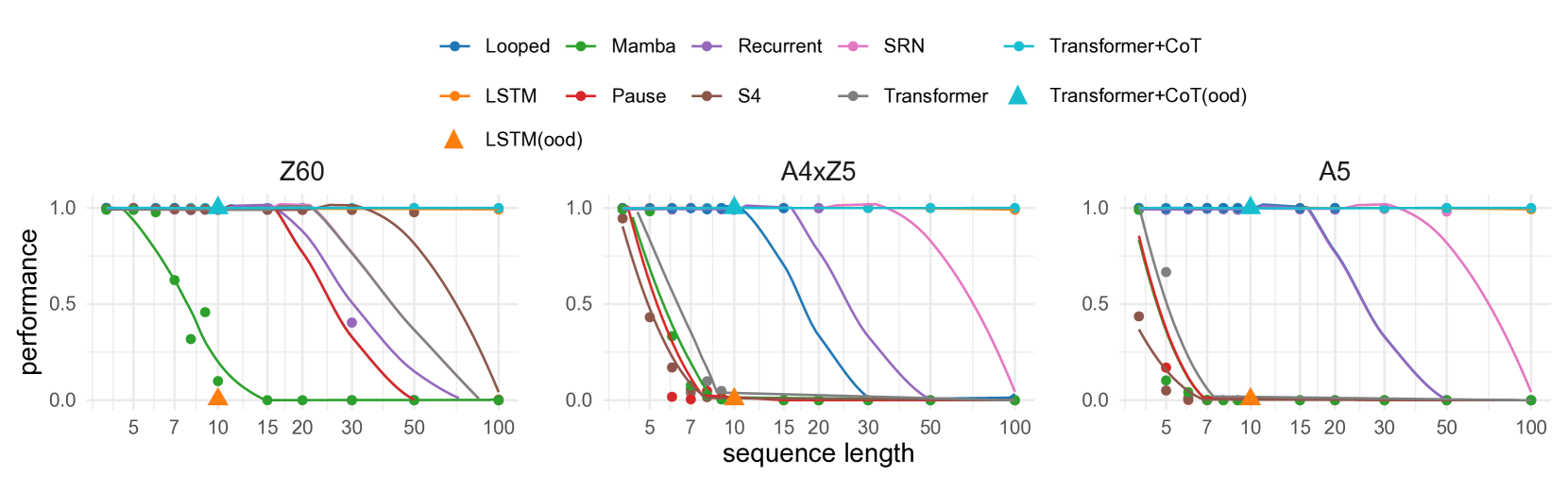

Standard Transformers and state-space models fail to learn state tracking for complex groups (like A5) or generalize to arbitrary lengths, often learning statistical shortcuts rather than true world models.

Why it matters:

- Theoretical expressiveness does not guarantee practical learnability; models often fail to converge on tasks they can theoretically represent

- Understanding if CoT actually recovers a world model (FSA) or just learns shortcuts is crucial for trusting generative models in complex reasoning

- State tracking is foundational for downstream tasks like entity tracking, navigation, and mathematical reasoning

Concrete Example:

In the parity problem (Z2 group), a standard Transformer might try to count the number of 1s in a sequence to determine evenness. This shortcut fails when the sequence length exceeds the training distribution. A true FSA approach tracks the current state (Even/Odd) step-by-step, which standard Transformers fail to learn efficiently without CoT.

Key Novelty

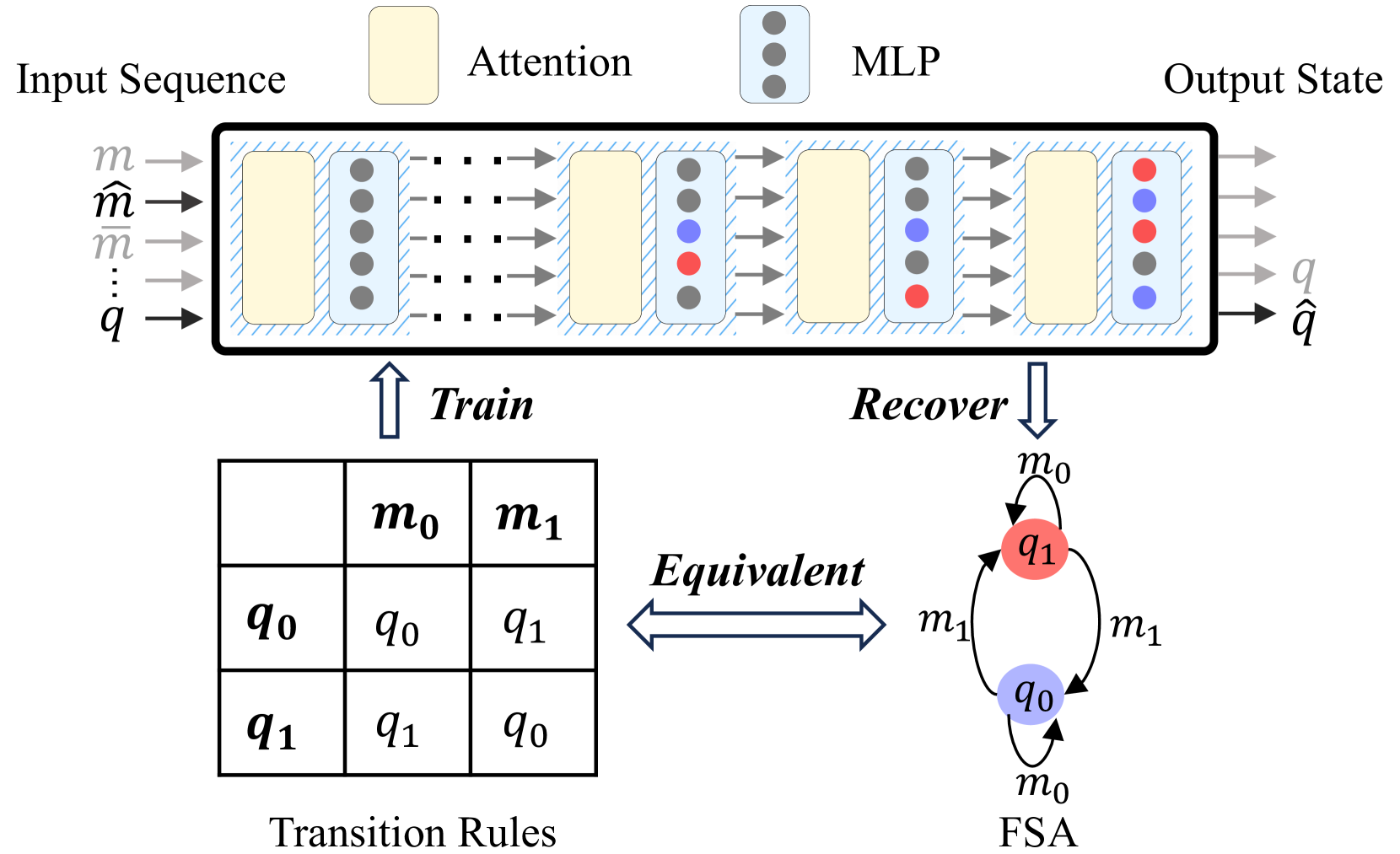

Mechanistic Confirmation of FSA Formulation in CoT

- Identifies that late-layer MLP neurons are the specific circuit responsible for representing states in the Chain-of-Thought process

- Proposes two interpretability metrics, 'compression' and 'distinction', to mathematically prove the model groups diverse input histories into discrete state representations

- Demonstrates that CoT allows the model to learn 'non-solvable' groups (A5) that standard Transformers and State Space Models cannot handle

Architecture

Conceptual illustration of the Z2 (parity) State Tracking problem and its corresponding Finite State Automaton (FSA).

Evaluation Highlights

- Transformer+CoT achieves nearly 100% accuracy on 'compression' and 'distinction' metrics, proving it internally represents discrete FSA states

- Transformer+CoT is the only architecture (compared to Mamba, S4, RNN, Standard Transformer) to successfully learn state tracking for the non-solvable group A5 on arbitrary lengths

- Demonstrates robustness by maintaining state tracking capabilities even when intermediate steps are skipped or noise is introduced

Breakthrough Assessment

8/10

Strong mechanistic evidence linking CoT to FSA formation. Bridges the gap between theoretical expressiveness and practical learnability, offering a clear explanation for why CoT works on sequential reasoning.