📝 Paper Summary

Chain of Thought (CoT)

Latent Reasoning

Search and Planning

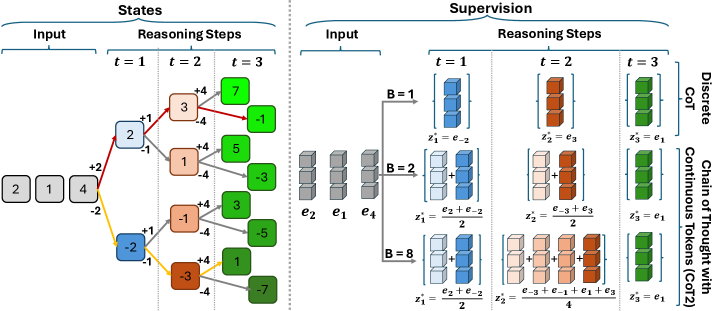

CoT2 replaces discrete tokens with continuous vectors to enable tracking multiple reasoning paths in parallel within a single trace, constrained by embedding dimension rather than vocabulary size.

Core Problem

Standard Chain-of-Thought relies on discrete token sampling, which forces early commitment to a single reasoning path and limits information flow to at most log2(v) bits per step, where v is the vocabulary size.

Why it matters:

- Discrete sampling causes 'snowballing errors' where early mistakes derail the entire reasoning chain, preventing exploration of alternatives without expensive multi-path decoding

- The information bottleneck of discrete tokens wastes the high-dimensional capacity of modern embeddings (d >> log v), limiting how much context or state can be passed forward
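To make the bottleneck concrete, the sketch below computes the log2(v) bound for one sampled token and compares it with the number of embedding coordinates. The vocabulary size and embedding dimension are hypothetical values chosen for illustration and do not come from the paper.

```python
import math

def discrete_bits_per_step(vocab_size: int) -> float:
    """Upper bound on the bits carried by one sampled token: log2(v)."""
    return math.log2(vocab_size)

# Hypothetical sizes, used only to illustrate d >> log v.
vocab_size = 32_000
embed_dim = 4_096

bits = discrete_bits_per_step(vocab_size)
print(f"one discrete token carries at most {bits:.1f} bits")
print(f"embedding coordinates available per step: {embed_dim}")
print(f"ratio d / log2(v): {embed_dim / bits:.0f}")
```

The comparison is only indicative: how much usable information a d-dimensional vector carries depends on numerical precision and on what the model learns to decode from it.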

Concrete Example:

In the Minimum Non-Negative Sum (MNNS) task, a discrete model must commit to a specific + or - sign for a number at each step. If it picks the wrong sign early, it cannot recover. CoT2 maintains a superposition of both possibilities in a continuous vector until the final decision.
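The MNNS setting can be sketched in a few lines. The function names below are hypothetical, and `greedy_commit` is only a stand-in for "commit to one sign per step"; it is not the paper's discrete baseline. `reachable_sums` lists the partial sums that a parallel tracker would need to keep alive at each step.

```python
from itertools import product

def mnns_brute_force(nums):
    """Smallest non-negative value of sum(s_i * n_i) over signs s_i in {+1, -1}."""
    best = None
    for signs in product((1, -1), repeat=len(nums)):
        total = sum(s * n for s, n in zip(signs, nums))
        if total >= 0 and (best is None or total < best):
            best = total
    return best

def reachable_sums(nums):
    """Sorted partial sums reachable after each number, keeping both signs alive."""
    states = {0}
    history = []
    for n in nums:
        states = {s + n for s in states} | {s - n for s in states}
        history.append(sorted(states))
    return history

def greedy_commit(nums):
    """Commit to one sign per step: keep the running sum as close to zero as possible."""
    total = 0
    for n in nums:
        total = min((total + n, total - n), key=abs)
    # Flipping every sign is also a valid assignment, so report the absolute value.
    return abs(total)

nums = [2, 3, 5]
print(mnns_brute_force(nums))    # optimum over all sign assignments
print(greedy_commit(nums))       # result after committing step by step
print(reachable_sums(nums)[-1])  # all final sums when no sign is committed early
```

On `[2, 3, 5]` the step-by-step commitment ends at 4 while the optimum is 0 (for example +2 +3 -5), which is the kind of unrecoverable early choice the example above describes.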

Key Novelty

Chain of Thought with Continuous Tokens (CoT2)

- Instead of sampling one token, the model outputs a weighted sum (convex combination) of token embeddings, effectively superposing multiple potential thoughts

- Introduces continuous supervised fine-tuning (CSFT), a budget-constrained supervision scheme in which the model is trained to match the distribution of the top-B best reasoning trajectories, enabling parallel exploration

- Proposes Multi-Token Sampling (MTS) for inference, which averages K discrete tokens to approximate the ideal continuous state, bridging deterministic and generative reasoning
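The first and third points can be illustrated with a minimal pure-Python sketch. It assumes that the K tokens in MTS are drawn i.i.d. from the model's output distribution; the toy embeddings, the probabilities, and the function names are hypothetical and not taken from the paper.

```python
import random

def continuous_token(probs, embeddings):
    """Convex combination of token embeddings: sum_i probs[i] * embeddings[i]."""
    dim = len(embeddings[0])
    return [sum(p * e[j] for p, e in zip(probs, embeddings)) for j in range(dim)]

def multi_token_sample(probs, embeddings, k, rng):
    """Average the embeddings of k tokens sampled i.i.d. from probs (assumed scheme)."""
    ids = rng.choices(range(len(probs)), weights=probs, k=k)
    dim = len(embeddings[0])
    return [sum(embeddings[i][j] for i in ids) / k for j in range(dim)]

# Toy vocabulary of 3 tokens with 2-dimensional embeddings (hypothetical values).
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
probs = [0.5, 0.25, 0.25]

rng = random.Random(0)
ideal = continuous_token(probs, embeddings)                       # deterministic superposition
single = multi_token_sample(probs, embeddings, k=1, rng=rng)      # k=1 is ordinary discrete sampling
approx = multi_token_sample(probs, embeddings, k=1000, rng=rng)   # larger k approaches ideal

print("ideal:", ideal)
print("k=1:", single)
print("k=1000:", approx)
```

With k=1 the result is a single token embedding, and as k grows the average concentrates around the convex combination, which is the sense in which MTS sits between discrete sampling and the fully continuous state.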

Architecture

Illustration of the supervision strategy comparing Discrete CoT (single path) vs. CoT2 (superposition of multiple paths).

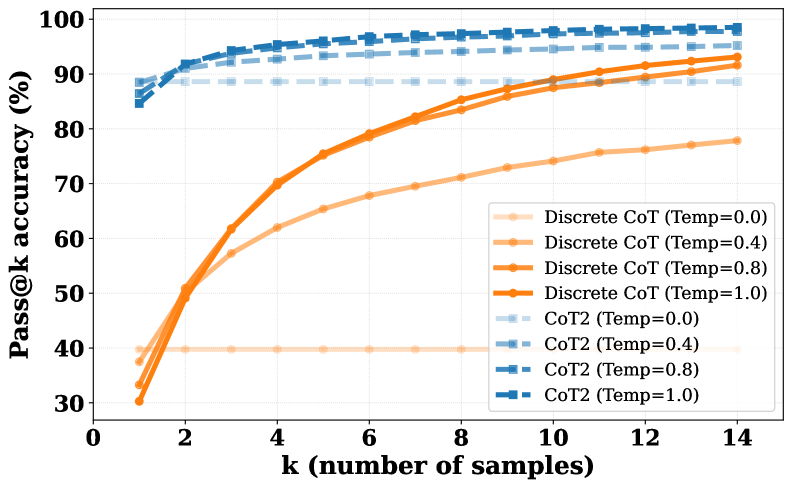

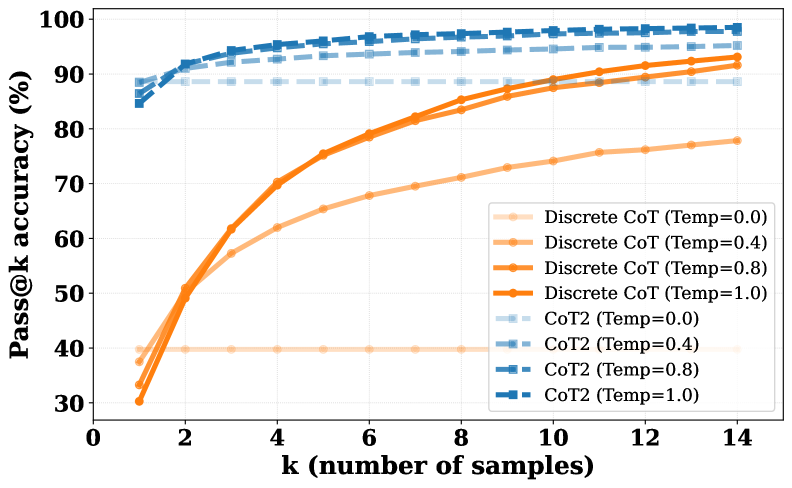

Evaluation Highlights

- CoT2 with a full trajectory budget achieves near 100% accuracy on MNNS, significantly outperforming Discrete CoT, which struggles with early commitment

- Pass@1 performance of CoT2 matches or exceeds Pass@k of discrete baselines, effectively emulating parallel search within a single reasoning trace

- Policy optimization (RL) with CoT2 further improves accuracy over supervised baselines on logical reasoning tasks like ProntoQA and ProsQA

Breakthrough Assessment

8/10

Offers a theoretically grounded and empirically effective method to overcome the discrete branching bottleneck of LLMs, enabling 'single-trace' parallel search.