📝 Paper Summary

Theoretical expressiveness of Transformers

Chain of Thought (CoT) reasoning

Theoretically proves that while standard transformers are limited to parallelizable problems, adding Chain of Thought steps empowers them to solve inherently serial problems by acting as a general-purpose sequential computer.

Core Problem

Standard decoder-only transformers are theoretically limited to solving problems that can be computed in parallel (low circuit depth), making them incapable of solving inherently serial tasks regardless of size.

Why it matters:

- Explains why LLMs struggle with math and logic puzzles without step-by-step prompting despite massive scale

- Current theoretical bounds for transformers are loose, often assuming unrealistic precision or failing to explain the specific utility of intermediate steps

- Understanding the mechanism of CoT is crucial for designing better architectures for complex reasoning

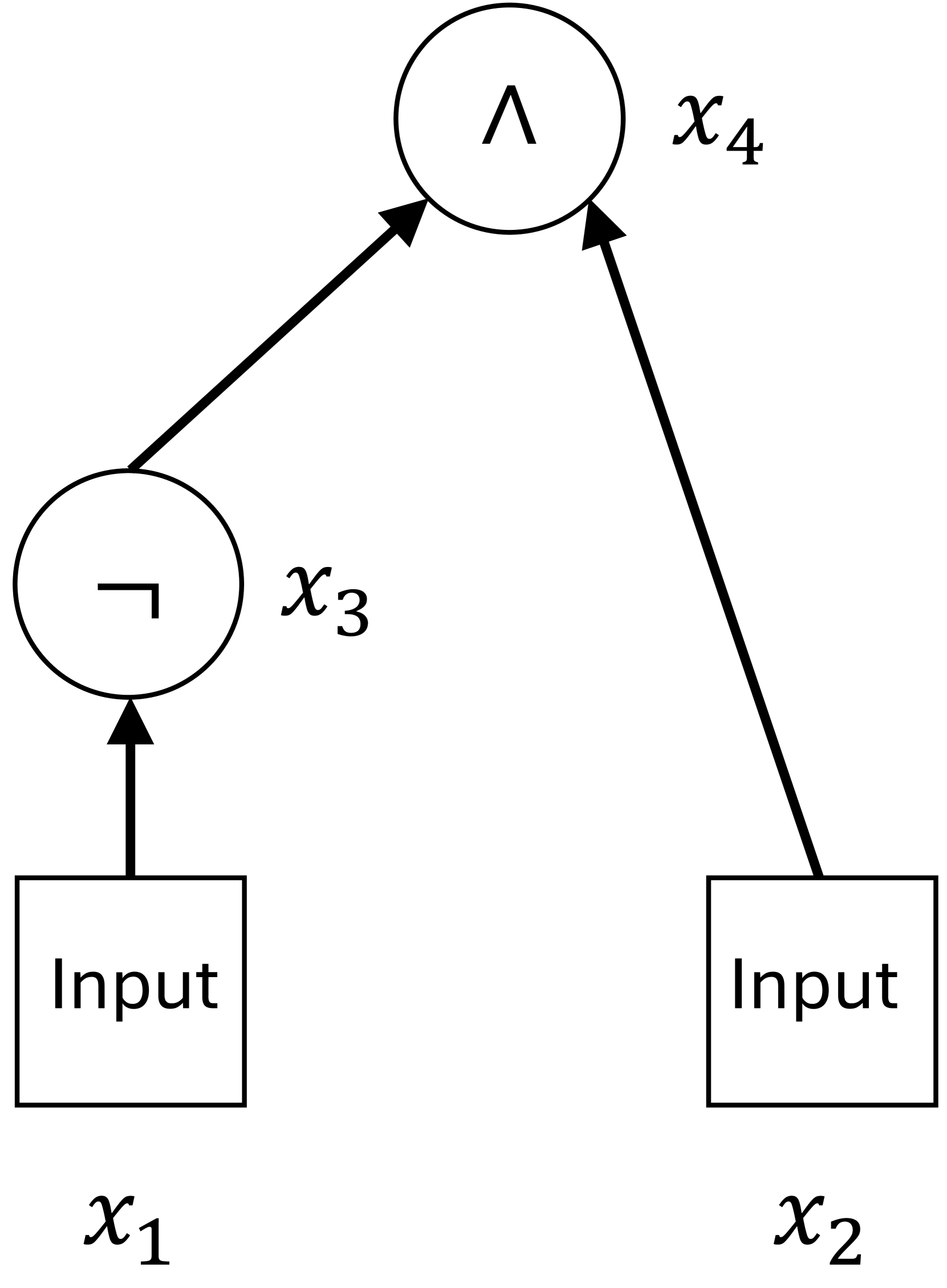

Concrete Example:

A standard transformer cannot solve the 'permutation composition' problem (calculating the result of applying multiple permutations in sequence, e.g., f(g(h(x)))) because it requires tracking the state sequentially, which a parallel circuit cannot do efficiently. With CoT, it can output the result of h(x), then g(h(x)), and finally f(g(h(x))).

Key Novelty

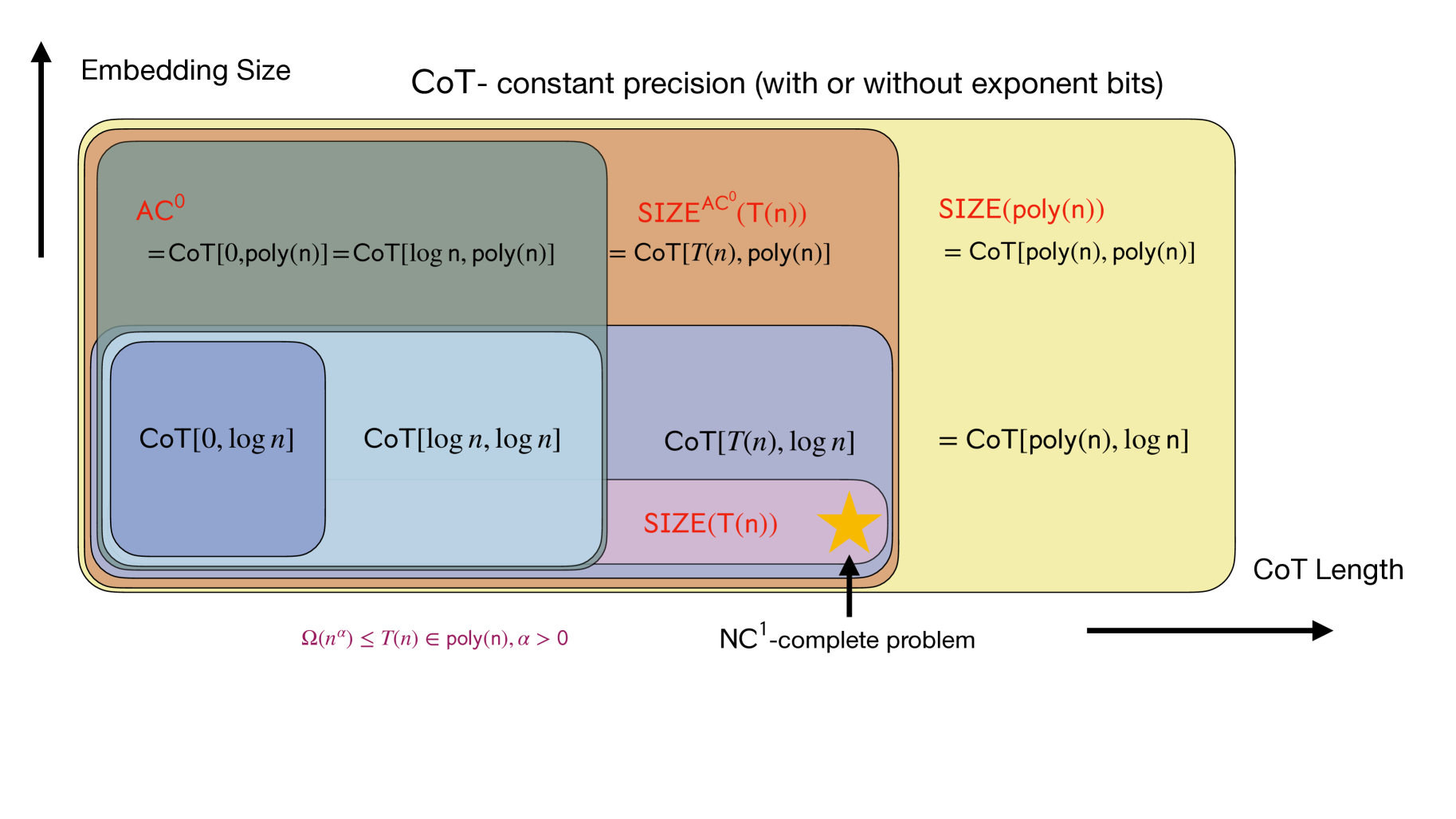

CoT as a Serial Computing Mechanism

- Establishes a tighter upper bound for standard constant-precision transformers, proving they are limited to the complexity class AC0 (simpler than previously thought TC0)

- Proves that with T steps of CoT, these transformers can simulate any Boolean circuit of size T, effectively enabling general serial computation

- Demonstrates that polynomial steps of CoT allow transformers to solve any problem in P/poly (polynomial size circuits), a massive jump in expressiveness

Architecture

A conceptual diagram contrasting Standard Transformers vs. CoT Transformers mapped to complexity classes

Evaluation Highlights

- Transformers with CoT achieve >90% accuracy on 5-element permutation composition tasks where standard transformers fail completely (<10% accuracy) even with depth 16

- On modular addition (a parallelizable task), standard transformers solve it easily (100% accuracy) with depth 1, validating the theory that CoT is only needed for serial tasks

- CoT enables solving the Circuit Value Problem (tracking logic gate values) with near 100% accuracy, whereas standard models remain at random guessing (~50%) regardless of depth

Breakthrough Assessment

9/10

Provides a fundamental theoretical justification for why CoT works. It bridges the gap between empirical success and circuit complexity theory, offering rigorous proofs for the serial vs. parallel nature of Transformers.