📝 Paper Summary

LLM Interpretability

Theoretical Deep Learning

Reasoning

Bounded-depth Transformers cannot theoretically solve basic math or dynamic programming tasks directly due to circuit complexity limitations, but Chain-of-Thought enables them to succeed by serving as a recursive loop that increases effective depth.

Core Problem

Autoregressive Transformers struggle to directly generate correct answers for complex tasks involving mathematics, logic, or reasoning, despite their success in other NLP areas.

Why it matters:

- The underlying mechanism of why Chain-of-Thought (CoT) dramatically improves performance remains elusive and mysterious

- It is unclear if LLMs have inherent limitations in solving math problems directly or if it's merely a training issue

- Understanding these mechanisms is crucial for justifying the reliability of LLMs in tackling complex real-world decision-making tasks

Concrete Example:

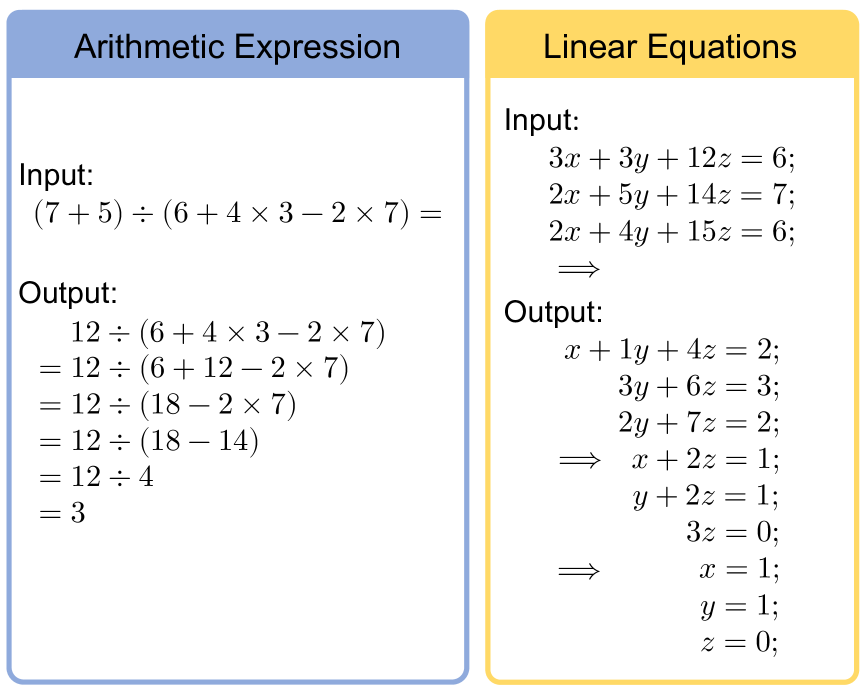

When asked to solve a system of linear equations directly, a standard Transformer might output a wrong answer immediately. However, with CoT, it generates the Gaussian elimination steps (e.g., 'Step 1: Eliminate x from eq 2...') before outputting the correct solution.

Key Novelty

Circuit Complexity Analysis of CoT

- Models the Transformer as a circuit with bounded depth and analyzes the complexity class of target problems (e.g., Arithmetic is NC1-complete)

- Proves that direct solution is impossible for bounded-depth Transformers (TC0) unless size is super-polynomial

- Demonstrates by construction that generating CoT derivations effectively increases the circuit depth, allowing constant-size Transformers to solve these problems

Architecture

Illustration of the input/output format for Arithmetic and Equation tasks using Chain-of-Thought (CoT).

Evaluation Highlights

- Proved theoretically: Bounded-depth log-precision Transformers cannot solve Arithmetic or Linear Equations directly (impossible unless size grows super-polynomially)

- Proved theoretically: A Transformer with depth L=5 and constant size is sufficient to solve Arithmetic tasks using CoT

- Proved theoretically: A Transformer with depth L=4 and constant size is sufficient to solve Linear Equation tasks using CoT

Breakthrough Assessment

9/10

Provides a rigorous theoretical foundation for why Chain-of-Thought is necessary, moving beyond empirical observation to mathematical proof using complexity theory.