📝 Paper Summary

Non-autoregressive generation

Reasoning and Planning

Multi-Granularity Diffusion Modeling (MGDM) outperforms autoregressive models on complex planning tasks by prioritizing difficult subgoals through adaptive token-level loss reweighting during diffusion training.

Core Problem

Autoregressive models struggle with tasks that require complex reasoning and long-term planning because they handle 'subgoal imbalance' poorly: some intermediate steps are significantly harder to predict than others.

Why it matters:

- Current LLMs (like GPT-4) still struggle with tasks demanding consistent global coherence, such as advanced logic puzzles or long-horizon planning

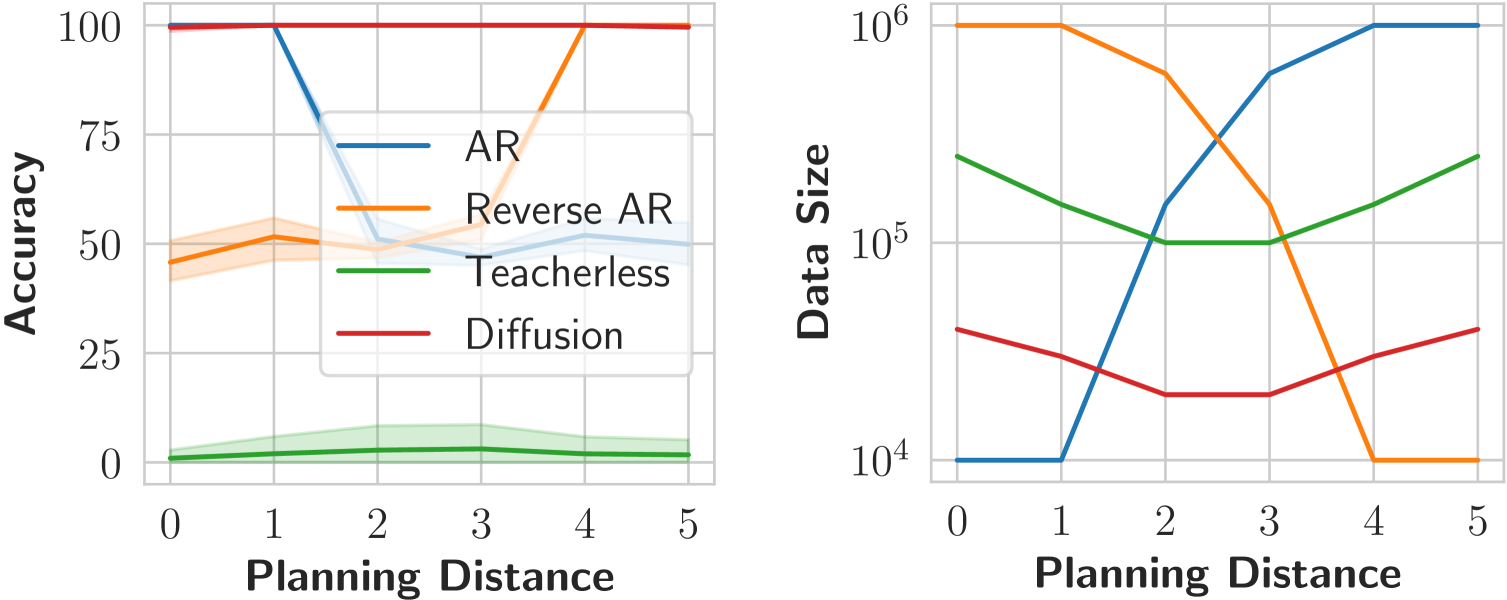

- Autoregressive models require exponentially more data to learn 'hard' subgoals that involve long planning distances, making them data-inefficient for reasoning

- Standard remedies such as inference-time backtracking or tree search are computationally expensive and slow

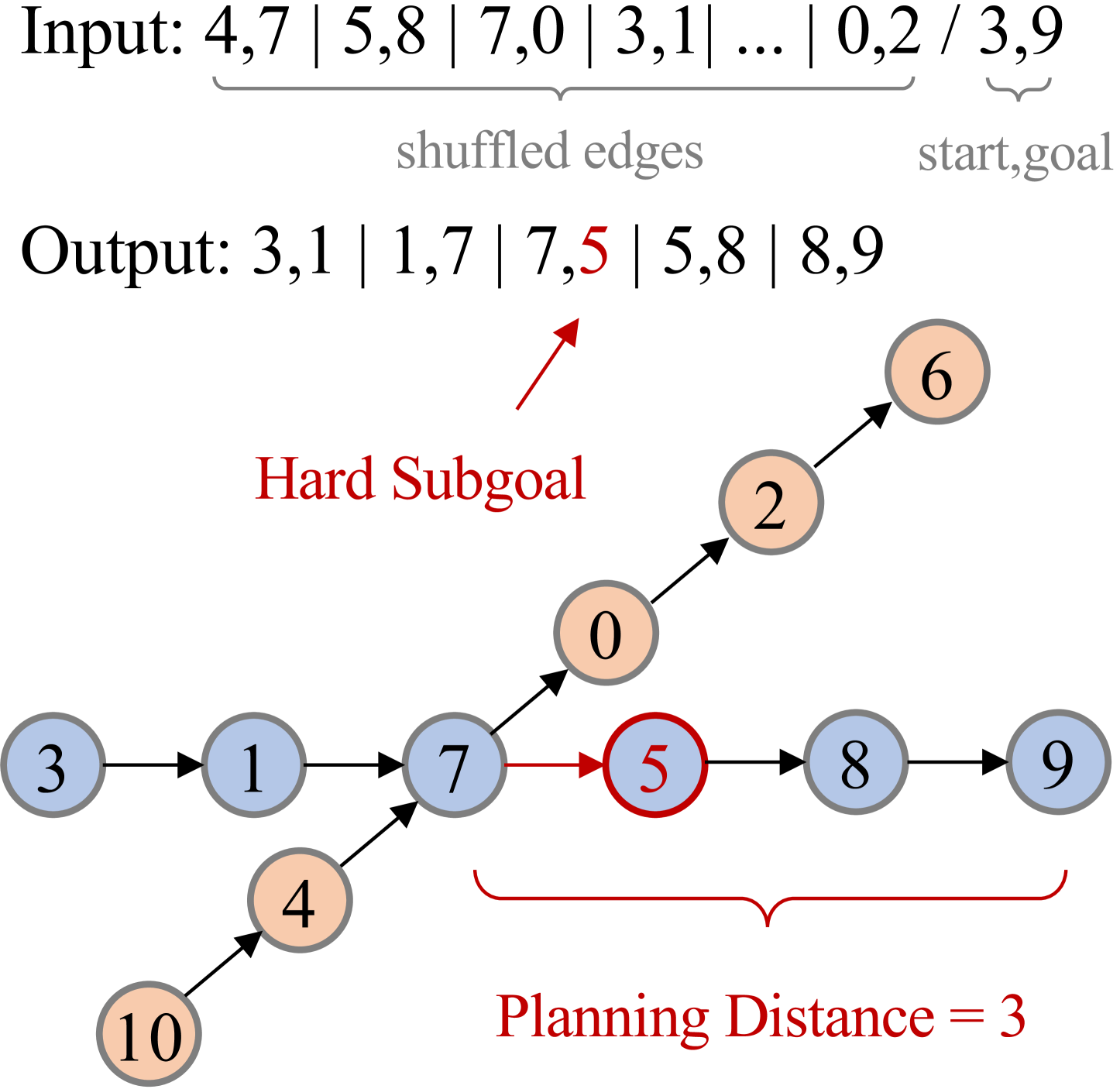

Concrete Example:

In a graph path-finding task where the goal is Node 9 and the current location is Node 7, the model must look 3 steps ahead (a planning distance of 3) to choose Node 5 over Node 0. Autoregressive models, which condition only on past tokens, fail to look far enough ahead and choose the wrong next node, whereas diffusion models can use global context.
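The lookahead in this example can be made concrete with a small breadth-first search. This is a sketch under stated assumptions: the graph is hypothetical (only nodes 7, 5, 0 and 9 come from the example), and we assume the planning distance is the shortest-path length from the current node to the goal, which the paper may define differently. `EDGES`, `distance` and `choose_next` are illustrative names, not part of MGDM.

```python
from collections import deque
from typing import Dict, List, Optional

# Hypothetical graph: only nodes 7, 5, 0 and 9 come from the example above.
# From 7, the goal 9 is reachable via 5 (7 -> 5 -> 3 -> 9) but not via 0.
EDGES: Dict[int, List[int]] = {
    7: [0, 5],
    0: [2],
    2: [4],  # dead-end branch
    5: [3],
    3: [9],
}


def distance(graph: Dict[int, List[int]], src: int, dst: int) -> Optional[int]:
    """Shortest-path length from src to dst via BFS, or None if unreachable.

    Assumption: when src is the current node, we treat this as the planning distance.
    """
    seen = {src}
    queue = deque([(src, 0)])
    while queue:
        node, d = queue.popleft()
        if node == dst:
            return d
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, d + 1))
    return None


def choose_next(graph: Dict[int, List[int]], current: int, goal: int) -> Optional[int]:
    """Pick the neighbor from which the goal is reachable in the fewest steps (needs lookahead)."""
    best, best_d = None, None
    for nxt in graph.get(current, []):
        d = distance(graph, nxt, goal)
        if d is not None and (best_d is None or d < best_d):
            best, best_d = nxt, d
    return best
```

Here `distance(EDGES, 7, 9)` is 3 and `choose_next(EDGES, 7, 9)` returns 5, while naively taking the first listed neighbor would pick 0, a dead end. Making the right choice requires exploring paths beyond the immediate neighbors.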

Key Novelty

Multi-Granularity Diffusion Modeling (MGDM)

- Identifies that diffusion models naturally handle 'hard' subgoals better by decomposing them into multiple 'views' via the iterative noise-addition process

- Introduces an adaptive token-level reweighting mechanism during training that assigns higher importance to tokens that are difficult for the model to predict

- Employs an 'easy-first' decoding strategy during inference to resolve simpler parts of the sequence before tackling the harder constraints
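The three ideas above can be sketched in plain Python. This is a toy under stated assumptions, not the authors' implementation: we assume an absorbing-state ([MASK]) discrete diffusion, a focal-loss-style weight alpha * (1 - exp(-l)) ** beta on each token's cross-entropy l (our assumed form of the reweighting), and confidence-based top-k unmasking for easy-first decoding. `MASK`, `mask_view`, `token_weight`, `reweighted_loss` and `easy_first_decode` are hypothetical names, and the fixed per-position probabilities stand in for a real denoising model.

```python
import math
import random
from typing import List, Sequence, Tuple

MASK = -1  # hypothetical token id for the absorbing [MASK] state


def mask_view(tokens: Sequence[int], t: float, rng: random.Random) -> List[int]:
    """One noisy 'view' of the sequence: each token is masked independently with probability t."""
    return [MASK if rng.random() < t else tok for tok in tokens]


def token_weight(token_loss: float, alpha: float = 1.0, beta: float = 2.0) -> float:
    """Assumed focal-style weight: near 0 for easy tokens, approaching alpha for hard ones."""
    p_true = math.exp(-token_loss)  # model probability of the correct token
    return alpha * (1.0 - p_true) ** beta


def reweighted_loss(token_losses: Sequence[float], masked: Sequence[bool]) -> float:
    """Mean weighted cross-entropy over masked positions (only those are predicted)."""
    terms = [token_weight(l) * l for l, m in zip(token_losses, masked) if m]
    return sum(terms) / max(len(terms), 1)


def easy_first_decode(probs: Sequence[Sequence[float]], k: int = 1) -> Tuple[List[int], List[int]]:
    """Unmask k positions per step, most confident first.

    probs[i][v] is a fixed toy distribution for position i; a real denoiser would
    recompute it after every step, conditioned on the tokens already filled in.
    Returns the decoded sequence and the order in which positions were filled.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    seq = [MASK] * len(probs)
    order: List[int] = []
    while MASK in seq:
        candidates = []
        for i, tok in enumerate(seq):
            if tok == MASK:
                row = probs[i]
                best = max(range(len(row)), key=row.__getitem__)
                candidates.append((row[best], i, best))
        candidates.sort(reverse=True)  # highest confidence first
        for _, i, best in candidates[:k]:
            seq[i] = best
            order.append(i)
    return seq, order
```

For example, `easy_first_decode([[0.4, 0.6], [0.95, 0.05], [0.3, 0.7]])` returns `([1, 0, 1], [1, 2, 0])`: the 0.95-confidence position is resolved first and the least certain one last. In `reweighted_loss`, a token with loss 0.1 gets a weight of roughly 0.009 while one with loss 2.0 gets roughly 0.75, so hard tokens dominate the gradient.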

Architecture

Comparison of loss values between autoregressive (AR) and diffusion models on a 'hard' subgoal (planning distance = 3).

Evaluation Highlights

- 100% accuracy on Sudoku puzzles using MGDM, compared to only 20.7% for the autoregressive baseline

- 91.5% accuracy on the Countdown arithmetic reasoning task, significantly outperforming the autoregressive model's 45.8%

- Demonstrates that autoregressive models need exponentially more training data to solve hard planning subgoals, whereas diffusion models solve them with significantly less data

Breakthrough Assessment

8/10

Provides strong empirical evidence that diffusion models can fundamentally overcome the planning limitations of autoregressive models on logic tasks without external search.