📊 Experiments & Results

Evaluation Setup

Feature steering on reasoning benchmarks

Benchmarks:

- AIME 2024 (Math Olympiad problems)

- MATH-500 (Math problems)

- GPQA Diamond (Graduate-level science QA)

Metrics:

- maj@4 Accuracy (Majority vote of 4)

- Average number of tokens in reasoning trace

- Statistical methodology: Not explicitly reported in the paper

Key Results

| Benchmark | Metric | Baseline | This Paper | Δ |

|---|---|---|---|---|

| Steering experiments demonstrate that amplifying specific reasoning features identified by ReasonScore improves performance on reasoning benchmarks compared to the base model. | ||||

| MATH-500 | maj@4 Accuracy | 53.2 | 55.4 | +2.2 |

| GPQA Diamond | maj@4 Accuracy | 33.0 | 37.0 | +4.0 |

| AIME 2024 | maj@4 Accuracy | 8.6 | 10.0 | +1.4 |

| Steering experiments also show that activating reasoning features causes the model to generate significantly longer reasoning traces, suggesting a causal mechanism. | ||||

| MATH-500 | Avg Token Count | 9.9 | 12.0 | +2.1 |

Experiment Figures

Bar chart showing the percentage of identified reasoning features present at different stages of model training (Base -> Base+Reasoning Data -> Reasoning Model+Data).

Main Takeaways

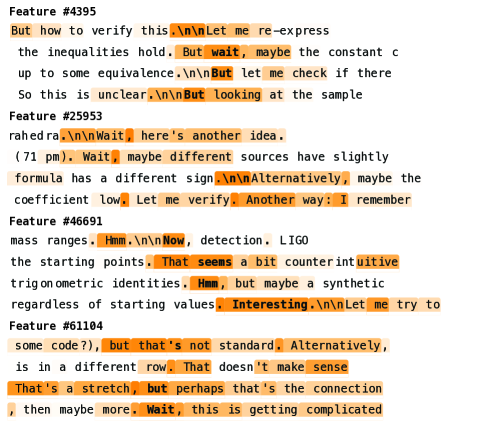

- Identified 46 interpretable features corresponding to Uncertainty, Exploration, and Reflection.

- Model diffing reveals that 60% of these reasoning features are absent in the base model and only emerge after fine-tuning on both reasoning data and the reasoning model itself.

- Steering features identified by ReasonScore consistently increases the length of reasoning traces, indicating the model is engaging in more extensive 'thinking'.

- Performance gains from steering are observed across multiple benchmarks (AIME, MATH, GPQA), validating the functional importance of these features.