📝 Paper Summary

LLM Reasoning

Prompt Engineering

Neuro-symbolic AI

This survey categorizes emerging techniques for enhancing Large Language Model reasoning into prompting strategies, architectural innovations, and learning paradigms to address limitations in logical inference and factual consistency.

Core Problem

While Large Language Models (LLMs) exhibit high fluency and strong factual recall, they struggle with systematic reasoning, often failing at multi-step logical transformations and mathematical proofs, and at maintaining consistency.

Why it matters:

- Reliability is critical for high-stakes domains like law, medicine, and scientific discovery, where plausible-sounding but logically flawed hallucinations are dangerous

- Current statistical learning paradigms, which rely largely on pattern matching, often fail to replicate the structured, multi-step deductive and inductive reasoning of classical symbolic AI

Concrete Example:

In complex mathematical problem-solving, a standard LLM might provide a direct, incorrect answer based on surface patterns. In contrast, reasoning-augmented approaches force the model to generate intermediate logical steps, exposing errors before the final conclusion.
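Explicit intermediate steps matter because they can be checked one at a time. The sketch below illustrates this idea with a toy verifier that finds the first wrong step in an arithmetic chain. It is not a method from the survey. The `"a op b = c"` step format and the `first_bad_step` helper are hypothetical, and no model is called: the chain is hard-coded.

```python
import re
from fractions import Fraction
from typing import List, Optional

# Hypothetical step format: one binary operation per line, e.g. "17 * 3 = 51".
STEP_PATTERN = re.compile(r"^\s*(-?\d+)\s*([-+*/])\s*(-?\d+)\s*=\s*(-?\d+(?:/\d+)?)\s*$")


def evaluate(a: int, op: str, b: int) -> Fraction:
    """Compute `a op b` exactly; Fraction avoids truncating division."""
    x, y = Fraction(a), Fraction(b)
    if op == "+":
        return x + y
    if op == "-":
        return x - y
    if op == "*":
        return x * y
    return x / y  # op == "/"; the caller rules out division by zero


def first_bad_step(steps: List[str]) -> Optional[int]:
    """Return the index of the first incorrect or unparseable step, or None."""
    for i, step in enumerate(steps):
        match = STEP_PATTERN.match(step)
        if match is None:
            return i  # steps we cannot parse are treated as failures
        a, op, b, claimed = match.groups()
        if op == "/" and int(b) == 0:
            return i  # division by zero is always a bad step
        if evaluate(int(a), op, int(b)) != Fraction(claimed):
            return i
    return None


# A chain with a slip in the second step (51 + 24 is 75, not 76).
chain = ["17 * 3 = 51", "51 + 24 = 76", "76 / 4 = 19"]
print(first_bad_step(chain))  # → 1
```

A direct answer gives a verifier nothing to inspect. A step-by-step trace localizes the error to step 1, before it propagates to the final conclusion.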

Key Novelty

Taxonomy of Reasoning Enhancement Methods

- Categorizes reasoning improvements into three distinct domains: Prompting Strategies (guiding thought processes), Architectural Innovations (integrating external knowledge/logic), and Learning Paradigms (specialized fine-tuning)

- Synthesizes research on neuro-symbolic models, which integrate classical symbolic AI (logic, rules) with modern deep learning to improve explainability and robustness
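One common neuro-symbolic pattern has a neural model extract facts and a symbolic engine derive conclusions from explicit rules, so each inference can be traced. The sketch below shows only the symbolic half: a forward-chaining reasoner over single-variable Horn rules. The fact and rule encodings and the `forward_chain` name are illustrative assumptions, not a system described in the survey. The "extracted" facts are hard-coded rather than produced by an LLM.

```python
from typing import FrozenSet, List, Set, Tuple

Fact = Tuple[str, str]              # (predicate, entity), e.g. ("bird", "tweety")
Rule = Tuple[FrozenSet[str], str]   # (premise predicates, conclusion predicate)


def forward_chain(facts: Set[Fact], rules: List[Rule]) -> Set[Fact]:
    """Apply rules of the form p1(x) and ... and pn(x) -> q(x) until a fixpoint."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        entities = {entity for _, entity in known}
        for premises, conclusion in rules:
            for entity in entities:
                if (conclusion, entity) in known:
                    continue
                if all((p, entity) in known for p in premises):
                    known.add((conclusion, entity))
                    changed = True
    return known


# Hypothetical facts, standing in for what a language model might extract from text.
facts = {("penguin", "pingu"), ("bird", "tweety")}
rules = [
    (frozenset({"penguin"}), "bird"),
    (frozenset({"bird"}), "has_feathers"),
]
derived = forward_chain(facts, rules)
print(("has_feathers", "pingu") in derived)  # → True
```

Every derived fact follows from a named rule and known premises, which is the explainability benefit attributed to the symbolic side. Real systems use richer logics, such as multi-variable rules and unification.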

Architecture

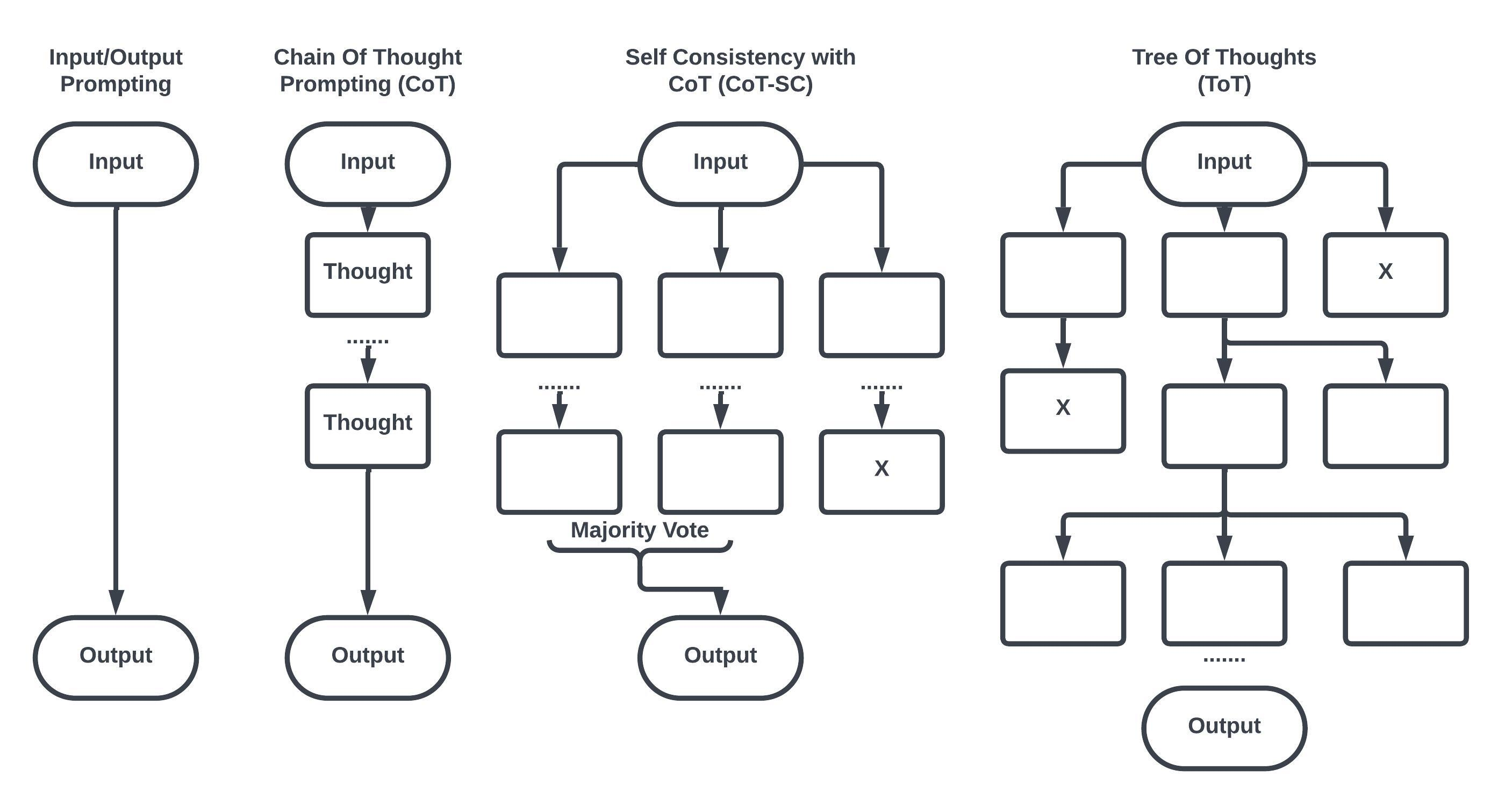

A conceptual taxonomy of prompting-based reasoning techniques (Chain-of-Thought, Self-Consistency, Tree-of-Thought)
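Self-Consistency samples several Chain-of-Thought reasoning paths for the same question and returns the most frequent final answer. The sketch below covers only the voting stage. Sampling from a model is omitted and the completions are hard-coded. The "The answer is N" output format and the helper names are assumptions made for illustration.

```python
import re
from collections import Counter
from typing import List, Optional

# Assumed convention: each sampled completion ends with "... the answer is N".
ANSWER_PATTERN = re.compile(r"answer is\s*(-?\d+(?:\.\d+)?)", re.IGNORECASE)


def extract_answer(completion: str) -> Optional[str]:
    """Return the last stated final answer in a completion, or None if absent."""
    matches = ANSWER_PATTERN.findall(completion)
    return matches[-1] if matches else None


def self_consistency(completions: List[str]) -> Optional[str]:
    """Majority-vote over final answers; ties go to the answer seen first."""
    answers = [a for a in map(extract_answer, completions) if a is not None]
    if not answers:
        return None
    return Counter(answers).most_common(1)[0][0]


samples = [
    "17 * 3 = 51, 51 + 24 = 75. The answer is 75.",
    "17 * 3 = 51, 51 + 24 = 76. The answer is 76.",
    "Adding 24 to 51 gives 75, so the answer is 75.",
]
print(self_consistency(samples))  # → 75
```

Voting over final answers rather than whole traces lets different but valid reasoning paths reinforce each other. Tree-of-Thought goes further by scoring and expanding partial reasoning states instead of only complete paths.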

Breakthrough Assessment

4/10

A comprehensive survey that organizes existing literature effectively but does not propose a novel method or provide new experimental benchmarks.