📝 Paper Summary

Chain-of-Thought (CoT) Efficiency

Prompt Engineering

Data Selection for Fine-tuning

SPIRIT improves reasoning efficiency by using perplexity to identify Chain-of-Thought steps that contribute little to model confidence, then removing or merging them.

Core Problem

Chain-of-Thought reasoning incurs high computational costs because of long generation sequences, which often include steps that do not contribute to the final answer.

Why it matters:

- Detailed reasoning processes increase generation time and computational cost for LLMs

- It is unclear which reasoning steps are truly essential for a specific model, leading to redundant processing

- Removing steps at random can disrupt coherence and degrade accuracy, especially for smaller models like LLaMA3-8B

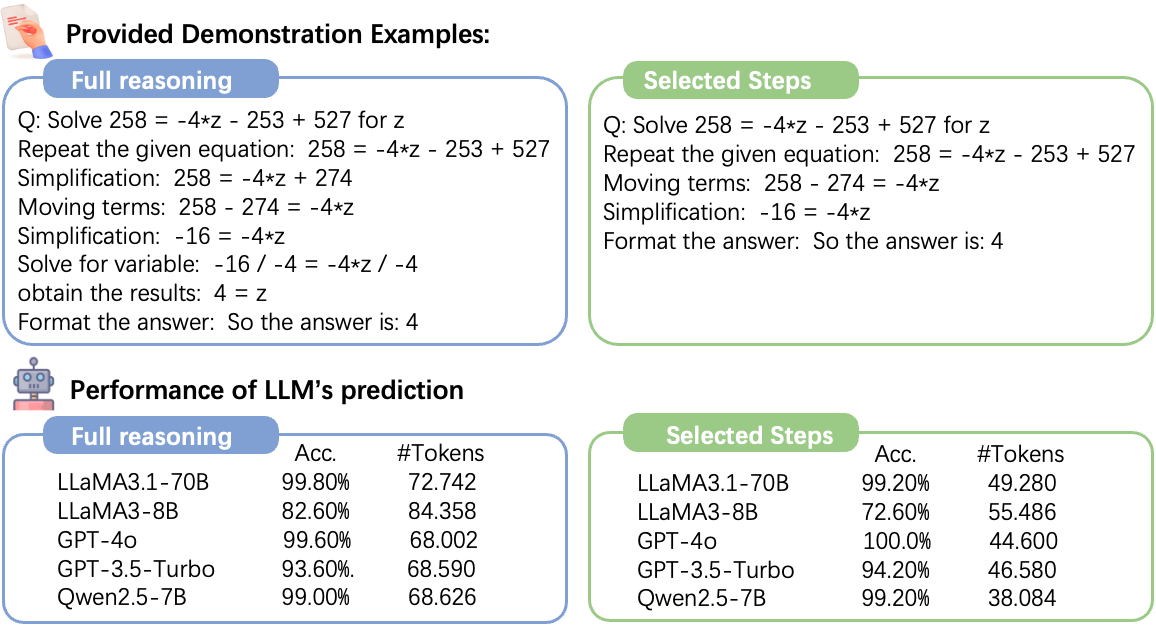

Concrete Example:

In a math problem requiring intermediate calculations (e.g., '40 - 4 = 36' followed by '36 * 3/4 = 27'), removing the first step causes the value '36' to appear abruptly in the second step. This lack of context confuses the model, whereas a merged step like '(40-4)*3/4 = 27' maintains coherence.
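The coherence problem in this example can be made concrete with a small check. The sketch below is illustrative only and is not taken from the paper: the helper `undefined_values` and the set `given` (the numbers assumed to appear in the problem statement) are hypothetical. It flags any number a step uses that neither the problem nor an earlier step has produced.

```python
import re

def undefined_values(steps, given):
    """Return numbers a step uses that neither the problem statement
    nor an earlier step has provided (hypothetical coherence check)."""
    known = set(given)
    missing = []
    for step in steps:
        lhs, rhs = step.split("=")
        for tok in re.findall(r"\d+", lhs):
            if int(tok) not in known:
                missing.append(int(tok))
        known.add(int(rhs.strip()))  # the step's result is now available
    return missing

given = {40, 4, 3}  # assumption: numbers stated in the problem itself

full = ["40 - 4 = 36", "36 * 3/4 = 27"]
removed = ["36 * 3/4 = 27"]        # first step deleted
merged = ["(40-4)*3/4 = 27"]       # first step folded into the second

print(undefined_values(full, given))     # no dangling values
print(undefined_values(removed, given))  # 36 appears without a source
print(undefined_values(merged, given))   # no dangling values
```

The removed variant is the only one that references a value with no origin, which is the situation the merge operation is meant to avoid.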

Key Novelty

Stepwise Perplexity-Guided Refinement (SPIRIT)

- Uses Perplexity (PPL) as a proxy for step importance: if removing a reasoning step does not significantly increase PPL, the step is deemed unnecessary

- Introduces a 'Merge' operation alongside 'Remove' to fix coherence issues where a step is redundant but contains values needed for the next step

- Separates strategies for Few-Shot CoT (using a calibration set to measure PPL) and Fine-Tuning (measuring PPL directly on training data)
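The removal criterion above can be sketched as a greedy loop. This is a minimal illustration under stated assumptions, not the paper's algorithm: perplexity is taken in its standard form, the exponential of the negative mean token log-probability; `prune_steps`, its `tolerance` parameter, and `toy_score` are hypothetical names. In practice the scoring function would query a language model on a calibration set or on training data, whereas the toy scorer here only makes the example runnable. The merge operation is not modelled.

```python
import math
from typing import Callable, List, Sequence

def perplexity(log_probs: Sequence[float]) -> float:
    """Standard perplexity: exp of the negative mean token log-probability."""
    return math.exp(-sum(log_probs) / len(log_probs))

def prune_steps(
    steps: List[str],
    score: Callable[[List[str]], float],
    tolerance: float = 1.05,   # assumed threshold: allow up to a 5% PPL increase
    min_steps: int = 1,
) -> List[str]:
    """Greedily drop the step whose removal raises perplexity the least,
    stopping once any removal would exceed tolerance * baseline PPL."""
    kept = list(steps)
    base = score(kept)  # baseline is fixed so cumulative drift stays bounded
    while len(kept) > min_steps:
        candidates = [
            (score(kept[:i] + kept[i + 1:]), i) for i in range(len(kept))
        ]
        best_ppl, best_i = min(candidates)
        if best_ppl > base * tolerance:
            break
        kept.pop(best_i)
    return kept

# Toy stand-in for a model-based scorer (hypothetical): each missing
# essential step adds 1.0 to a base perplexity of 2.0.
ESSENTIAL = {"40 - 4 = 36", "36 * 3/4 = 27"}

def toy_score(steps: List[str]) -> float:
    return 2.0 + len(ESSENTIAL - set(steps))

steps = ["Let us read the problem again.", "40 - 4 = 36", "36 * 3/4 = 27"]
pruned = prune_steps(steps, toy_score)
print(pruned)
```

With the toy scorer, only the filler step is dropped, because removing either calculation pushes the score past the tolerance. A real scorer could rank steps differently, and a step that fails the coherence test would be a candidate for merging instead of removal.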

Architecture

The logical flow of the SPIRIT algorithm for identifying and processing reasoning steps.

Breakthrough Assessment

7/10

Proposes a logical, metric-driven method (perplexity) to optimize CoT length. Addresses the specific issue of coherence during pruning via merging. Its efficacy cannot be judged from this summary, which reports no quantitative results.