📝 Paper Summary

Financial Large Language Models

Reasoning Models

Reinforcement Learning for LLMs

Fin-R1 is a 7-billion parameter financial LLM trained via supervised fine-tuning and Group Relative Policy Optimization on a distilled high-quality reasoning dataset, achieving strong performance with low deployment costs.

Core Problem

General-purpose reasoning models struggle in finance due to fragmented data sources, lack of transparency (black box nature) required for compliance, and weak transferability to specific business scenarios.

Why it matters:

- Financial tasks require integrating heterogeneous knowledge (legal, economic, quantitative) which general models often fail to do coherently

- Regulatory environments demand traceability and explainability, but most models output answers without transparent reasoning paths

- Existing financial models typically rely on pre-training or simple fine-tuning, lacking the deep reasoning capabilities needed for complex tasks like risk pricing

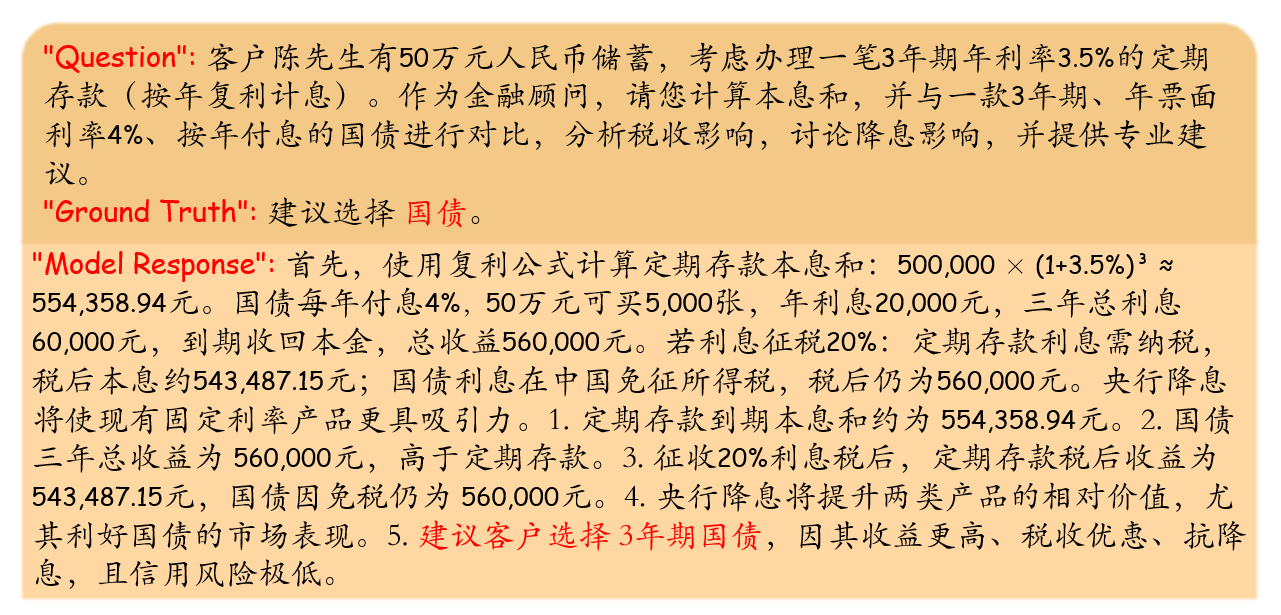

Concrete Example:

Financial data often contains contradictory signals across dispersed sources (e.g., contractual terms vs. market signals). A standard model might memorize training examples, failing to generalize when these signals conflict in new scenarios, whereas a reasoning model needs to explicitly weigh the evidence.

Key Novelty

Two-stage Financial Reasoning Post-Training

- Constructs a specialized reasoning dataset (Fin-R1-Data) by distilling reasoning traces from DeepSeek-R1 and filtering them with Qwen2.5-72B-Instruct

- Applies Supervised Fine-Tuning (SFT) followed by Group Relative Policy Optimization (GRPO) to enforce both correctness and structured, interpretable reasoning chains in a small 7B model

Architecture

The overall two-stage framework pipeline: Data Construction (Distillation & Filtering) and Model Training (SFT + RL).

Evaluation Highlights

- Achieved an average score of 75.2 on established financial reasoning benchmarks

- Outperformed existing state-of-the-art models of the same 7B scale by more than 17 points

- Ranked second overall among tested models, surpassing many larger general-purpose models

Breakthrough Assessment

8/10

Successfully adapts the 'reasoning model' paradigm (like o1/DeepSeek-R1) to a specific vertical (finance) using a 7B model, demonstrating that smaller domain-specific models can achieve high reasoning performance via specialized RL.