📝 Paper Summary

Causal Reasoning in LLMs

Retrieval-Augmented Generation (RAG)

The paper argues that LLMs primarily perform shallow causal retrieval (Level-1) rather than genuine reasoning (Level-2), and proposes a new fresh-corpus benchmark and a goal-oriented RAG method to bridge this gap.

Core Problem

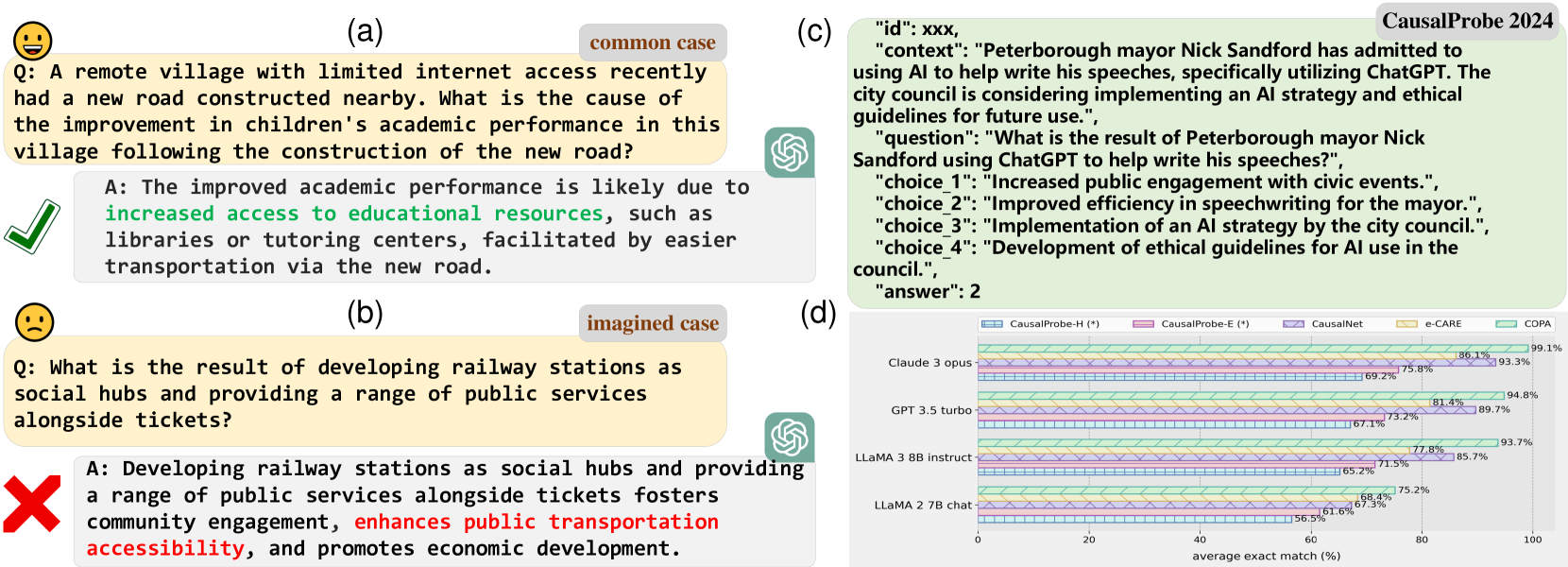

LLMs perform well on causal tasks involving common knowledge but fail on novel or counterfactual scenarios, suggesting they rely on memorized correlations (Level-1) rather than understanding underlying causal mechanisms (Level-2).

Why it matters:

- Current evaluations using older benchmarks (COPA, e-CARE) are contaminated because their data is likely in LLM training sets, creating an illusion of competence.

- Autoregressive next-token prediction is not inherently causal; 'A follows B' in text does not strictly imply 'A causes B', leading to logical errors in unfamiliar contexts.

- Genuine causal reasoning is a prerequisite for strong AI, yet models struggle with discovering new causal knowledge or estimating causal quantities.

Concrete Example:

When asked about the effect of an unusual, imagined scenario like 'developing railway stations as social hubs,' an LLM might hallucinate an irrelevant answer ('enhance public transportation accessibility') rather than reasoning through the specific social implications, whereas it answers common knowledge questions correctly.

Key Novelty

Distinction between Level-1 (Memorized) vs. Level-2 (Genuine) Reasoning & G2-Reasoner Framework

- Introduces a theoretical distinction: Level-1 reasoning retrieves causal patterns from parameters/context (fast, memorized), while Level-2 derives new causal knowledge via sophisticated mechanisms (slow, genuine).

- Proposes G2-Reasoner: A prompt framework that mimics human reasoning by explicitly incorporating 'General Knowledge' (via RAG) and 'intended Goals' to guide the model's deduction process.

Architecture

Conceptual contrast between Level-1 (Memorized) and Level-2 (Genuine) reasoning, and the high-level logic of the G2-Reasoner.

Evaluation Highlights

- On the new CausalProbe-2024 Hard benchmark (fresh news data), LLaMA 2 7B Chat achieves only ~50% accuracy, significantly lower than its performance on older benchmarks.

- Claude 3 Opus, a state-of-the-art model, drops to <70% accuracy on CausalProbe-2024 Hard, exposing the gap between memorization and genuine reasoning.

- The proposed G2-Reasoner framework significantly improves performance on fresh/counterfactual tasks compared to standard prompting, consistent across open and closed-source models.

Breakthrough Assessment

7/10

Strong contribution in exposing the 'memorization vs. reasoning' conflation via a fresh benchmark. The proposed solution (G2-Reasoner) is a logical RAG application but less methodologically novel than the benchmarking insight.