📝 Paper Summary

Mathematical Reasoning

Robustness

Generalization

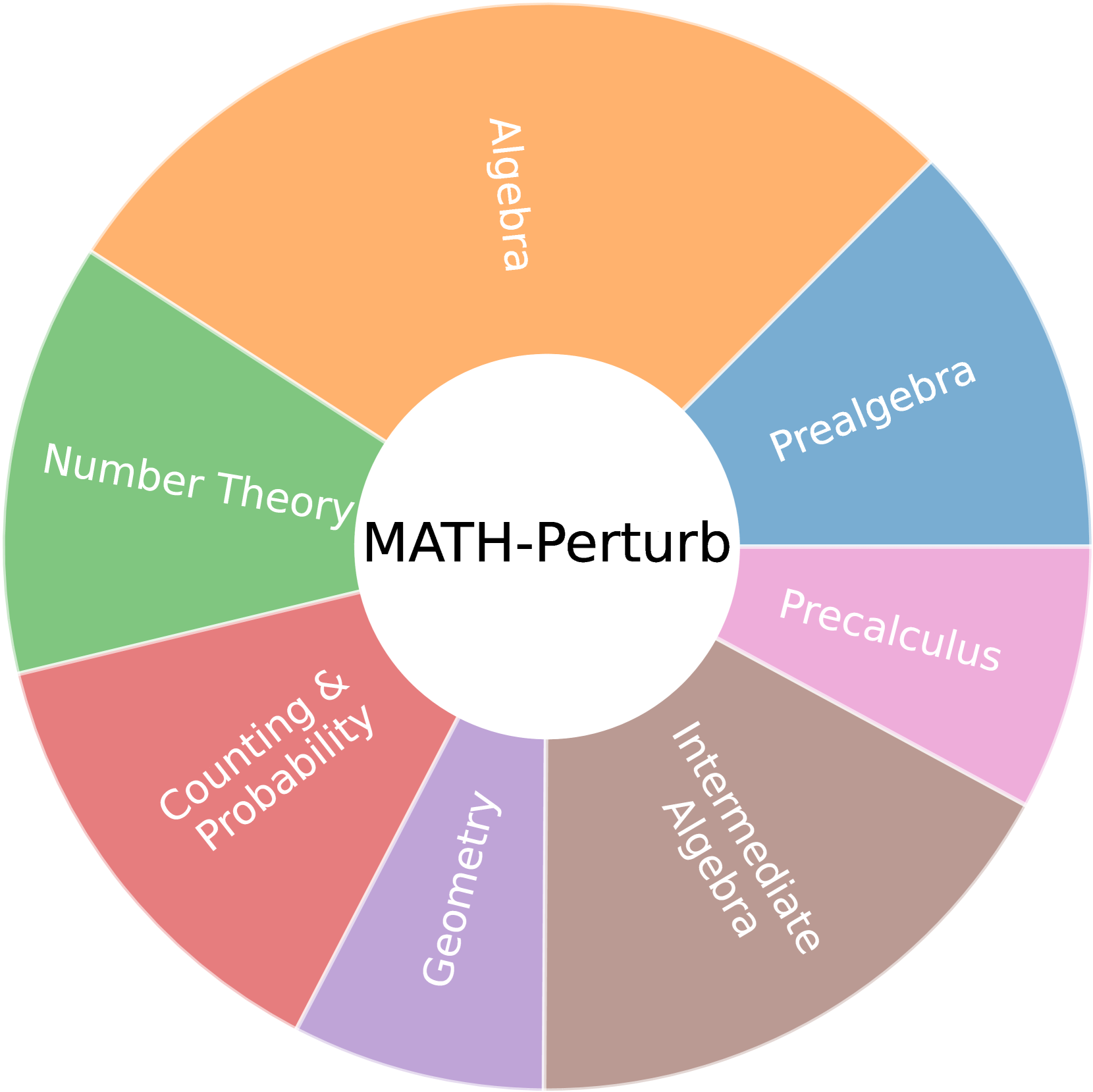

MATH-Perturb introduces a benchmark of 'hard' problem variations—where original solution methods no longer apply—revealing that top LLMs rely heavily on memorized reasoning patterns rather than genuine understanding.

Core Problem

LLMs achieve high scores on math benchmarks, but it is unclear if they truly reason or merely memorize solution templates, as existing robustness tests only use simple numerical perturbations that preserve original reasoning paths.

Why it matters:

- High benchmark scores may be artificial due to data contamination or over-representation of specific problem types in training data

- Models that rely on 'bag-of-heuristics' reasoning fail when problem conditions change fundamentally, making them unreliable for real-world applications where contexts shift

- Current evaluations underestimate the fragility of reasoning models because they do not test 'hard perturbations' that invalidate the memorized solution steps

Concrete Example:

A model might solve a geometry problem correctly using a symmetry argument. When the problem is modified (hard perturbation) such that symmetry no longer holds, the model may blindly apply the original symmetry-based solution anyway, producing a wrong answer (as seen in Figure 5).

Key Novelty

Distinguishing 'Hard' vs. 'Simple' Reasoning Perturbations

- Introduces MATH-P-Hard, a dataset where problems are textually similar to the original but fundamentally altered so that original solution paths are invalid (e.g., breaking a symmetry assumption)

- Contrasts this with MATH-P-Simple, where only non-essential values change, allowing the assessment of whether a model understands the *why* or just memorizes the *how*

Architecture

Conceptual comparison between Simple Perturbation and Hard Perturbation applied to a math problem.

Evaluation Highlights

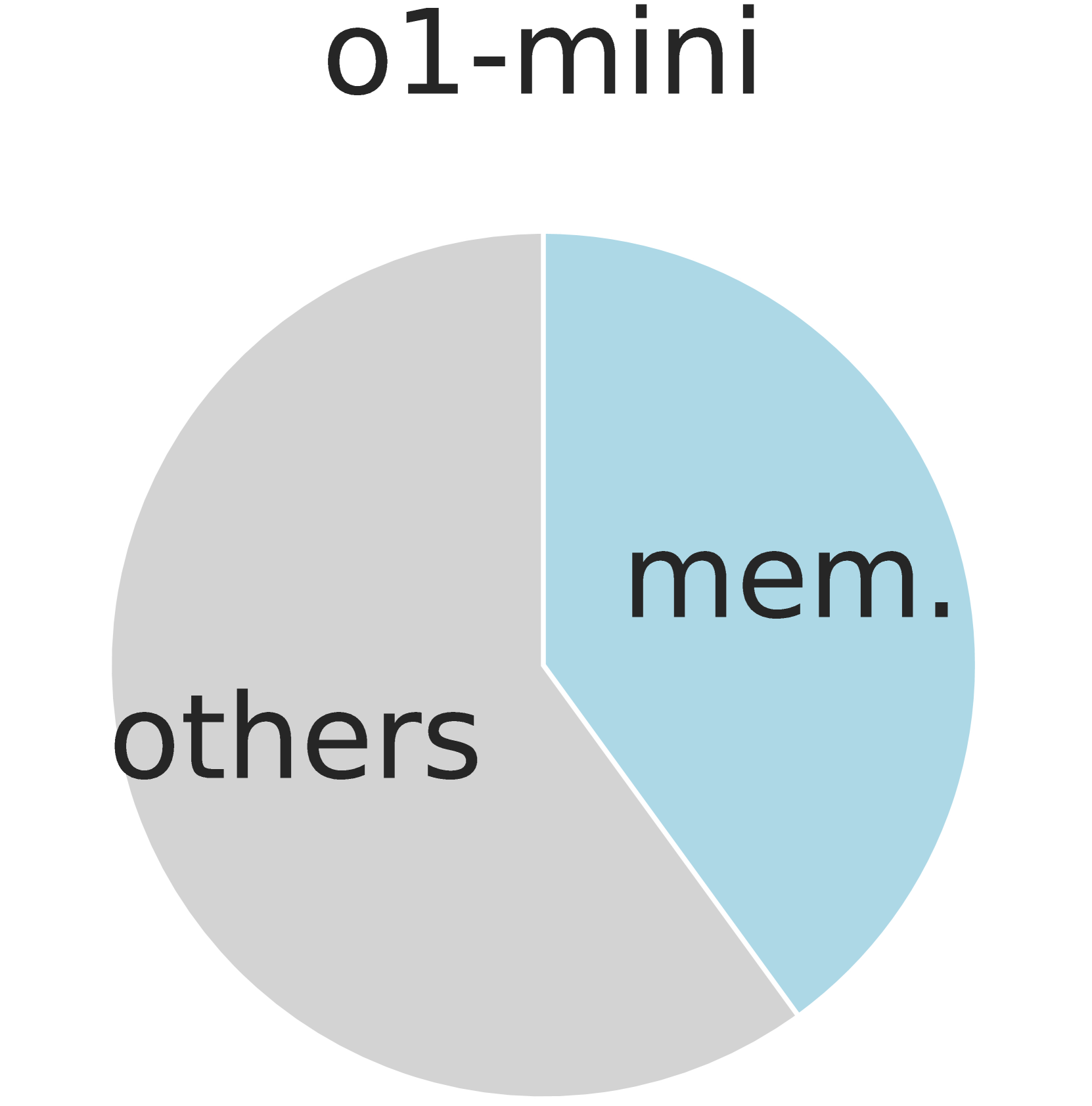

- o1-mini performance drops by 16.49% on MATH-P-Hard compared to original problems, indicating reliance on specific reasoning patterns

- gemini-2.0-flash-thinking suffers a 12.9% accuracy drop on MATH-P-Hard, showing vulnerability even in strong reasoning models

- In-context learning with original problems hurts performance on MATH-P-Hard for large models (18%-40% of correct answers become wrong) due to misleading demonstrations

Breakthrough Assessment

8/10

Significant contribution to evaluation methodology. Exposes a critical weakness (solution template memorization) in SOTA reasoning models that standard benchmarks miss.