📝 Paper Summary

Efficient Reasoning

Reasoning Models (Chain-of-Thought)

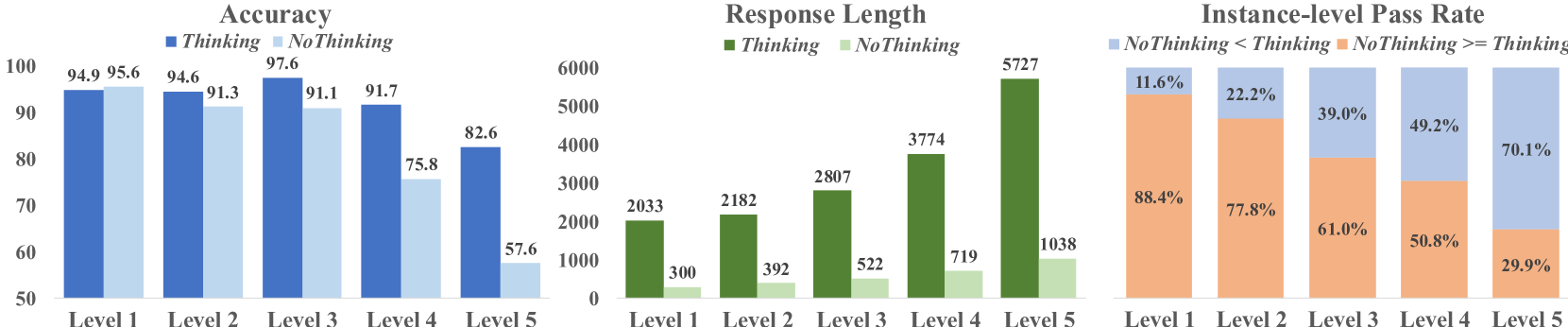

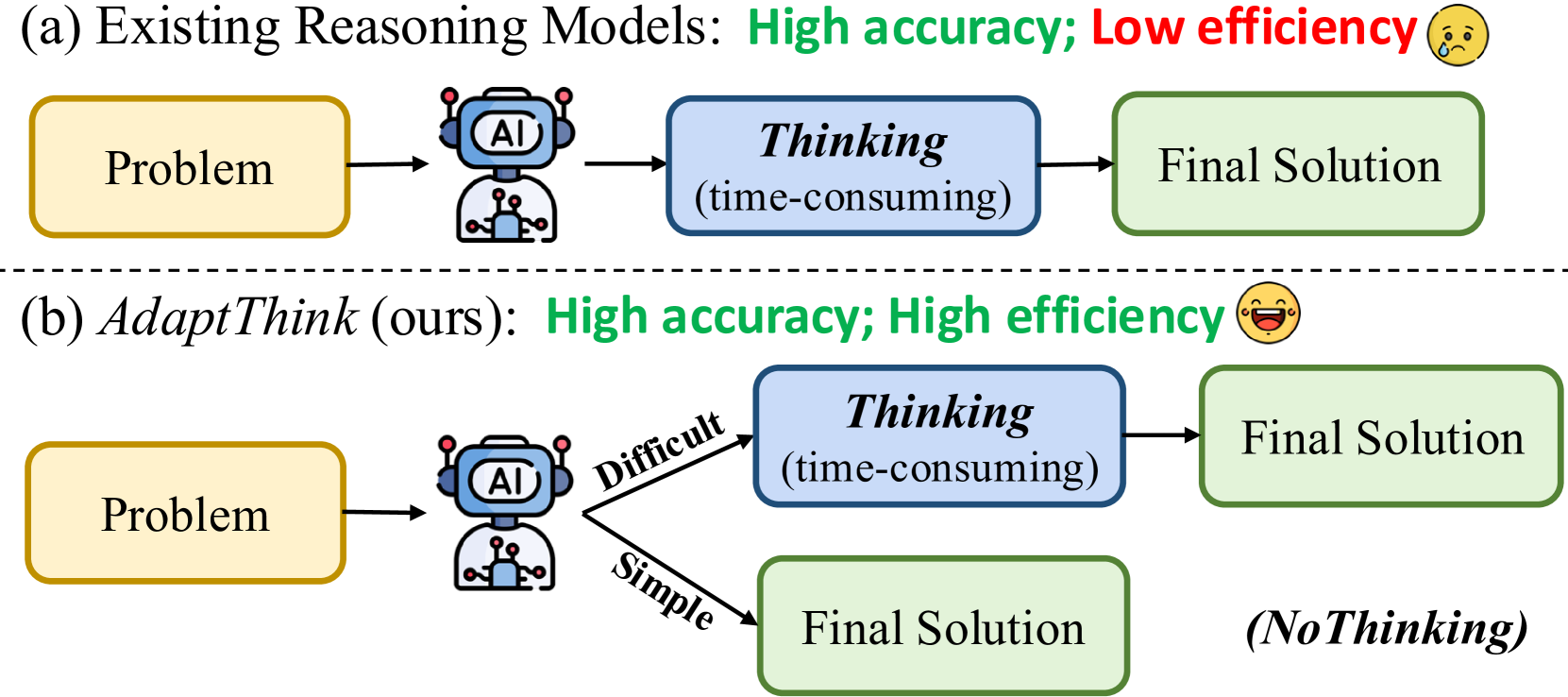

AdaptThink uses reinforcement learning to teach reasoning models to skip the lengthy thinking process for simple problems while retaining it for complex ones, optimizing both efficiency and accuracy.

Core Problem

Large reasoning models like DeepSeek-R1 apply lengthy chain-of-thought processes to every query, including simple ones where such overhead is unnecessary and degrades user experience.

Why it matters:

- Thinking processes substantially increase inference overhead and latency, creating bottlenecks for real-time applications.

- Simple queries (e.g., those solvable by standard LLMs) receive excessively detailed, redundant responses when forced through reasoning models.

- Current efficiency methods (length penalties, merging) still force thinking on all instances rather than deciding *whether* to think.

Concrete Example:

For a simple math problem like '2+2', a reasoning model might generate a long trace verifying the properties of addition before answering '4'. AdaptThink detects this simplicity and outputs '4' immediately, saving tokens.

Key Novelty

Difficulty-Adaptive Thinking Mode Selection via RL

- Teaches the model to switch between 'Thinking' (long CoT) and 'NoThinking' (direct answer) based on problem difficulty.

- Uses a constrained optimization objective that penalizes 'Thinking' unless it provides a significant accuracy gain over the reference model.

- Introduces importance sampling during training to mix Thinking and NoThinking trajectories, overcoming the 'cold start' problem where models initially always think.

Architecture

Pseudocode for the AdaptThink RL algorithm, detailing the importance sampling and gradient update steps.

Evaluation Highlights

- Reduces average response length of DeepSeek-R1-Distill-Qwen-1.5B by 53% on three math datasets while improving accuracy by 2.4%.

- On GSM8K, reduces average response length by 50.9% while improving accuracy by 4.1% compared to the base model.

- On MATH500, reduces average response length by 63.5% while improving accuracy by 1.4%.

Breakthrough Assessment

8/10

Effective, practical solution to the efficiency bottleneck of reasoning models. The 'NoThinking' simplification and adaptive switching mechanism show strong empirical gains in both speed and accuracy.