📊 Experiments & Results

Evaluation Setup

Zero-shot evaluation on logic puzzles and math benchmarks after RL training

Benchmarks:

- Knights and Knaves (K&K) (Logic Puzzles) [New]

- AIME 2021-2024 (Math Competition)

- AMC 2022-2023 (Math Competition)

Metrics:

- Accuracy

- Pass@1

- Memorization Score (Local Inconsistency-based)

- Statistical methodology: Not explicitly reported in the paper
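
The metrics listed above can be made concrete with a short sketch. It assumes Pass@1 is estimated as the fraction of single-sample attempts that are correct, and that the memorization score takes a local-inconsistency form, Acc × (1 − CR), where CR is the share of originally solved puzzles that are still solved after a small perturbation. That formula and every name in the code (`pass_at_1`, `memorization_score`, the boolean inputs) are assumptions for illustration, not definitions confirmed by this summary.

```python
from typing import Sequence


def pass_at_1(correct: Sequence[bool]) -> float:
    """Fraction of single-sample attempts that are correct."""
    if not correct:
        raise ValueError("need at least one attempt")
    return sum(correct) / len(correct)


def memorization_score(original: Sequence[bool], perturbed: Sequence[bool]) -> float:
    """Assumed local-inconsistency score: Acc * (1 - CR).

    original[i]  -- True if puzzle i was solved correctly
    perturbed[i] -- True if a locally perturbed variant of puzzle i was solved
    """
    if not original or len(original) != len(perturbed):
        raise ValueError("inputs must be non-empty and of equal length")
    acc = sum(original) / len(original)
    # Consistency is measured only over puzzles that were solved originally.
    kept = [p for o, p in zip(original, perturbed) if o]
    if not kept:
        return 0.0
    consistency = sum(kept) / len(kept)
    return acc * (1.0 - consistency)


if __name__ == "__main__":
    original = [True, True, True, False]
    memorizer = [False, False, True, False]  # breaks under perturbation
    reasoner = [True, True, True, False]     # holds under perturbation
    print(memorization_score(original, memorizer))
    print(memorization_score(original, reasoner))
```

Under this assumed definition, a model with high accuracy that fails on perturbed variants scores high, while a model whose correct answers survive perturbation scores near zero.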

Key Results

| Benchmark | Metric | Baseline | This Paper | Δ |
|---|---|---|---|---|
| Logic Puzzles Training | Training Speed (relative) | 1.0 | 2.38 | +1.38 |
| Logic Puzzles Training | Response Length (Tokens) | 500 | 2000 | +1500 |
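
The Δ column in the table reports absolute differences. As a minimal sketch, the snippet below recomputes those differences from the Baseline and This Paper columns and also shows the corresponding relative change, which is a different quantity. The helper names `absolute_delta` and `relative_change_pct` are hypothetical and used only for illustration.

```python
def absolute_delta(baseline: float, value: float) -> float:
    """Absolute difference, as used in the table's delta column."""
    return value - baseline


def relative_change_pct(baseline: float, value: float) -> float:
    """Change relative to the baseline, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (value - baseline) / baseline * 100.0


# Values copied from the table rows.
rows = [
    ("Training Speed (relative)", 1.0, 2.38),
    ("Response Length (Tokens)", 500, 2000),
]

if __name__ == "__main__":
    for metric, baseline, value in rows:
        delta = round(absolute_delta(baseline, value), 2)
        rel = round(relative_change_pct(baseline, value), 1)
        print(f"{metric}: delta={delta:+}, relative={rel:+}%")
```

Keeping the two quantities separate avoids reading an absolute Δ as a percentage gain or the reverse.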

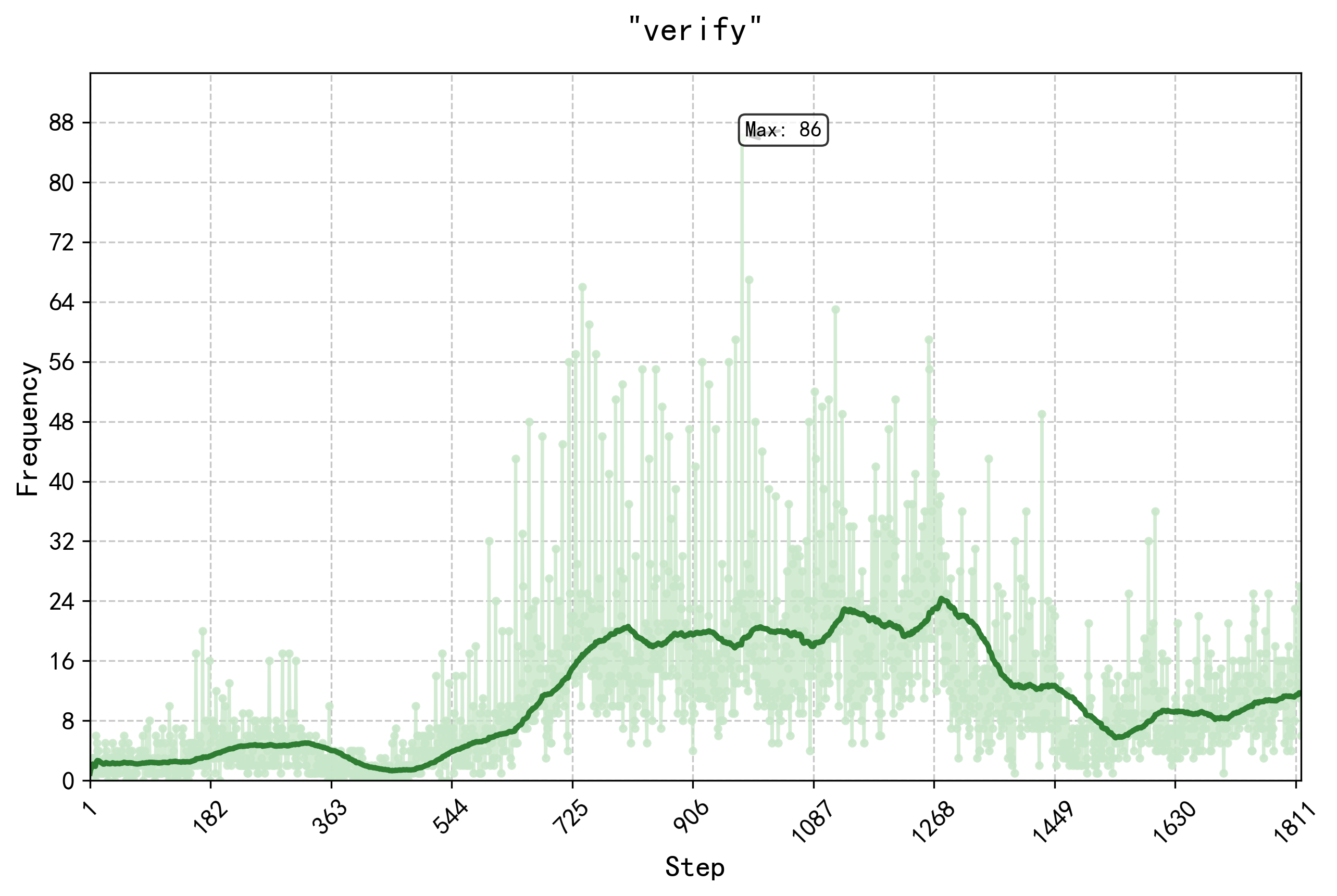

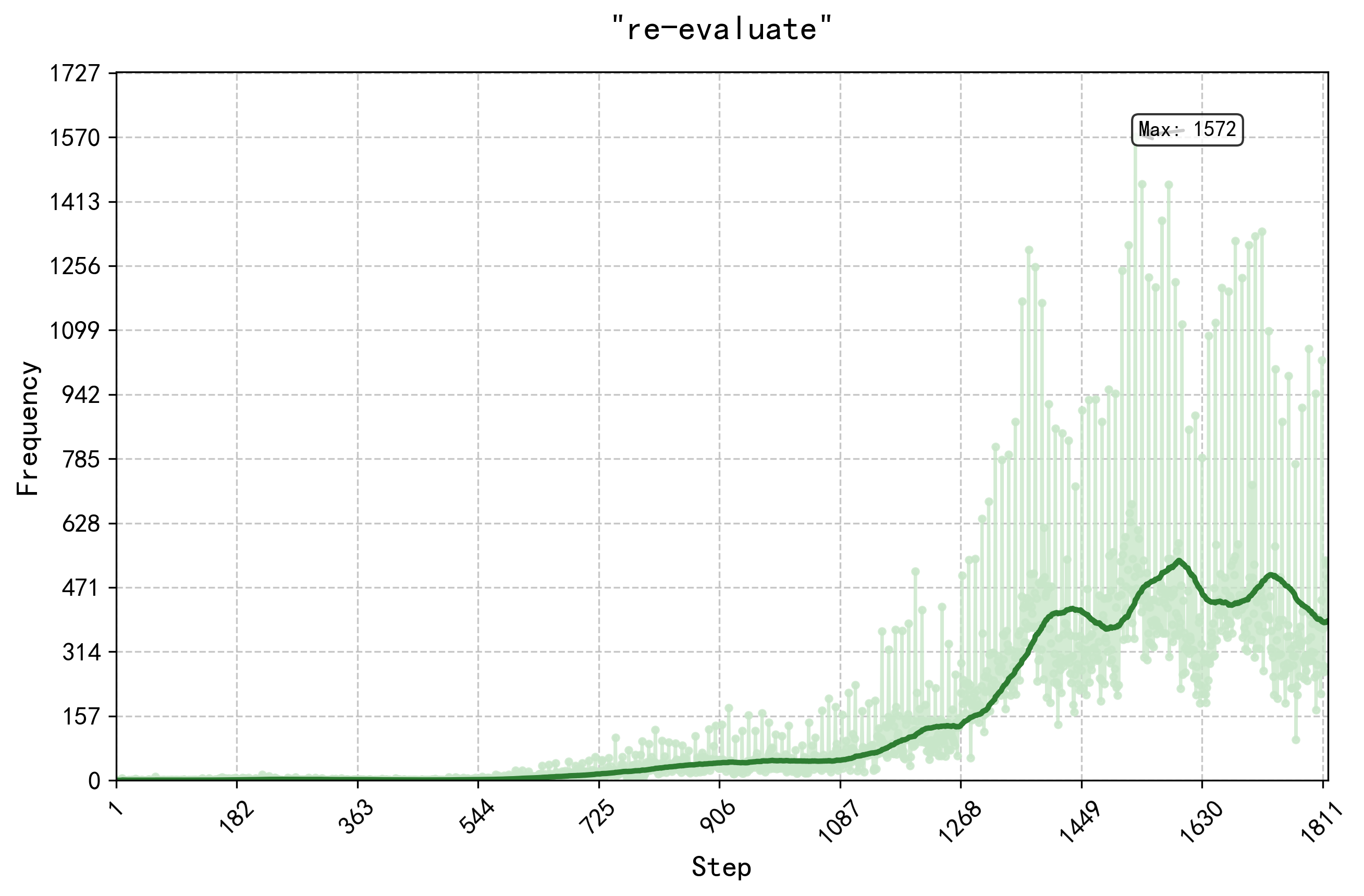

Experiment Figures

Generalization performance on Super OOD benchmarks (AIME/AMC)

Test accuracy vs. Memorization Score for SFT vs. RL

Main Takeaways

- RL training on synthetic logic puzzles transfers to math benchmarks (+125% on AIME, +38% on AMC, relative to the baseline), suggesting the model learns abstract reasoning schemata rather than puzzle-specific patterns

- SFT leads to high memorization (performance drops on perturbed questions), whereas RL yields robust generalization (performance holds on perturbed questions)

- Curriculum learning (ordering puzzles by difficulty) provides a slight advantage over random shuffling, but the difference is small compared to the overall gain from RL

- Language mixing in the thought process was observed to hurt reasoning performance, suggesting a need for language consistency penalties
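
The curriculum comparison in the takeaways can be sketched as two ways of ordering the same training set. This is an illustrative sketch only: the `Puzzle` type, the use of the number of characters in a puzzle as the difficulty proxy, and the function names are assumptions, not the paper's implementation.

```python
import random
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class Puzzle:
    puzzle_id: str
    num_characters: int  # assumed difficulty proxy (hypothetical field)


def curriculum_order(puzzles: Sequence[Puzzle]) -> List[Puzzle]:
    """Easy-to-hard ordering; sorted() is stable, so ties keep input order."""
    return sorted(puzzles, key=lambda p: p.num_characters)


def shuffled_order(puzzles: Sequence[Puzzle], seed: int = 0) -> List[Puzzle]:
    """Random ordering with a fixed seed for reproducibility."""
    rng = random.Random(seed)
    out = list(puzzles)
    rng.shuffle(out)
    return out


if __name__ == "__main__":
    data = [Puzzle("a", 5), Puzzle("b", 3), Puzzle("c", 7), Puzzle("d", 4)]
    print([p.puzzle_id for p in curriculum_order(data)])
    print([p.puzzle_id for p in shuffled_order(data)])
```

Both orderings present the same puzzles, so any difference in outcome comes from the order alone, which is the comparison the takeaway describes.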