📝 Paper Summary

Neural Theorem Proving

Formal Mathematics

Reasoning

DeepSeek-Prover-V2 unifies informal mathematical reasoning and formal verification by training a model to decompose complex theorems into subgoals using synthetic cold-start data and reinforcement learning.

Core Problem

LLMs excel at informal natural language reasoning but lack the syntactic rigor required for formal verification systems like Lean, often relying on heuristics that fail strict logical checks.

Why it matters:

- Formal verification systems permit no ambiguity or implicit assumptions, making direct translation from informal LLM reasoning difficult

- Existing formal reasoning training signals are sparse because most proof attempts fail and provide no reward

- Bridging the gap between high-level human-like proof sketches and low-level formal tactics is a longstanding challenge in neural theorem proving

Concrete Example:

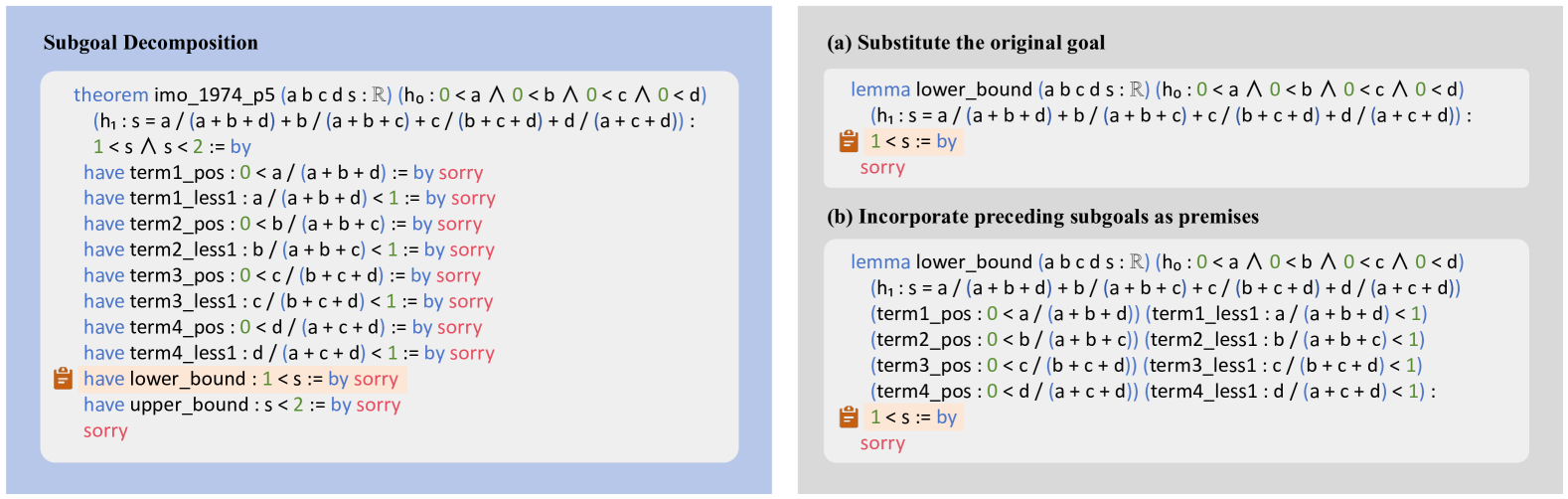

A general-purpose LLM might correctly outline a proof for an algebra problem in English but fail to generate valid Lean code because it misses specific syntax or intermediate lemmas. DeepSeek-Prover-V2 prompts the model to generate 'have' statements with 'sorry' (subgoals), which are then recursively solved, ensuring the high-level plan translates to valid code.

Key Novelty

Subgoal-based Recursive Proving with Cold-Start RL

- Synthesize 'cold-start' training data by prompting a strong model (DeepSeek-V3) to decompose proofs into Lean subgoals, then recursively solving those subgoals with a smaller prover

- Train a single model to perform both high-level informal reasoning (Chain-of-Thought) and low-level formal tactic generation

- Apply Reinforcement Learning (GRPO) with a consistency reward that penalizes deviations from the planned subgoal structure during early training

Architecture

Conceptual flow of the subgoal decomposition and recursive proving strategy.

Evaluation Highlights

- 88.9% Pass ratio on MiniF2F-test (Pass@8192), a state-of-the-art result for neural theorem proving

- Solves 47 out of 658 problems on PutnamBench, a challenging collegiate-level mathematics benchmark

- Solves 6 out of 15 highly challenging AIME 2024-2025 problems in Lean 4 formal language

Breakthrough Assessment

9/10

Establishes a new SOTA on standard benchmarks (MiniF2F) by a significant margin and demonstrates capability on competition-level problems (AIME, Putnam) previously out of reach for formal provers.