📝 Paper Summary

Mathematical Reasoning

Data Efficiency in LLMs

LIMO demonstrates that strong mathematical reasoning can be elicited from foundation models using only 800 high-quality, long-chain examples, challenging the assumption that reasoning requires massive training datasets.

Core Problem

Current approaches for teaching reasoning to Large Language Models (LLMs) rely on massive datasets (tens to hundreds of thousands of examples), on the assumption that complex reasoning requires extensive supervision.

Why it matters:

- Training on massive datasets is computationally expensive and data-inefficient

- Large-scale Supervised Fine-Tuning (SFT) often leads to memorization rather than true generalization

- It is unclear whether models are actually learning to reason or just retrieving memorized solution patterns

Concrete Example:

When solving a complex American Invitational Mathematics Examination (AIME) problem, a standard model trained on 100k generic math pairs might apply a shallow heuristic and fail. LIMO, trained on just 800 examples, activates pre-trained knowledge to generate a long, self-verifying chain of thought (e.g., 'Let me check this intermediate step...') to reach the correct solution.

Key Novelty

Less-Is-More Reasoning (LIMO) Hypothesis

- Posits that sophisticated reasoning is not 'learned' from scratch but 'elicited' from the pre-trained knowledge base using minimal examples

- Identifies two elicitation factors: the model's latent knowledge and the quality of examples acting as 'cognitive templates' to trigger extended inference-time computation

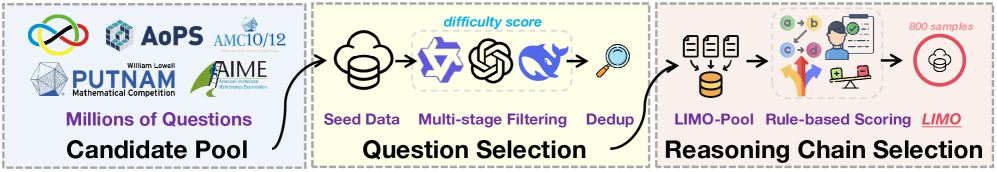

- Employs a strict multi-stage filtering pipeline to select only 800 highly difficult yet solvable problems with elaborate, self-verifying reasoning chains
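The filtering idea in the last bullet can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the `Candidate` fields, the pass-rate thresholds, the `VERIFY_MARKERS` phrases, and the scoring weights are all hypothetical stand-ins for "difficult yet solvable" and "elaborate, self-verifying".

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    problem: str
    solution_chain: str
    # Hypothetical difficulty signals: fraction of attempts solved by a
    # weak baseline model and by a strong reasoning model.
    weak_model_pass_rate: float
    strong_model_pass_rate: float


# Assumed phrases that signal self-verification in a reasoning chain.
VERIFY_MARKERS = ("let me check", "verify", "wait")


def is_difficult(c: Candidate, max_weak: float = 0.2) -> bool:
    """Stage 1: drop problems a weak model already solves reliably."""
    return c.weak_model_pass_rate <= max_weak


def is_solvable(c: Candidate, min_strong: float = 0.1) -> bool:
    """Stage 2: drop problems even a strong model never solves."""
    return c.strong_model_pass_rate >= min_strong


def chain_quality(c: Candidate) -> int:
    """Stage 3: crude score rewarding long chains with self-checks."""
    text = c.solution_chain.lower()
    marker_hits = sum(text.count(m) for m in VERIFY_MARKERS)
    return len(text.split()) + 50 * marker_hits


def curate(pool: list[Candidate], budget: int = 800) -> list[Candidate]:
    """Apply the filters, then keep the top `budget` chains by quality."""
    survivors = [c for c in pool if is_difficult(c) and is_solvable(c)]
    survivors.sort(key=chain_quality, reverse=True)
    return survivors[:budget]
```

Calling `curate(pool)` on a large candidate pool returns at most 800 items. The point of the sketch is the ordering of the stages: cheap difficulty filters first, then a ranking on chain quality within the survivors.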

Architecture

The LIMO Data Curation Pipeline

Evaluation Highlights

- Achieves 63.3% accuracy on AIME24, compared with 6.5% for previous fine-tuned models, while using only 1% of their training data

- Scores 95.6% on MATH500, outperforming the 59.2% baseline by 36.4 percentage points

- Demonstrates strong Out-Of-Distribution (OOD) generalization, achieving a 45.8% absolute improvement across diverse benchmarks compared to models trained on 100x more data
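The headline gains can be checked with a few lines of arithmetic. The scores below are the ones quoted in the highlights. The implied size of the prior training sets is an inference from the "1% of the training data" figure, not a number reported in this summary.

```python
# (LIMO score, previous fine-tuned baseline), both in percent,
# as quoted in the highlights above.
reported = {
    "AIME24": (63.3, 6.5),
    "MATH500": (95.6, 59.2),
}


def point_gain(new: float, old: float) -> float:
    """Absolute improvement in percentage points, rounded to one decimal."""
    return round(new - old, 1)


gains = {name: point_gain(new, old) for name, (new, old) in reported.items()}

# If 800 examples are 1% of the prior data, the prior data is 100x larger.
# This is an inferred figure, not one stated in the summary.
limo_examples = 800
implied_prior_examples = limo_examples * 100

for name, gain in gains.items():
    print(f"{name}: +{gain} percentage points")
print(f"Implied prior training set size: {implied_prior_examples} examples")
```

The gains are expressed in percentage points rather than relative percent, which matches the "absolute improvement" wording used for the OOD result.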

Breakthrough Assessment

9/10

Challenges fundamental assumptions about the data scale required for reasoning. Achieving SOTA-level performance with only 800 examples suggests a major paradigm shift from knowledge injection to capability elicitation.