📝 Paper Summary

Prompt Engineering

Chain-of-Thought (CoT)

CoT-Sep improves LLM reasoning by inserting simple text separators between few-shot exemplars in prompts, effectively chunking information to reduce cognitive overload.

Core Problem

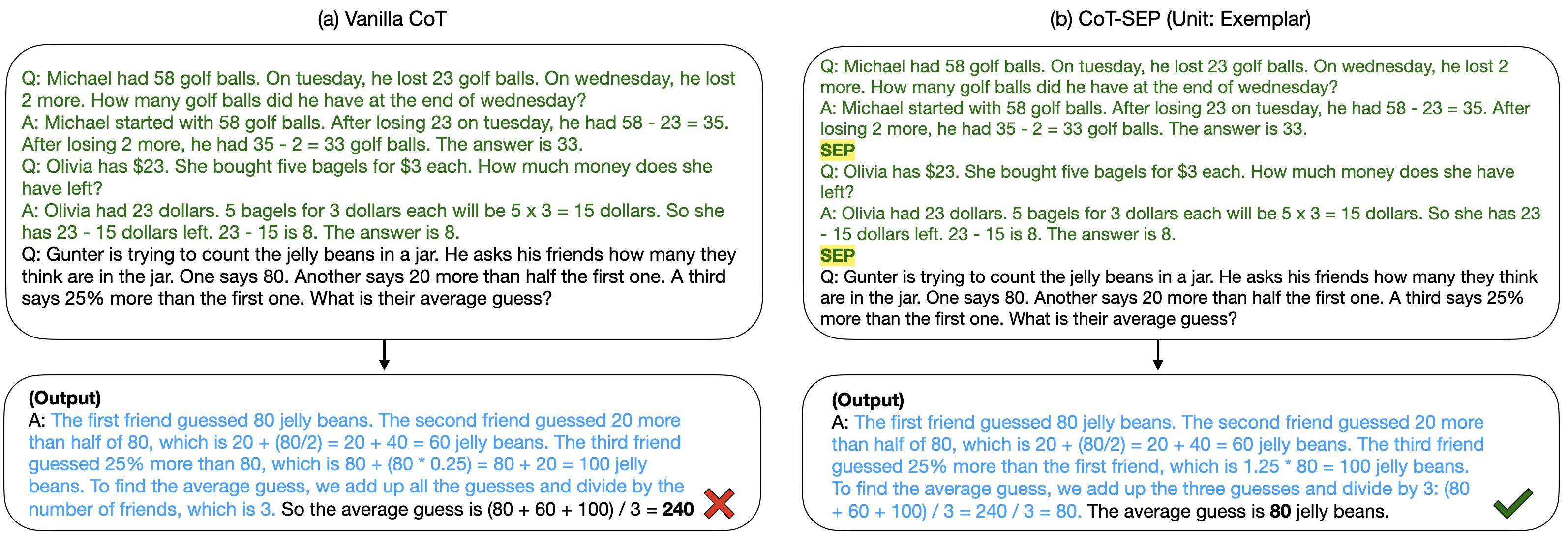

Standard Chain-of-Thought (CoT) prompts pack few-shot exemplars into dense blocks of text, causing 'cognitive overload' for LLMs and making it difficult to distinguish and process individual reasoning steps.

Why it matters:

- Densely formatted prompts limit the model's ability to analyze information efficiently, mimicking human limitations in processing unchunked data.

- Existing methods to improve CoT often require expensive iterative calls or complex external modules, whereas formatting changes are computationally free.

- Optimizing the structural presentation of prompts is a low-resource way to unlock latent reasoning capabilities in existing models.

Concrete Example:

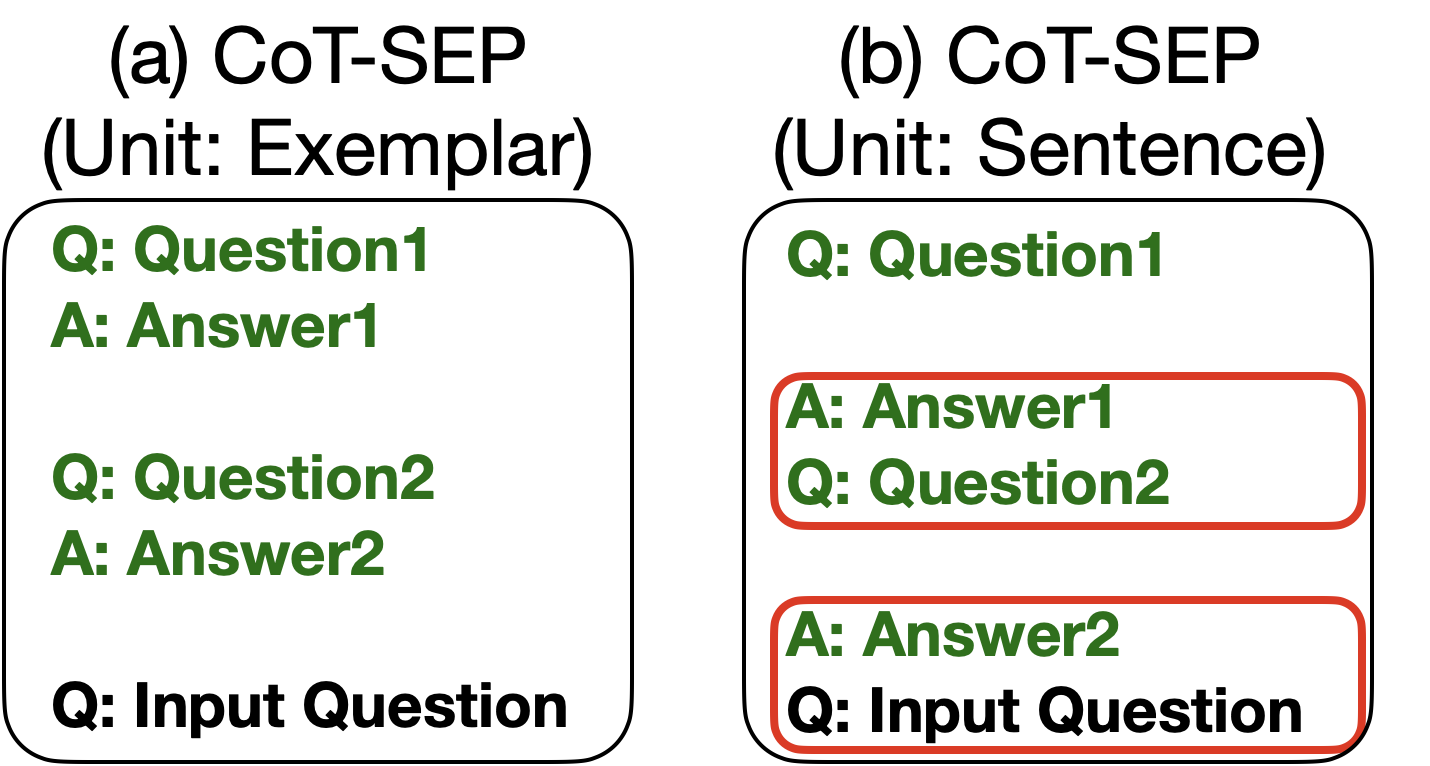

In a standard CoT prompt, the answer to Question 1 runs immediately into Question 2 without a visual break. This can confuse the model (e.g., mixing the previous answer with the next question), whereas CoT-Sep inserts '###' or '\n\n\n' to clearly demarcate where one example ends and the next begins.

Key Novelty

CoT-Sep (Separated Chain-of-Thought)

- Strategically inserts text separators (like newlines, hashes, or HTML tags) at the end of each few-shot exemplar in the prompt.

- Leverages the psychological concept of 'chunking' to help LLMs segment information into manageable portions, enhancing comprehension and reasoning accuracy.

Architecture

Conceptual comparison between Vanilla CoT (densely structured) and CoT-Sep (structured with separators).

Evaluation Highlights

- +5.1% accuracy improvement on GSM8K (math reasoning) using GPT-4-Turbo with TripleSkip separators compared to vanilla CoT.

- +2.8% accuracy improvement on AQuA (complex math) using GPT-3.5-Turbo with TripleSkip separators.

- Consistently outperforms vanilla CoT across LLaMA-2-7B, GPT-3.5, and GPT-4, particularly on more challenging datasets like AQuA.

Breakthrough Assessment

4/10

A simple but effective prompting heuristic. While not a fundamental architectural shift, it offers significant performance gains (up to 5%) with zero computational overhead, highlighting the importance of prompt formatting.