📝 Paper Summary

Prompt Engineering

Automated Reasoning

Chain-of-Thought (CoT)

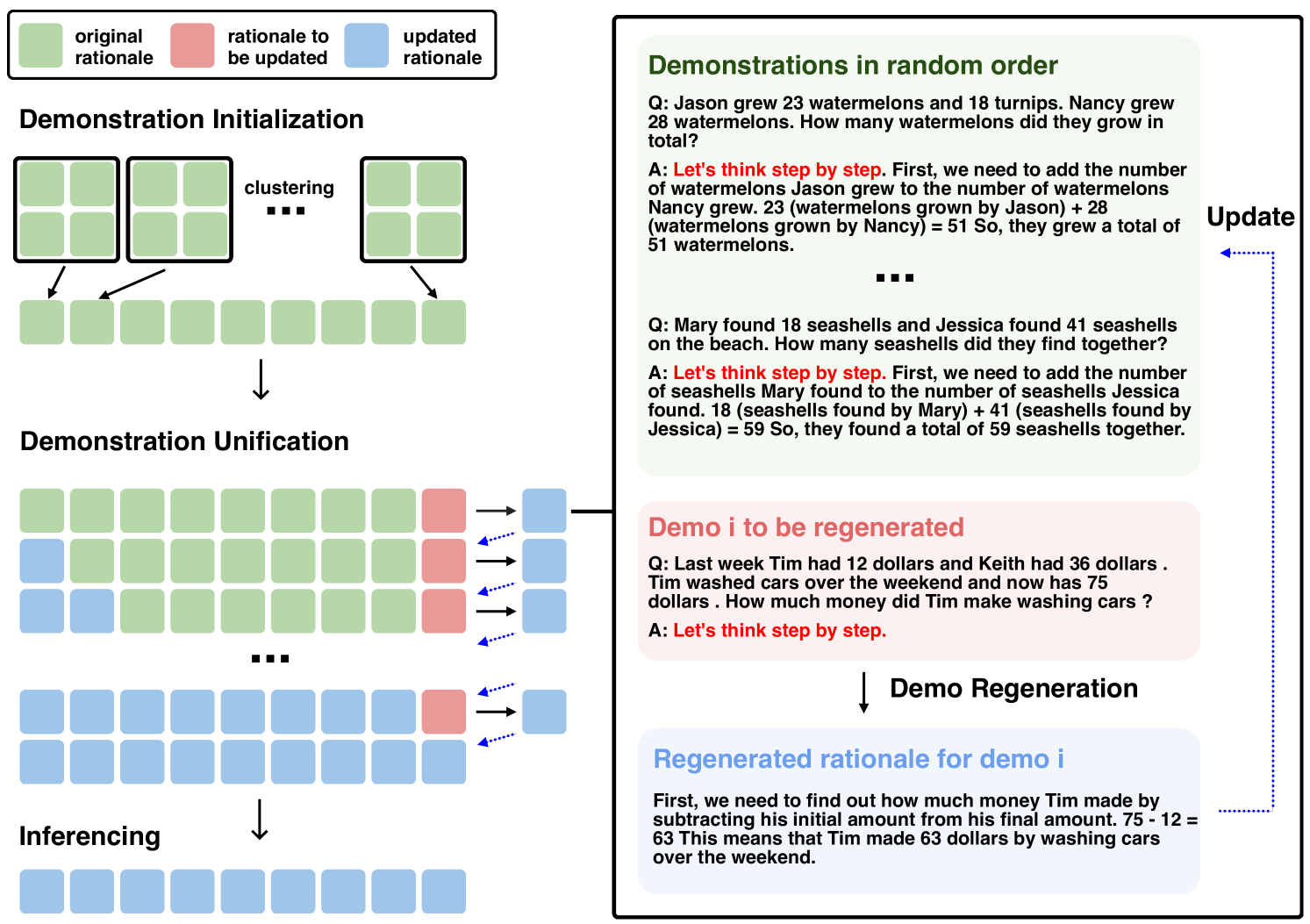

ECHO improves automated chain-of-thought prompting by iteratively refining a diverse set of self-generated demonstrations using one another as context to converge on a unified, high-quality reasoning pattern.

Core Problem

Automated Chain-of-Thought (Auto-CoT) methods generate diverse demonstrations to avoid misleading the model, but this diversity often introduces inconsistent, irrelevant, or incorrect reasoning patterns.

Why it matters:

- Manual creation of few-shot demonstrations is labor-intensive and expensive across different domains

- Existing automated methods face a trade-off: retrieving similar examples risks 'misleading by similarity', while Auto-CoT's diversity-based sampling can select demonstrations that are too dissimilar to help

- Cognitive Load Theory suggests that inconsistent or varied solution patterns increase processing difficulty for models, hampering learning

Concrete Example:

In Auto-CoT, if a cluster of math problems is solved using varied methods (some algebraic, some arithmetic, some incorrect), the model struggles to generalize. ECHO unifies these by rewriting them into a consistent format, like transforming a set of disparate math solutions into a standard step-by-step algebraic format.

Key Novelty

Self-Harmonized Chain of Thought (ECHO)

- Iterative Unification: Instead of using raw zero-shot generated rationales, ECHO repeatedly regenerates each rationale using the other sampled rationales as few-shot examples.

- Cognitive Load Reduction: Because each rationale is regenerated with the others as context, the set converges toward a single, consistent reasoning pattern (a form of style transfer), which is easier for the model to follow during inference.

- Oversampling & Compression: Clusters more questions than needed (oversampling) and distills their diverse patterns into a refined set, acting as information compression.
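
The iterative unification idea above can be sketched as a short loop. This is a minimal illustration and not the authors' implementation: `unify`, `build_prompt`, the `generate` callable (a stand-in for an LLM call), the "Let's think step by step." trigger, the random visiting order, and the default of three iterations are all assumptions made for the example.

```python
import random
from typing import Callable, List, Tuple

Demo = Tuple[str, str]  # (question, rationale)


def build_prompt(context: List[Demo], question: str) -> str:
    """Format the other demonstrations as few-shot examples, then append the target question."""
    shots = "\n\n".join(f"Q: {q}\nA: {r}" for q, r in context)
    return f"{shots}\n\nQ: {question}\nA: Let's think step by step."


def unify(
    demos: List[Demo],
    generate: Callable[[str], str],  # hypothetical LLM wrapper: prompt -> rationale
    iterations: int = 3,             # assumed value, not taken from the paper
    seed: int = 0,
) -> List[Demo]:
    """Repeatedly regenerate each rationale using all other demonstrations as context."""
    rng = random.Random(seed)
    demos = list(demos)  # work on a copy so the caller's list is untouched
    for _ in range(iterations):
        order = list(range(len(demos)))
        rng.shuffle(order)  # assumed: visit demonstrations in random order
        for i in order:
            question, _ = demos[i]
            context = [d for j, d in enumerate(demos) if j != i]
            # The old rationale is discarded; the new one is conditioned on the
            # current rationales of every other demonstration.
            demos[i] = (question, generate(build_prompt(context, question)))
    return demos
```

Because each regenerated rationale immediately becomes context for the next one, any stylistic pattern the model favors can propagate through the set, which is the convergence effect the bullets describe.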

Architecture

The three-step pipeline of ECHO: Question Clustering, Demonstration Sampling, and Demonstration Unification.
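
As a rough illustration of the first two steps, the sketch below picks one representative question per cluster and attaches a zero-shot rationale to it. It assumes the clustering has already been done: `embeddings` and `labels` are hypothetical inputs (for example, sentence vectors and k-means assignments), `zero_shot` is a stand-in for an LLM call, and choosing the question nearest the cluster centroid is an assumption in the spirit of Auto-CoT rather than a confirmed detail of ECHO.

```python
import math
from collections import defaultdict
from typing import Callable, Dict, List, Sequence, Tuple

Vector = Sequence[float]


def centroid(vectors: List[Vector]) -> List[float]:
    """Component-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def sample_demonstrations(
    questions: List[str],
    embeddings: List[Vector],         # hypothetical: one vector per question
    labels: List[int],                # hypothetical: cluster id per question
    zero_shot: Callable[[str], str],  # hypothetical LLM wrapper: question -> rationale
) -> List[Tuple[str, str]]:
    """Pick the question closest to each cluster centroid and generate its rationale."""
    clusters: Dict[int, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        clusters[label].append(idx)

    demos = []
    for label in sorted(clusters):
        members = clusters[label]
        center = centroid([embeddings[i] for i in members])
        # Assumed selection rule: nearest-to-centroid member represents the cluster.
        best = min(members, key=lambda i: math.dist(embeddings[i], center))
        demos.append((questions[best], zero_shot(questions[best])))
    return demos
```

The resulting list of (question, rationale) pairs is the raw demonstration set that the unification step would then refine.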

Evaluation Highlights

- Outperforms Auto-CoT by an average of 2.8% across 10 reasoning datasets (arithmetic, commonsense, symbolic)

- Achieves 83.3% on GSM8K (GPT-3.5-Turbo), surpassing Auto-CoT's 81.6%

- Substantially improves symbolic reasoning: +12.0% on Coin Flip compared to Auto-CoT

Breakthrough Assessment

7/10

A clever, effective refinement of Auto-CoT that addresses the 'quality vs. diversity' trade-off via iterative self-correction. Although the method is an incremental improvement over Auto-CoT, its consistency argument is well grounded and empirically validated.