📝 Paper Summary

Prompt Engineering

Chain-of-Thought Reasoning

In-Context Learning

Contrastive Chain-of-Thought enhances LLM reasoning by including both valid and automatically generated invalid reasoning demonstrations in the prompt, teaching models to avoid common logic errors.

Core Problem

Conventional Chain-of-Thought (CoT) provides only correct examples, failing to inform models about potential mistakes; surprisingly, models are often robust to invalid CoT, suggesting they may ignore the reasoning process entirely.

Why it matters:

- Mistakes in intermediate reasoning steps can compound, leading to incorrect final answers and hallucinations

- Prior work shows LLMs pay little attention to the validity of reasoning chains, undermining the trustworthiness of the generated explanations

- Standard prompts do not explicitly guide the model on what logic faults to avoid, missing the human-like learning process of learning from negative examples

Concrete Example:

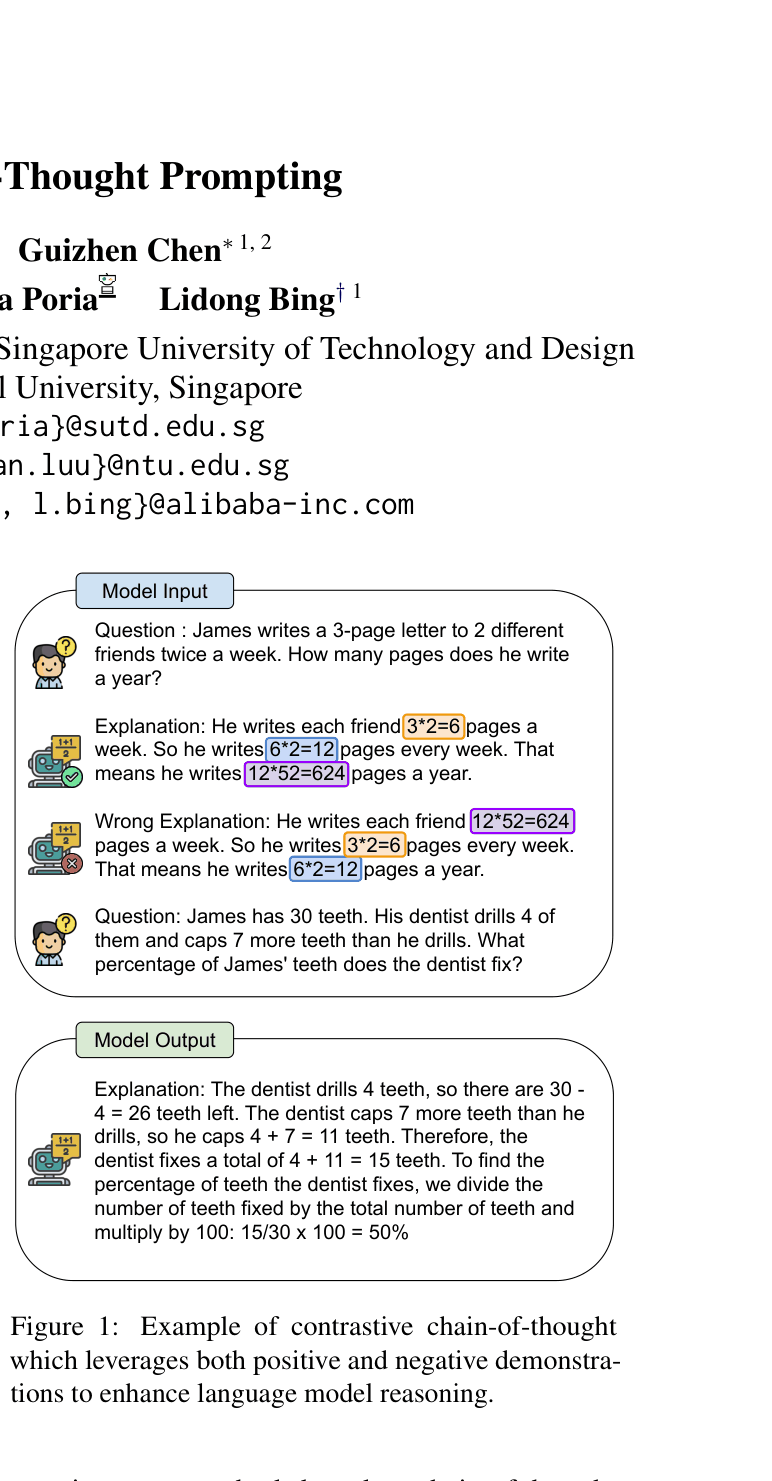

In a math problem about dentist visits, a standard CoT prompt might only show the correct subtraction. A model might then hallucinate a different number. Contrastive CoT explicitly shows a 'Wrong Explanation' where the subtraction is done incorrectly (e.g., subtracting from the wrong total), teaching the model to distinguish correct from incorrect operations.

Key Novelty

Contrastive Chain-of-Thought (Contrastive CoT)

- Augments few-shot prompts with 'contrastive' pairs: a correct reasoning chain followed by an incorrect one for the same question

- Introduces an automatic method to generate negative demonstrations by extracting entities (bridging objects) from valid chains and shuffling them to create incoherent reasoning

Architecture

A comparison of the prompt structure for Contrastive Chain-of-Thought versus conventional methods

Evaluation Highlights

- +16.0% accuracy improvement on Bamboogle (factual QA) using GPT-3.5-Turbo compared to conventional Chain-of-Thought

- +9.8% accuracy improvement on GSM8K (arithmetic reasoning) using GPT-3.5-Turbo compared to conventional Chain-of-Thought

- When combined with Self-Consistency, gains increase further (e.g., +17.6% on Bamboogle over CoT-SC)

Breakthrough Assessment

7/10

Simple yet highly effective prompting strategy that generalizes well across tasks. The automatic generation of negative examples makes it practical and scalable without manual annotation.