📝 Paper Summary

Chain of Thought (CoT) Reasoning

Latent Space Planning

Supervised Fine-Tuning

iCLP enables LLMs to reason more accurately by first generating compact, discrete latent plans (learned via a vector-quantized autoencoder) that guide subsequent natural language thought generation.

Core Problem

Explicit textual plans for LLM reasoning are often prone to hallucinations, lack generalization across diverse tasks, and are difficult to generate accurately.

Why it matters:

- Current methods relying on explicit plans (like ReAct) struggle because specific textual instructions often fail to generalize to new problems.

- Generating detailed explicit plans introduces errors and hallucinations that derail the subsequent reasoning process.

- Human cognition uses subconscious, implicit patterns rather than always verbalizing explicit steps, a mechanism current LLMs lack.

Concrete Example:

When solving a math problem, an explicit planner might generate a rigid text step like 'Use the Pythagorean theorem' which could be slightly off-context or hallucinated. In contrast, iCLP generates a latent token sequence representing a high-level abstract strategy (e.g., 'geometric decomposition') that flexibly guides the reasoning without committing to brittle text.

Key Novelty

Implicit Cognition Latent Planning (iCLP)

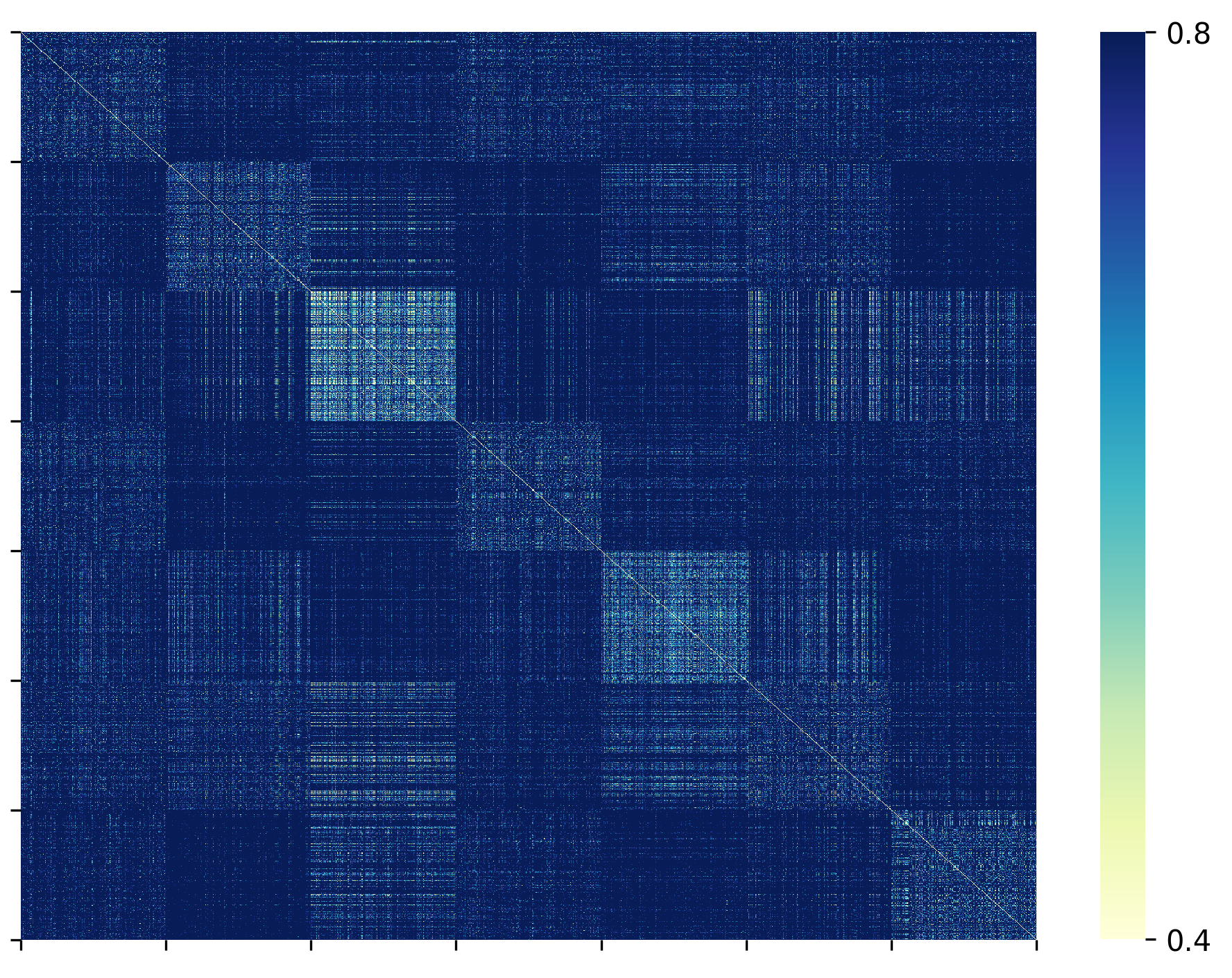

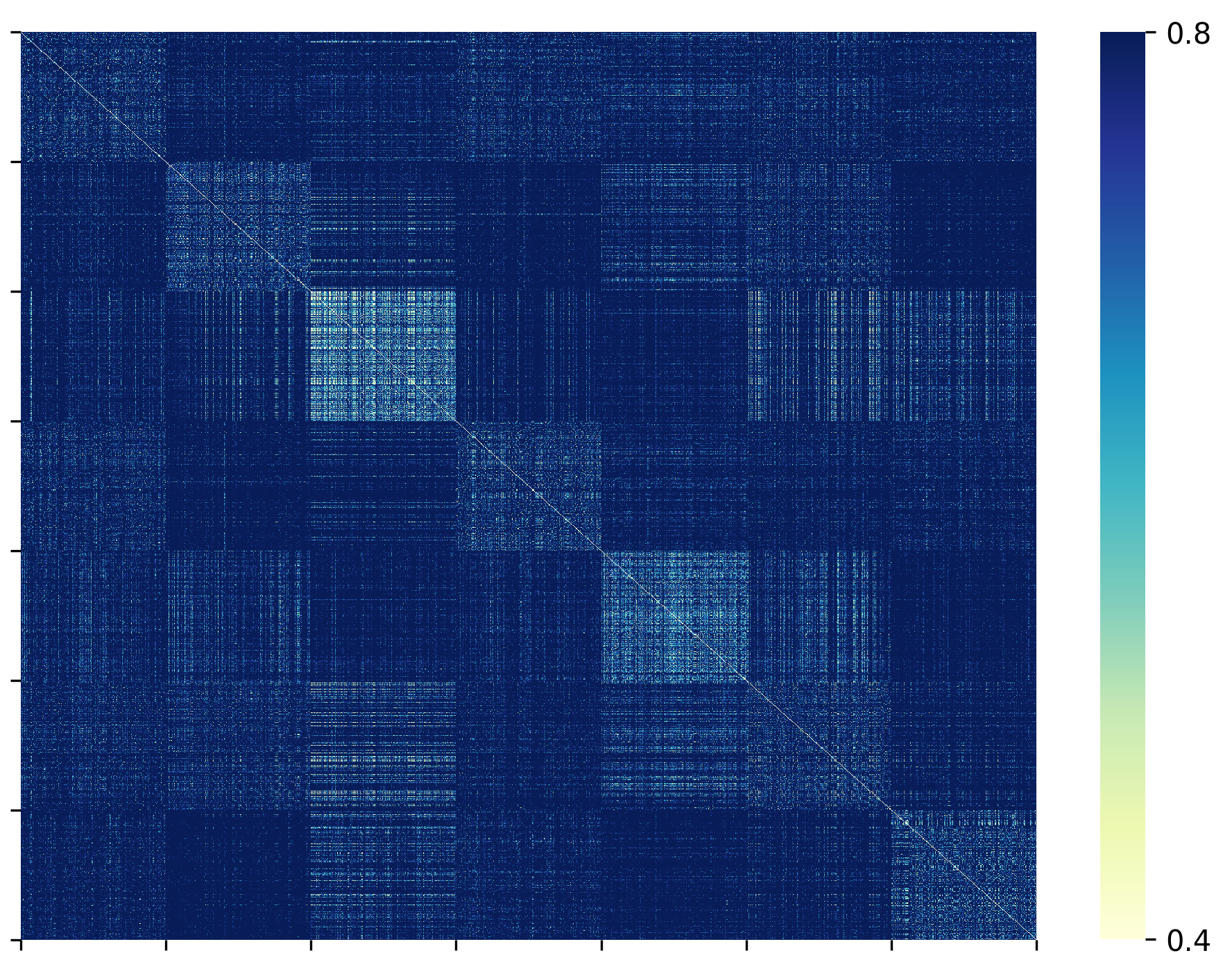

- Distills explicit plans from correct reasoning traces, then compresses them into discrete latent codes using a vector-quantized autoencoder (VQ-VAE).

- Treats planning as a 'subconscious' process: the LLM predicts these latent plan tokens first, which then condition the generation of the explicit chain-of-thought.

- Decouples planning (latent space) from reasoning (language space), allowing the model to learn generalizable, compact reasoning patterns.
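The quantization step at the heart of a vector-quantized autoencoder can be illustrated with a nearest-neighbor lookup: each continuous plan embedding is replaced by the index of its closest codebook vector, and those indices serve as the discrete latent plan codes. The following is a minimal sketch, not the paper's implementation; the codebook values, the two-dimensional embeddings, and the function names `squared_distance` and `quantize` are all assumptions made for illustration.

```python
from typing import List, Sequence


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(vectors: Sequence[Sequence[float]],
             codebook: Sequence[Sequence[float]]) -> List[int]:
    """Map each continuous vector to the index of its nearest codebook entry."""
    return [
        min(range(len(codebook)), key=lambda k: squared_distance(v, codebook[k]))
        for v in vectors
    ]


# Hypothetical toy codebook with three entries (a learned one would be larger).
codebook = [[0.0, 0.0], [1.0, 1.0], [-1.0, 1.0]]

# Hypothetical encoder outputs for one distilled plan (illustrative values only).
plan_embeddings = [[0.9, 1.2], [-0.8, 0.7], [0.1, -0.1]]

plan_codes = quantize(plan_embeddings, codebook)
print(plan_codes)  # [1, 2, 0]
```

In a trained model the codebook is learned jointly with the encoder and decoder; this sketch covers only the lookup that turns continuous vectors into discrete codes.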

Architecture

The complete iCLP pipeline: (1) Explicit Plan Distillation from CoT, (2) Latent Plan Space Learning via VQ-VAE, and (3) Fine-tuning the LLM with Latent Plans.
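Stage (3) of the pipeline can be pictured as building supervised targets in which the latent plan tokens come before the chain-of-thought, so the model learns to predict the plan first and then reason conditioned on it. The sketch below is an assumption about how such an example might be assembled: the `<plan_k>` token naming, the newline separator, and the helper names `plan_tokens` and `build_sft_example` are hypothetical and not taken from the paper.

```python
from typing import Dict, List


def plan_tokens(codes: List[int]) -> List[str]:
    """Render discrete latent codes as special tokens (hypothetical naming)."""
    return [f"<plan_{c}>" for c in codes]


def build_sft_example(question: str, codes: List[int], cot: str) -> Dict[str, str]:
    """Place the latent plan tokens ahead of the chain-of-thought in the target."""
    target = "".join(plan_tokens(codes)) + "\n" + cot
    return {"prompt": question, "target": target}


# Illustrative inputs only; the codes would come from the learned latent plan space.
example = build_sft_example(
    question="What is 3 + 4?",
    codes=[1, 2, 0],
    cot="3 + 4 = 7. The answer is 7.",
)
print(example["target"])
```

In practice the plan tokens would need to be added to the tokenizer vocabulary so that each code maps to a single token; that step is omitted here.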

Evaluation Highlights

- Achieves performance competitive with GRPO (a reinforcement learning method) on MATH and CodeAlpaca using only supervised fine-tuning on small models such as Qwen2.5-7B.

- +10% average accuracy improvement over base models on out-of-domain datasets (AIME 2024, MATH-500) via cross-dataset generalization.

- Reduces token cost by 10% on average compared to zero-shot CoT prompting while improving accuracy.

Breakthrough Assessment

8/10

Strong conceptual novelty in moving planning to a discrete latent space while keeping reasoning explicit. The approach demonstrably improves generalization and efficiency without complex RL, offering a potent alternative to purely text-based planning.