📝 Paper Summary

Synthetic Data Generation

Reasoning

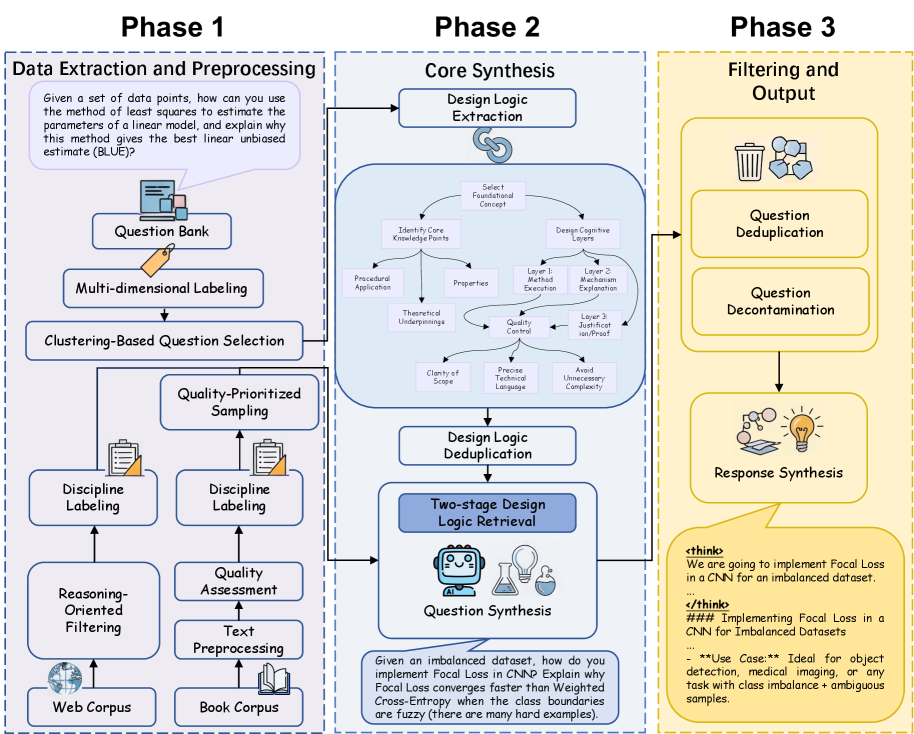

DESIGNER synthesizes complex multidisciplinary reasoning questions by reverse-engineering 'Design Logic' from expert exams and applying these structured templates to new raw texts.

Core Problem

Existing synthetic data methods struggle to generate complex, multi-step reasoning questions across diverse disciplines, often defaulting to simple factual recall or being limited by seed question pools.

Why it matters:

- LLMs lag behind human experts in university-level, discipline-specific reasoning due to scarce high-quality training data beyond math and code

- Document-centric synthesis methods (using raw text) ensure coverage but lack control over difficulty and reasoning depth

- Query-centric methods (rewriting seeds) are limited by the bias and coverage of the initial seed pool

Concrete Example:

When generating questions from a history textbook, standard methods might ask 'When did the war start?' (factual recall). A human expert, by contrast, would design a question that requires analyzing causes and effects. Without explicit guidance, LLMs do not produce such complex question structures from raw text on their own.

Key Novelty

Design-Logic-Guided Data Synthesis

- Reverse-engineers 'Design Logic' from difficult human exam questions: abstract meta-knowledge that describes, step by step, how a complex question is constructed (e.g., set objective → build context → add distractors)

- Decouples reasoning structure from content: applies these abstract Design Logics to entirely new source documents (books/web) to synthesize questions that retain the structural complexity of exams but cover new knowledge
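One way to picture this decoupling is a small data structure that stores a Design Logic as an ordered list of content-free steps and pairs it with any source passage to build a synthesis prompt. This is a minimal sketch, not the paper's implementation: the `DesignLogic` class, its field names, the `build_synthesis_prompt` helper, and the prompt wording are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class DesignLogic:
    """Hypothetical container for one reverse-engineered Design Logic."""
    name: str
    steps: List[str]  # ordered construction steps with no subject matter


def build_synthesis_prompt(logic: DesignLogic, source_text: str) -> str:
    """Pair an abstract Design Logic with a new source passage.

    The logic carries only structure; all subject matter comes from source_text.
    """
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(logic.steps, 1))
    return (
        "Write one exam-style question grounded in the passage below.\n"
        f"Follow this design logic ({logic.name}) step by step:\n"
        f"{numbered}\n\n"
        f"Passage:\n{source_text}"
    )


# Illustrative logic using the step names from the example in the text.
example_logic = DesignLogic(
    name="objective-context-distractors",
    steps=["Set objective", "Build context", "Add distractors"],
)

# Placeholder passage; any book or web text could be substituted.
prompt = build_synthesis_prompt(example_logic, "PLACEHOLDER PASSAGE TEXT")
print(prompt)
```

Because the steps never mention a topic, the same `example_logic` can be reused with passages from any discipline, which is the property the bullet above describes.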

Architecture

The overall DESIGNER pipeline: Data Curation → Design Logic Extraction → Question Synthesis.
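The three stages can be sketched as a chain of functions. Everything below is an assumption made for illustration: the function names, the `LLM` callable (a stand-in for whatever model is used), the length filter, the round-robin pairing, and the prompts are hypothetical, and the actual pipeline may include further steps that this summary does not describe.

```python
from typing import Callable, Dict, List

LLM = Callable[[str], str]  # hypothetical interface: prompt in, completion out


def curate(raw_docs: List[str], min_chars: int = 200) -> List[str]:
    """Stage 1 (Data Curation): keep passages long enough to support a question.

    The length threshold is an arbitrary placeholder, not a value from the paper.
    """
    return [d.strip() for d in raw_docs if len(d.strip()) >= min_chars]


def extract_design_logics(exam_questions: List[str], llm: LLM) -> List[str]:
    """Stage 2 (Design Logic Extraction): ask the model how each question was built."""
    return [
        llm(f"Describe, as abstract steps, how this exam question was designed:\n{q}")
        for q in exam_questions
    ]


def synthesize(docs: List[str], logics: List[str], llm: LLM) -> List[Dict[str, str]]:
    """Stage 3 (Question Synthesis): apply a design logic to each curated passage."""
    if not logics:
        return []
    out = []
    for i, doc in enumerate(docs):
        logic = logics[i % len(logics)]  # round-robin pairing, purely illustrative
        question = llm(f"Design logic:\n{logic}\n\nPassage:\n{doc}\n\nWrite a question.")
        out.append({"source": doc, "design_logic": logic, "question": question})
    return out


def run_pipeline(raw_docs: List[str], exam_questions: List[str], llm: LLM) -> List[Dict[str, str]]:
    docs = curate(raw_docs)
    logics = extract_design_logics(exam_questions, llm)
    return synthesize(docs, logics, llm)


# Stub model so the sketch runs without any external service.
def echo_llm(prompt: str) -> str:
    return f"<model output for {len(prompt)}-char prompt>"


results = run_pipeline(["x" * 300, "too short"], ["Sample exam question?"], echo_llm)
print(len(results), results[0]["question"])
```

Passing the model in as a plain callable keeps the stages testable with a stub and makes it easy to swap in a real client later.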

Evaluation Highlights

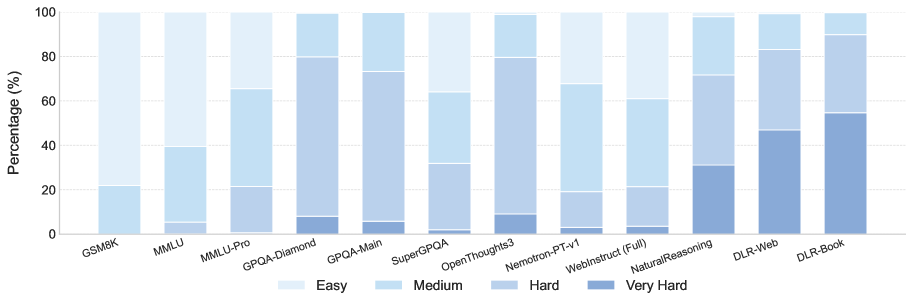

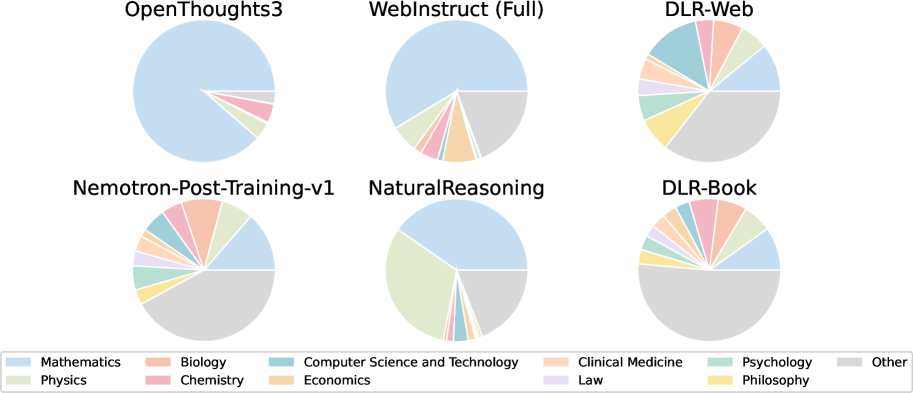

- Synthesized 4.7 million questions (DLR-Book and DLR-Web) across 75 disciplines, with a difficulty distribution substantially harder than that of baseline datasets (e.g., a much larger share of 'Very Hard' questions)

- Qwen3-7B-Instruct fine-tuned on DESIGNER data outperforms its official post-trained version on GPQA-Diamond (32.8 vs 29.8) and MMLU-Pro (48.4 vs 47.9)

- Llama-3.1-8B-Instruct fine-tuned on DESIGNER data achieves a +7.2 accuracy gain on MMLU-Pro compared to the base model, surpassing the official Instruct version

Breakthrough Assessment

8/10

Proposes a novel 'Design Logic' abstraction that effectively bridges the gap between scalable but shallow document-based generation and high-quality but scarce human exam data. Strong empirical results on difficult reasoning benchmarks.