📝 Paper Summary

Neurosymbolic AI

Program Synthesis

Abstract Reasoning

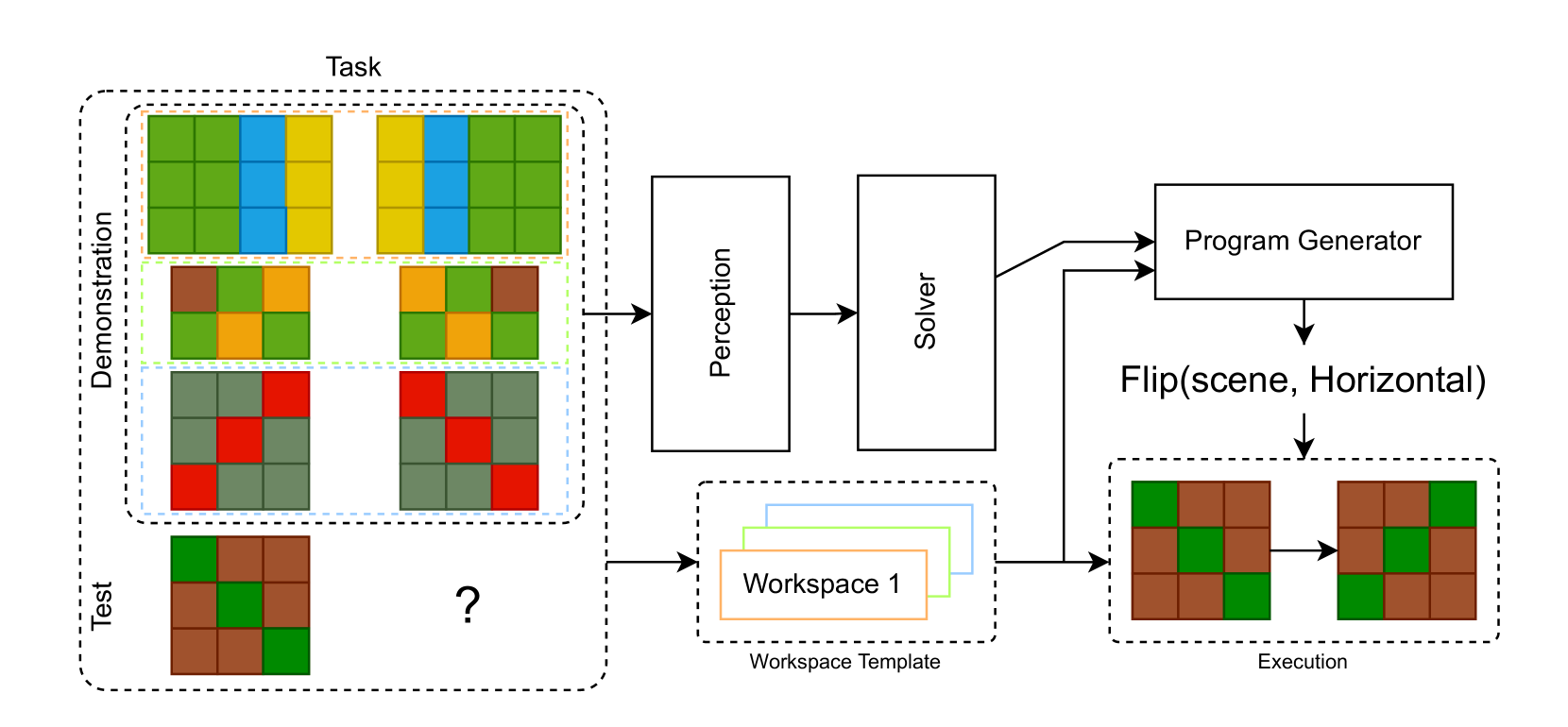

TransCoder tackles abstract reasoning tasks by training a neural network to synthesize programs in a domain-specific language (DSL). It improves itself by turning its own failed program attempts into new synthetic training tasks.

Core Problem

Abstract reasoning tasks such as those in ARC (the Abstraction and Reasoning Corpus) require inferring complex symbolic rules from a few visual examples. Pure neural networks struggle because training data is scarce, and purely symbolic methods struggle because perceiving the relevant structure in raw rasters is itself hard.

Why it matters:

- Current deep learning models struggle with reasoning by analogy and adapting to novel problems with very few examples (few-shot learning).

- The ARC benchmark is considered extremely hard; the best contest entry solved only ~20% of evaluation tasks, highlighting a major gap in AI's abstraction capabilities.

- Existing methods relying on brute-force search are computationally expensive and lack the learnable flexibility of neural approaches.

Concrete Example:

In an ARC task where objects must be counted and then the background color changed based on the count, a standard network might struggle to separate 'counting' from 'coloring'. TransCoder synthesizes a program combining `Count` and `Paint` operations explicitly.
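To make the example concrete, here is a minimal sketch of what such a program could look like as a small tree of operations. The `Count` and `Paint` names come from the summary above; everything else (their signatures, the `run` method, treating color 0 as background, counting 4-connected regions, and using the count directly as the new color) is an assumption for illustration, not the paper's actual DSL.

```python
from dataclasses import dataclass
from typing import List

Grid = List[List[int]]
BACKGROUND = 0  # assumption: color 0 is the background


@dataclass(frozen=True)
class Count:
    """Hypothetical primitive: number of 4-connected non-background regions."""

    def run(self, grid: Grid) -> int:
        seen = set()
        objects = 0
        for r, row in enumerate(grid):
            for c, value in enumerate(row):
                if value == BACKGROUND or (r, c) in seen:
                    continue
                # Found an unvisited object cell: flood-fill the whole object.
                objects += 1
                seen.add((r, c))
                stack = [(r, c)]
                while stack:
                    y, x = stack.pop()
                    for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                        inside = 0 <= ny < len(grid) and 0 <= nx < len(grid[ny])
                        if inside and grid[ny][nx] != BACKGROUND and (ny, nx) not in seen:
                            seen.add((ny, nx))
                            stack.append((ny, nx))
        return objects


@dataclass(frozen=True)
class Paint:
    """Hypothetical primitive: recolor the background using a sub-program's result."""

    color_from: Count

    def run(self, grid: Grid) -> Grid:
        color = self.color_from.run(grid)
        # Build a new grid so the input raster is left untouched.
        return [[color if v == BACKGROUND else v for v in row] for row in grid]


# The "program" is an explicit tree: Paint(background, color = Count(objects)).
program = Paint(color_from=Count())
grid = [
    [0, 5, 0, 0],
    [0, 5, 0, 7],
    [0, 0, 0, 0],
]
result = program.run(grid)
```

The point of the sketch is that counting and coloring live in separate, inspectable nodes, so the composition is explicit rather than entangled in network weights.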

Key Novelty

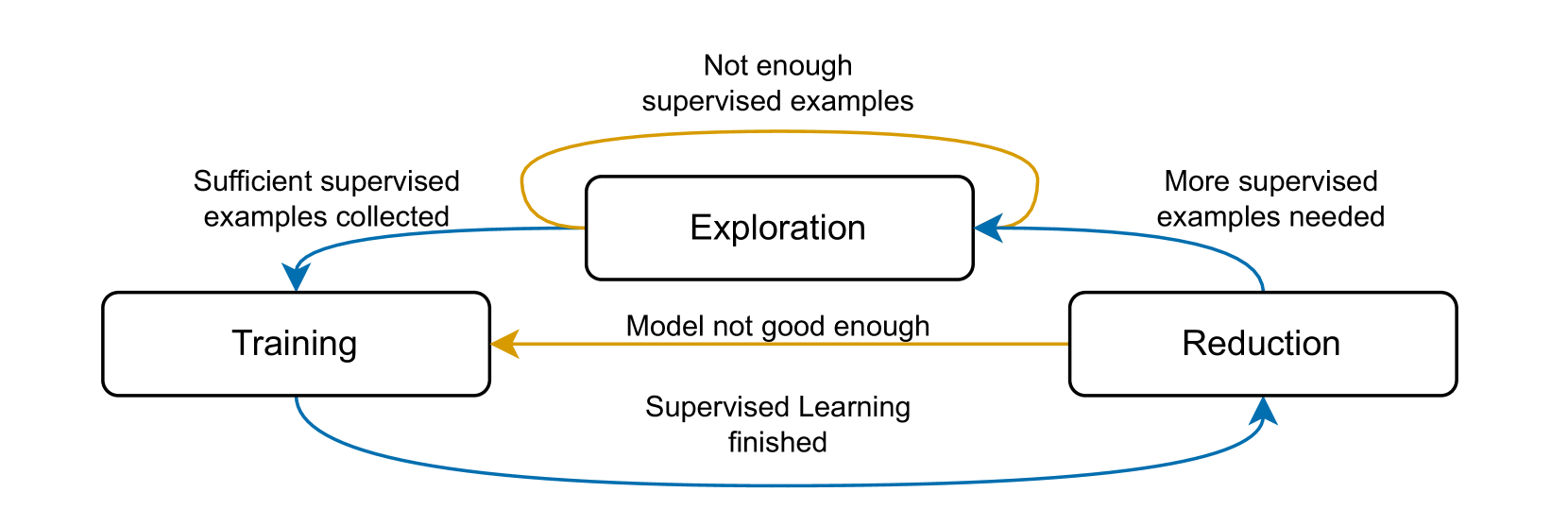

Neurosymbolic TransCoder with 'Learning from Mistakes'

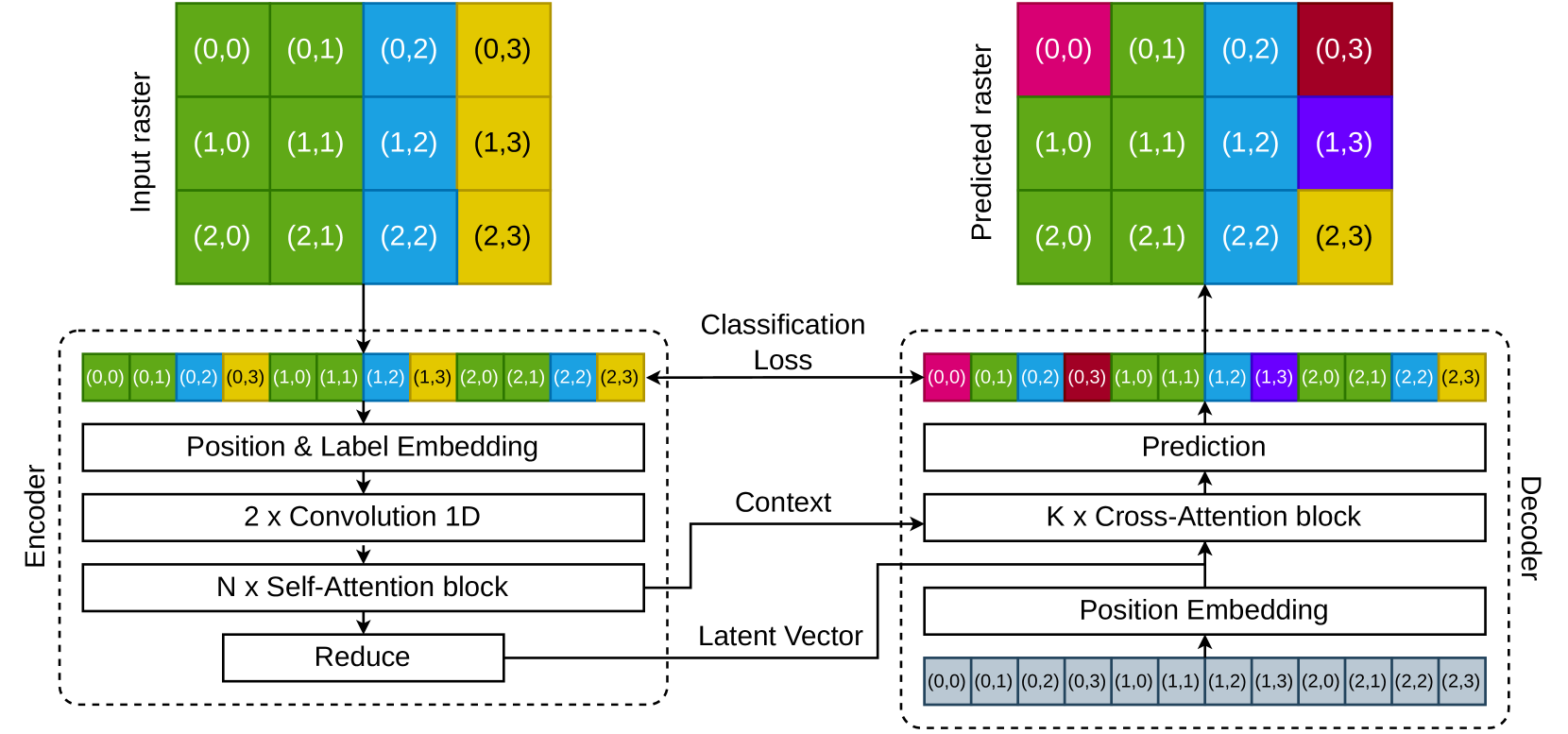

- Synthesizes explicit programs as abstract syntax trees (ASTs) rather than predicting outputs directly, using a neural 'Solver' to map visual tasks to program latents and a 'Generator' to produce code.

- Self-improves via a 'learning from mistakes' loop: when the model generates a program that fails a target task, it treats that failed program as the *correct* solution to a new, easier synthetic task created from the program's actual output.

- Uses a perception module that tags each pixel with its coordinates (positional embeddings), which lets it handle variable-sized rasters and extract symbolic primitives for the program generator.
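The 'learning from mistakes' idea in the list above can be sketched in a few lines. This is a simplified illustration under stated assumptions: programs are modeled as plain Python callables rather than ASTs, and `solves` and `relabel_failure` are hypothetical helper names, not functions from the paper.

```python
from typing import Callable, List, Optional, Tuple

Grid = List[List[int]]
Program = Callable[[Grid], Grid]   # assumption: a program maps a grid to a grid
Task = List[Tuple[Grid, Grid]]     # demonstration pairs (input, expected output)


def solves(program: Program, task: Task) -> bool:
    """True if the program reproduces every expected output of the task."""
    return all(program(x) == y for x, y in task)


def relabel_failure(program: Program, task: Task) -> Optional[Task]:
    """Turn a failed attempt into a synthetic task with a known solution.

    The new task keeps the original inputs but replaces each expected output
    with what the program actually produced, so the program is a correct
    solution to it by construction. Returns None if the program already
    solved the original task (nothing to relabel).
    """
    if solves(program, task):
        return None
    return [(x, program(x)) for x, _ in task]


# Target task: mirror each row left-to-right.
target_task: Task = [([[1, 2], [3, 4]], [[2, 1], [4, 3]])]


def flip_vertically(grid: Grid) -> Grid:
    """A wrong guess for the target task: reverses the row order instead."""
    return [list(row) for row in reversed(grid)]


synthetic_task = relabel_failure(flip_vertically, target_task)
```

Each pair of `synthetic_task` and the failed program can then be added to the training set as a supervised example, which is how a failed attempt still yields usable training signal.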

Architecture

The complete TransCoder architecture pipeline from input demonstrations to program execution.
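The front of this pipeline, the perception step that tags pixels with coordinates, can be pictured with a small sketch. The `PixelToken` type and `tag_pixels` function are hypothetical names, and the sketch stops at raw integer coordinates; how the paper turns them into learned or fixed positional embeddings is not described in this summary.

```python
from typing import List, NamedTuple

Grid = List[List[int]]


class PixelToken(NamedTuple):
    """One pixel together with its position in the raster."""
    color: int
    row: int
    col: int


def tag_pixels(raster: Grid) -> List[PixelToken]:
    """Flatten a raster of any size into a sequence of coordinate-tagged pixels."""
    return [
        PixelToken(color, r, c)
        for r, row in enumerate(raster)
        for c, color in enumerate(row)
    ]


# Rasters of different shapes become sequences of different lengths,
# but every token carries enough information to place it back on the grid.
wide = tag_pixels([[1, 2, 3]])
tall = tag_pixels([[4], [5]])
```

Because position travels with each pixel, a downstream encoder does not need a fixed input resolution, which is one plausible reading of how coordinate tagging helps with variable-sized rasters.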

Evaluation Highlights

- The pre-trained raster encoder reaches 99.98% per-pixel reconstruction accuracy, indicating that its compressed representation preserves almost all visual information.

- Generates tens of thousands of synthetic problems with known solutions to bootstrap training where data is scarce.

- Demonstrates a complete neurosymbolic pipeline that produces syntactically correct programs by construction.
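For readers unfamiliar with the reconstruction metric in the first highlight, the sketch below shows one common way to compute per-pixel accuracy. It assumes the metric is the fraction of matching pixels pooled over all rasters and that each reconstruction has the same shape as its original; the summary does not state the paper's exact definition, and `per_pixel_accuracy` is a hypothetical helper name.

```python
from typing import List, Tuple

Grid = List[List[int]]


def per_pixel_accuracy(pairs: List[Tuple[Grid, Grid]]) -> float:
    """Fraction of pixels whose reconstructed color equals the original color.

    Assumes every (original, reconstructed) pair has identical dimensions.
    """
    correct = 0
    total = 0
    for original, reconstructed in pairs:
        for row_o, row_r in zip(original, reconstructed):
            for a, b in zip(row_o, row_r):
                correct += int(a == b)
                total += 1
    return correct / total


pairs = [
    ([[1, 2], [3, 4]], [[1, 2], [3, 0]]),   # one of four pixels is wrong
    ([[5, 5, 5, 5]], [[5, 5, 5, 5]]),       # all four pixels are right
]
accuracy = per_pixel_accuracy(pairs)  # 7 correct out of 8 pixels
```

Under this definition, 99.98% corresponds to roughly 2 wrong pixels per 10,000. Note that a high per-pixel score does not by itself imply that every raster is reconstructed perfectly.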

Breakthrough Assessment

5/10

Proposes an interesting 'learning from mistakes' data augmentation strategy and a clean neurosymbolic architecture. However, the paper does not report final end-to-end results on the ARC public leaderboard, so it cannot be compared directly against the state of the art (SOTA).