📝 Paper Summary

Neurosymbolic AI

Deductive Reasoning

Autoformalization

The paper improves the reliability of smaller language models in logical reasoning by decomposing the translation process into predicate generation and formula construction, augmented by a verifier.

Core Problem

Small language models struggle to translate natural language into well-formatted First-Order Logic (FOL) due to cascading errors in greedy decoding and a lack of self-correction capabilities found in larger models.

Why it matters:

- Small models are more accessible and cost-effective than large proprietary models but currently lack the reliability needed for symbolic reasoning tasks

- Existing self-correction methods rely on interpreting complex solver feedback, a capability small models lack

- Single-step generation in small models often leads to repetitive loops or syntax errors that break external solvers

Concrete Example:

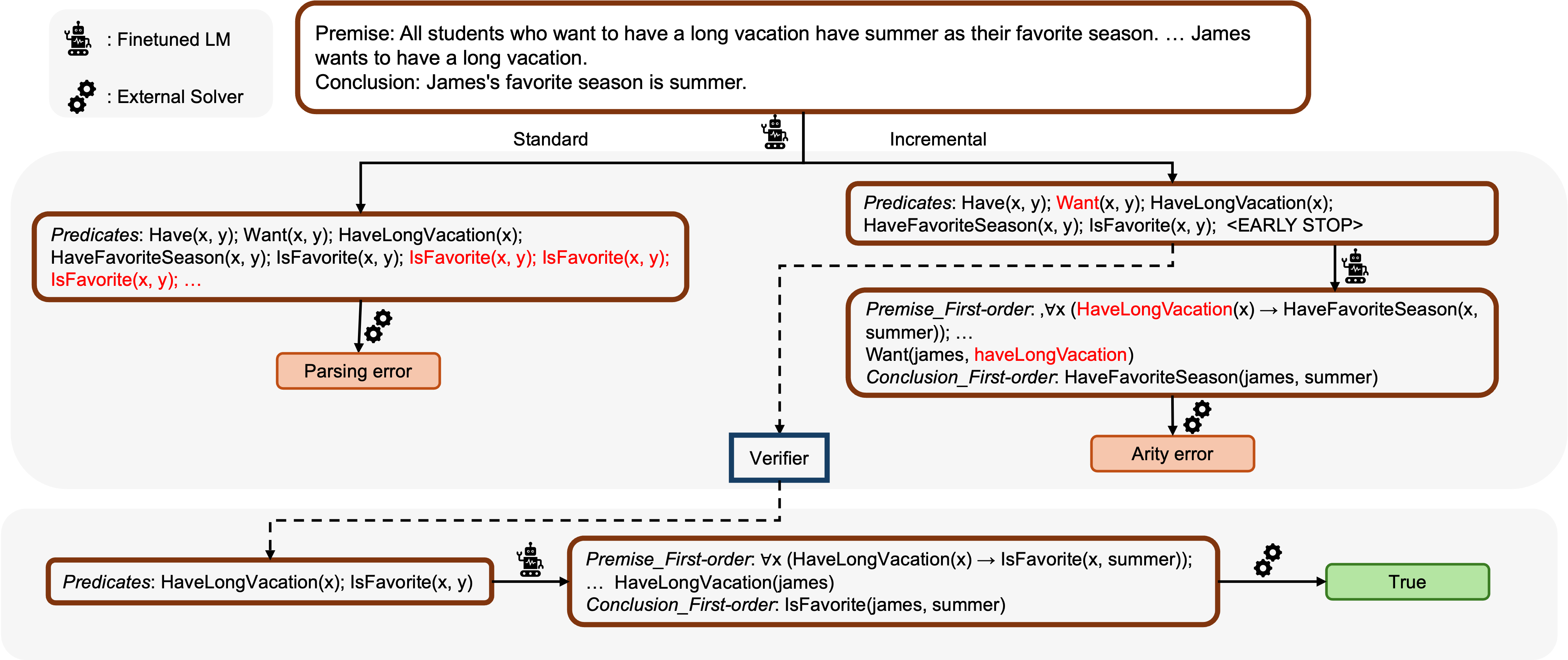

When translating a statement under standard inference, a model might get stuck in a repetitive loop generating 'IsFavorite(x, y)' indefinitely due to a single token-level error cascading through the generation, as shown in the paper's Figure 1.

Key Novelty

Incremental Inference with Predicate Verification

- Decomposes the NL-to-FOL translation into two distinct stages: first generating a list of predicates, then generating the full logic formulas

- Introduces an intermediate verification step that corrects predicate arity (number of arguments) before the final translation, preventing syntax errors from propagating

- Uses a tool-based synthetic data generation pipeline to create high-quality training data for smaller models, overcoming data scarcity

Architecture

Contrast between Standard Inference (prone to loops) and Incremental Inference

Breakthrough Assessment

6/10

A practical architectural improvement for neurosymbolic reasoning with small models. While not a fundamental paradigm shift, it addresses specific reliability bottlenecks (formatting, arity) that hinder the deployment of smaller LMs.