📝 Paper Summary

Neurosymbolic AI

LLM Reasoning

This paper reframes instruction-tuned LLMs as symbolic systems grounded in vector space and proposes an iterative learning algorithm that updates prompts via natural language critiques rather than updating model weights.

Core Problem

Neurosymbolic AI struggles to unify discrete symbolic knowledge with continuous vector representations (the symbol grounding problem), while LLMs often fail to maintain logical consistency and to adapt to new concepts outside their training distribution.

Why it matters:

- LLMs lack verifiable reasoning and struggle with tasks requiring strict logical adherence

- Traditional neurosymbolic approaches face mathematical challenges in bridging the gap between explicit symbols and implicit continuous vector spaces

- Current methods often require fine-tuning model weights, which is computationally expensive compared with adjusting the context at inference time

Concrete Example:

If a model incorrectly infers that a penguin can fly based on the rule 'birds fly', a traditional system might fail or require retraining. In the proposed system, a judge provides the text feedback 'Penguins cannot fly', which is injected into the context (prompt) to reshape the vector space alignment for subsequent attempts, correcting the error without weight updates.
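The context-injection step in this example can be sketched as plain prompt assembly. This is a minimal illustration, not the paper's implementation: the function name `build_prompt` and the prompt layout (rules, then corrections, then the question) are hypothetical assumptions.

```python
def build_prompt(rules: list[str], critiques: list[str], question: str) -> str:
    """Assemble a prompt in which judge critiques sit alongside the rules.

    Hypothetical layout: the summary does not specify a prompt format.
    """
    lines = ["Rules:"]
    lines += [f"- {rule}" for rule in rules]
    if critiques:
        # Critiques from earlier attempts are added as extra context;
        # no model weights are changed.
        lines.append("Corrections from previous attempts:")
        lines += [f"- {c}" for c in critiques]
    lines.append(f"Question: {question}")
    return "\n".join(lines)


first = build_prompt(["Birds fly."], [], "Can a penguin fly?")
second = build_prompt(["Birds fly."], ["Penguins cannot fly."], "Can a penguin fly?")
print(second)
```

The second prompt carries the correction "Penguins cannot fly." ahead of the question, which is the only thing that differs between the two attempts.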

Key Novelty

Model-Grounded Symbolic Learning Framework

- Reinterprets natural language words as symbols that are 'grounded' directly in the LLM's internal high-dimensional vector space (model grounding) rather than needing external referents

- Replaces gradient-based weight updates with an iterative 'symbolic learning' loop: generating outputs, receiving natural language critiques from a judge, and updating the prompt context to correct reasoning
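The loop described in the second bullet can be sketched as follows. This is a minimal sketch under stated assumptions: `generate` and `judge` are hypothetical stand-ins for an LLM call and a judge (which could itself be an LLM), and the stopping rule (stop when the judge returns no critique, or after `max_iters` rounds) is an assumption rather than a detail taken from the paper.

```python
from typing import Callable, Optional

# Hypothetical signatures: generate(prompt) -> answer;
# judge(question, answer) -> critique, or None if the answer is accepted.
Generator = Callable[[str], str]
Judge = Callable[[str, str], Optional[str]]


def symbolic_learning(question: str, base_context: str, generate: Generator,
                      judge: Judge, max_iters: int = 5) -> tuple[str, list[str]]:
    """Refine the prompt context with natural-language critiques; weights stay frozen."""
    critiques: list[str] = []
    answer = ""
    for _ in range(max_iters):
        context = "\n".join([base_context, *critiques])
        answer = generate(f"{context}\nQuestion: {question}")
        critique = judge(question, answer)
        if critique is None:  # the judge accepts the answer
            break
        critiques.append(critique)  # the "learning update" is a context edit
    return answer, critiques


# Toy stand-ins for the penguin example (not a real LLM or judge).
def toy_generate(prompt: str) -> str:
    return "No" if "Penguins cannot fly." in prompt else "Yes"


def toy_judge(question: str, answer: str) -> Optional[str]:
    return None if answer == "No" else "Penguins cannot fly."


answer, critiques = symbolic_learning("Can a penguin fly?", "Birds fly.",
                                      toy_generate, toy_judge)
print(answer, critiques)  # No ['Penguins cannot fly.']
```

In this sketch the only state that changes between iterations is the list of critiques, so the same frozen model is reused throughout; the trade-off is that the context grows with every rejected attempt.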

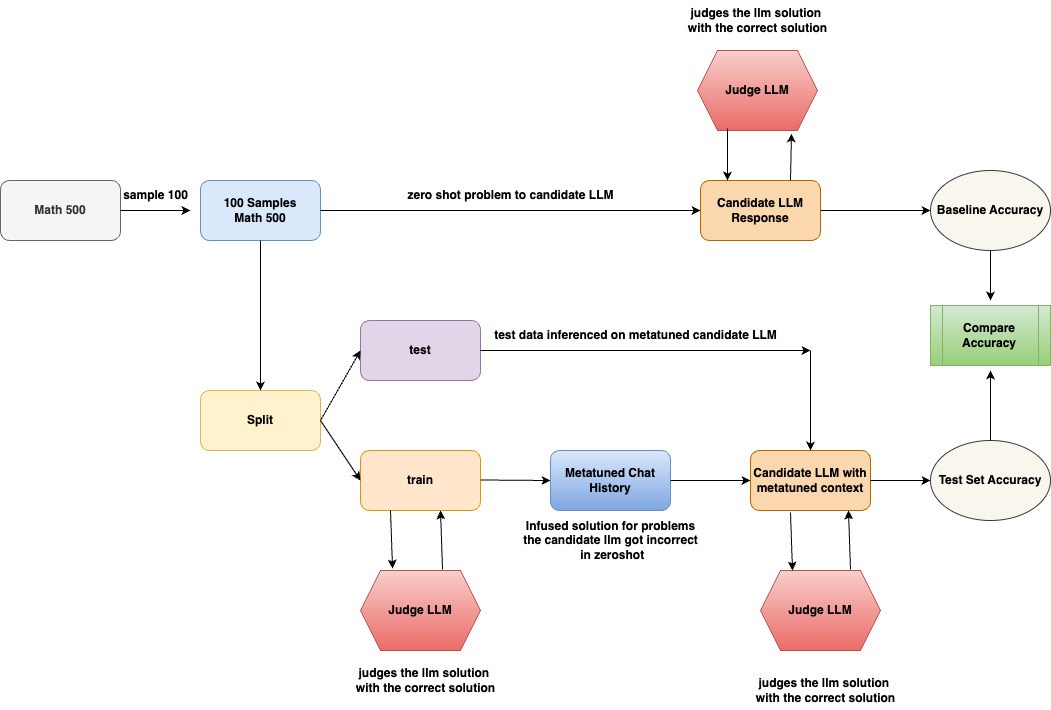

Architecture

The iterative learning loop for model-grounded symbolic systems

Breakthrough Assessment

5/10

Presents a strong theoretical reframing of LLMs as symbolic systems and a coherent algorithm for prompt-based learning, but the provided text lacks quantitative experimental results demonstrating its effectiveness.