📝 Paper Summary

Efficient Reasoning

Adaptive Inference

Early Exit

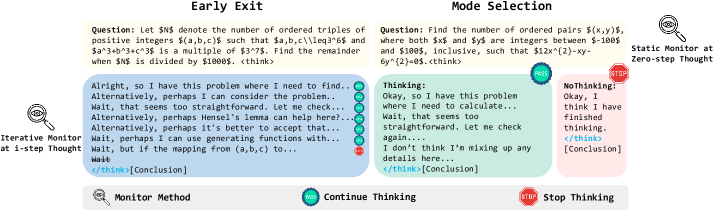

Mode Selection is formalized as a harder variant of Early Exit called 'Zero-Step Thinking', where models must decide whether to reason or answer directly based solely on the prompt without generating any initial thoughts.

Core Problem

Large Reasoning Models (LRMs) often overthink simple problems, wasting computation. Mode Selection methods must choose between Long-CoT and Short-CoT before reasoning begins, so they lack the trajectory information that dynamic Early Exit methods rely on.

Why it matters:

- Models like DeepSeek-R1 and OpenAI o1 incur high inference costs by engaging in long chain-of-thought processes even for trivial queries.

- Overthinking can degrade performance on simple tasks where extended reasoning introduces errors or hallucinations.

- Current adaptive methods often rely on iterative checks during generation (Early Exit). Deciding *before* generation starts (Mode Selection) is more efficient, but it is significantly harder because no reasoning trace is available to inform the decision.

Concrete Example:

When asked a simple math question, a reasoning model might generate a long chain of thought (<think>...</think>) before answering. Mode Selection aims to insert a 'fake thought' (<think>Okay, I think I have finished thinking.</think>) to force an immediate answer (NoThinking mode), but prompt-based classifiers often fail to correctly identify which questions are simple enough for this mode.
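The fake-thought mechanism can be sketched as plain string construction. This is a minimal illustration, not the paper's implementation: the function name `build_prompt`, the newline layout, and the absence of a chat template are all assumptions; only the `<think>` tags and the fake-thought text come from the example above.

```python
# Fake thought quoted in the example above.
FAKE_THOUGHT = "<think>Okay, I think I have finished thinking.</think>"


def build_prompt(question: str, skip_reasoning: bool) -> str:
    """Return the text the model would continue from.

    Hypothetical helper: a real model would also need its own chat
    template, which is omitted here.
    """
    if skip_reasoning:
        # NoThinking mode: the thought block is already closed, so the
        # model's next tokens are the answer itself.
        return f"{question}\n{FAKE_THOUGHT}\n"
    # Thinking mode: leave the thought block open so the model reasons first.
    return f"{question}\n<think>\n"


if __name__ == "__main__":
    print(build_prompt("What is 2 + 2?", skip_reasoning=True))
```

The hard part is not building the prompt but choosing `skip_reasoning` from the question alone, which is the Mode Selection decision described above.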

Key Novelty

Unified Framework for Mode Selection and Early Exit

- Formalizes Mode Selection as a specific case of Early Exit that happens at 'Step Zero' (Zero-Step Thinking), using pre-defined fake thoughts instead of generated reasoning traces.

- Investigates whether internal model states (confidence, entropy) can predict the need for reasoning *before* generation starts, effectively treating the input prompt as a sufficient signal for difficulty estimation.
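As a rough illustration of how confidence and entropy could drive a zero-step decision, the sketch below computes both from the logits of the first answer token and applies a threshold. It is an assumption-laden toy, not any of the benchmarked methods: the function names, the use of a single token's logits, and the `0.9` threshold are all hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def confidence_and_entropy(logits):
    """Return (max probability, Shannon entropy in nats) for one token."""
    probs = softmax(logits)
    confidence = max(probs)
    entropy = -sum(p * math.log(p) for p in probs if p > 0)
    return confidence, entropy


def choose_mode(logits, conf_threshold=0.9):
    """Hypothetical rule: skip reasoning only when the model is confident.

    `logits` stands in for the next-token logits obtained after the prompt
    plus a fake thought; the threshold value is an arbitrary placeholder.
    """
    confidence, _ = confidence_and_entropy(logits)
    return "NoThinking" if confidence >= conf_threshold else "Thinking"
```

A peaked distribution such as `[10.0, 0.0, 0.0]` would be routed to NoThinking under this rule, while a uniform one would fall back to full reasoning.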

Architecture

Contrast between standard Early Exit (dynamic) and Mode Selection (static/zero-step)

Evaluation Highlights

- Prompt-based methods like FlashThink fail completely at Zero-Step Thinking (0% NoThinking Ratio), unable to decide to skip reasoning without intermediate traces.

- Internal state methods (ProbeConf, DEER) perform better: DEER achieves superior performance on the 32B model, reducing token usage while preserving accuracy.

- PromptConf reduces token usage by 36.0% on AIME25 while improving accuracy by 6.7 points for the 1.5B model, though its effectiveness degrades on larger models.
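The two efficiency metrics quoted above can be made concrete with a short sketch. The definitions here are assumptions, since the summary does not spell them out: NoThinking Ratio is taken to be the fraction of questions routed to NoThinking mode, and token reduction is taken relative to an always-thinking baseline. The function names are hypothetical.

```python
def nothinking_ratio(modes):
    """Fraction of questions answered in NoThinking mode (assumed definition)."""
    return sum(m == "NoThinking" for m in modes) / len(modes)


def token_reduction(baseline_tokens, adaptive_tokens):
    """Relative token savings versus an always-thinking baseline (assumed definition).

    Both arguments are per-question token counts over the same questions.
    """
    return 1.0 - sum(adaptive_tokens) / sum(baseline_tokens)
```

Under these definitions, a method that never selects NoThinking has a ratio of 0 and, if nothing else changes, a token reduction of 0 as well.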

Breakthrough Assessment

4/10

Primarily an empirical study and formalization rather than a new method. It highlights the difficulty of Zero-Step Thinking and benchmarks existing methods, showing that current solutions are insufficient for this 'hard' version of early exit.