📊 Experiments & Results

Evaluation Setup

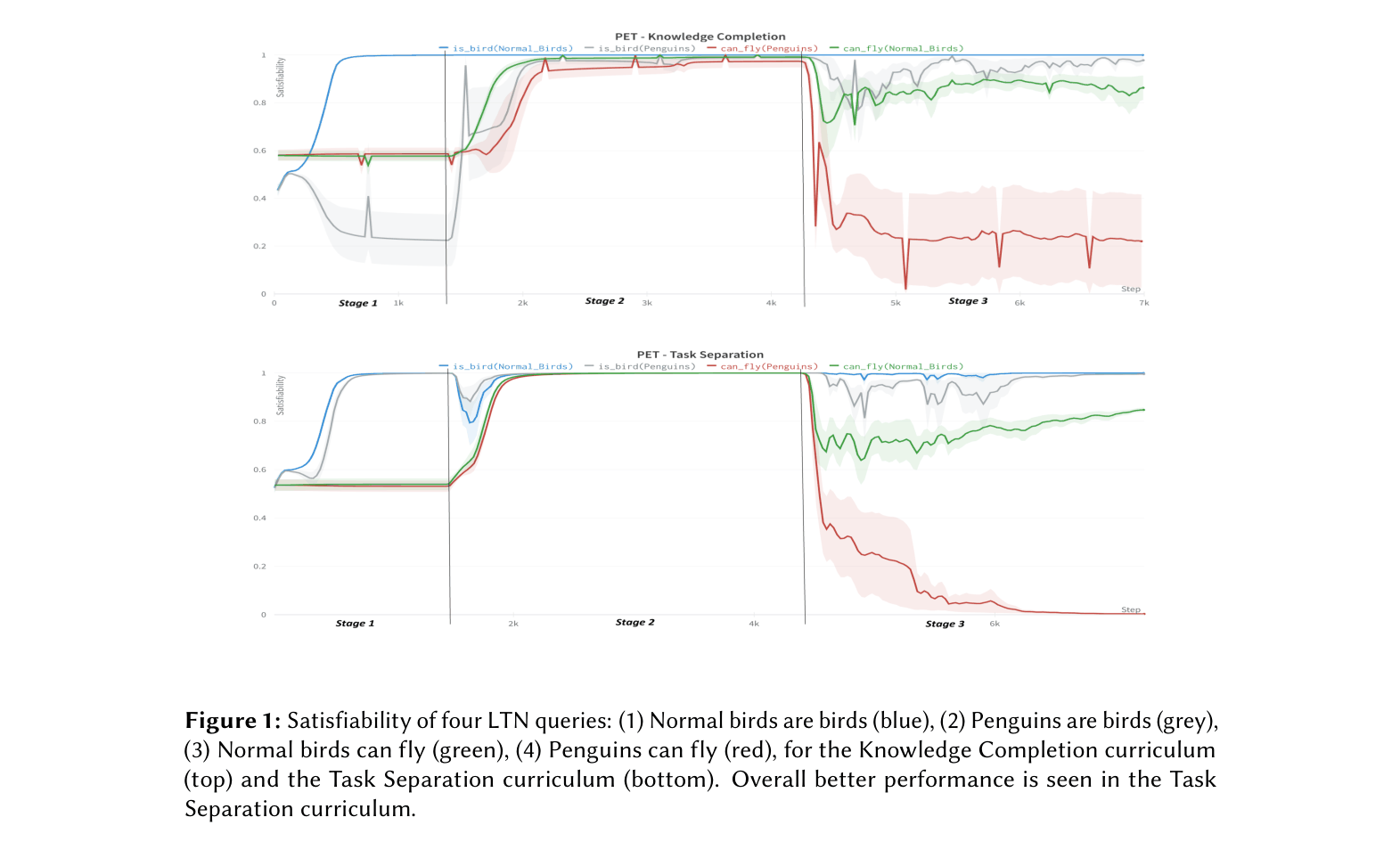

Satisfiability of specific logical queries after the training sequence.

Benchmarks:

- Penguin Exception Task (PET) (Non-monotonic reasoning / Logic puzzle)

- Smokers and Friends (Statistical Relational Learning)

- bAbI Task 1 (Reasoning QA)

Metrics:

- Satisfiability (truth value) of target rules

- Classification accuracy

- Statistical methodology: Not explicitly reported in the paper
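To make the satisfiability metric concrete, the sketch below computes the truth value of a rule of the form `Smokes(x) => Cancer(x)` over a small set of individuals. It assumes real-valued (fuzzy) truth values in [0, 1], the Reichenbach implication, and a mean aggregator for the universal quantifier. None of these choices is stated in this section, and the numbers are made up for illustration.

```python
from statistics import mean


def implies(a: float, b: float) -> float:
    """Reichenbach fuzzy implication: 1 - a + a*b (an assumed choice)."""
    return 1.0 - a + a * b


def rule_satisfiability(antecedent, consequent) -> float:
    """Mean truth value of antecedent(x) => consequent(x) over all individuals.

    The mean stands in for the universal quantifier (also an assumption).
    """
    return mean(implies(a, b) for a, b in zip(antecedent, consequent))


# Hypothetical predicate outputs for three individuals (not data from the paper).
smokes = [0.9, 0.1, 0.8]
cancer = [0.8, 0.2, 0.3]

sat = rule_satisfiability(smokes, cancer)
print(f"sat(Smokes(x) => Cancer(x)) = {sat:.3f}")
```

Under these assumptions, a value close to 1 means the rule holds for nearly every individual, while an individual with a confident antecedent and a weak consequent (the third one here) pulls the score down.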

Key Results

In the Penguin Exception Task, the goal is to correctly classify that Penguins do NOT fly (rule 4) while Normal Birds DO fly (rule 3), despite Penguins being Birds. Task Separation (TS) succeeds best.

| Benchmark | Metric | Baseline | This Paper | Δ |
|---|---|---|---|---|
| Penguin Exception Task (PET) | Satisfiability: not(can_fly(Penguins)) | 0.628 | 0.997 | +0.369 |
| Penguin Exception Task (PET) | Satisfiability: can_fly(Birds) | 0.962 | 0.995 | +0.033 |
| Penguin Exception Task (PET) | Satisfiability: is_bird(Penguins) | 0.618 | 0.999 | +0.381 |

Smokers and Friends task comparison shows Knowledge Completion (KC) curriculum improving satisfiability of key causal rules compared to Baseline.

| Benchmark | Metric | Baseline | This Paper | Δ |
|---|---|---|---|---|
| Smokers and Friends | Satisfiability: Smokes(x) => Cancer(x) | 0.715 | 0.978 | +0.263 |
| Smokers and Friends | Satisfiability: not(Smokes(x)) => not(Cancer(x)) | 0.917 | 0.991 | +0.074 |
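The Δ column is the This Paper score minus the Baseline score. A short check using only the values reported in the results table could look like this (variable names are illustrative):

```python
# (benchmark, query, baseline, this_paper) copied from the results table.
rows = [
    ("PET", "not(can_fly(Penguins))", 0.628, 0.997),
    ("PET", "can_fly(Birds)", 0.962, 0.995),
    ("PET", "is_bird(Penguins)", 0.618, 0.999),
    ("Smokers and Friends", "Smokes(x) => Cancer(x)", 0.715, 0.978),
    ("Smokers and Friends", "not(Smokes(x)) => not(Cancer(x))", 0.917, 0.991),
]

# Absolute improvement in satisfiability, rounded to the table's precision.
deltas = {query: round(ours - base, 3) for _, query, base, ours in rows}

# Query with the largest absolute improvement over the Baseline.
largest_gain = max(deltas, key=deltas.get)

for query, delta in deltas.items():
    print(f"{query}: {delta:+.3f}")
```

The largest gains are on the two Penguin-specific queries, `is_bird(Penguins)` (+0.381) and `not(can_fly(Penguins))` (+0.369), which are also the queries where the Baseline scores lowest.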

Experiment Figures

Satisfiability curves for four key queries (Normal birds are birds, Penguins are birds, Normal birds fly, Penguins fly) over training stages for Knowledge Completion vs. Task Separation curricula.

Main Takeaways

- Curriculum matters significantly: Task Separation (grouping by predicate) yields the most robust results for non-monotonic tasks like PET, preventing the averaging of contradictory rules.

- Rehearsal is critical: Recalling previously learned facts (e.g., 'Normal birds can fly') allows the model to adjust its beliefs when new contradictory information ('Penguins don't fly') is introduced, so it recovers from temporary dips in satisfiability.

- Even Random curricula outperform the single-stage Baseline on average, suggesting that breaking the problem into any sequence is better than simultaneous learning for these logical contradictions.

- Knowledge Completion (learning facts then rules) is effective for statistical relational tasks (Smokers & Friends) but less robust for pure non-monotonic reasoning compared to Task Separation.
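The curricula compared above differ only in how the knowledge base is split into training stages. The sketch below illustrates that idea on a toy Smokers and Friends knowledge base. The `Axiom` type, the `kind` and `predicate` labels, the facts, and the stage order are all hypothetical; the grouping logic follows only the parenthetical descriptions in the takeaways ("grouping by predicate", "learning facts then rules"), not the paper's actual procedure.

```python
import random
from collections import defaultdict
from typing import NamedTuple


class Axiom(NamedTuple):
    text: str
    kind: str       # "fact" or "rule" (hypothetical label)
    predicate: str  # predicate the axiom is grouped under (hypothetical label)


# Toy knowledge base; the facts are invented, the rules are the two from the table.
KB = [
    Axiom("Smokes(a)", "fact", "Smokes"),
    Axiom("Friends(a, b)", "fact", "Friends"),
    Axiom("Cancer(a)", "fact", "Cancer"),
    Axiom("Smokes(x) => Cancer(x)", "rule", "Cancer"),
    Axiom("not(Smokes(x)) => not(Cancer(x))", "rule", "Cancer"),
]


def baseline(kb):
    """Single stage: everything is learned simultaneously."""
    return [list(kb)]


def knowledge_completion(kb):
    """Two stages: facts first, then rules."""
    facts = [a for a in kb if a.kind == "fact"]
    rules = [a for a in kb if a.kind == "rule"]
    return [facts, rules]


def task_separation(kb):
    """One stage per predicate, in order of first appearance."""
    groups = defaultdict(list)
    for axiom in kb:
        groups[axiom.predicate].append(axiom)
    return list(groups.values())


def random_curriculum(kb, n_stages, seed=0):
    """Shuffle the axioms and deal them into n_stages stages."""
    rng = random.Random(seed)
    shuffled = list(kb)
    rng.shuffle(shuffled)
    return [shuffled[i::n_stages] for i in range(n_stages)]


for name, stages in [
    ("Baseline", baseline(KB)),
    ("Knowledge Completion", knowledge_completion(KB)),
    ("Task Separation", task_separation(KB)),
    ("Random", random_curriculum(KB, n_stages=2)),
]:
    print(name, [[a.text for a in stage] for stage in stages])
```

Each function returns a list of stages, so a training loop could iterate over stages in order regardless of which curriculum produced them. Rehearsal of earlier stages is not modeled here.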