📝 Paper Summary

Code-Enhanced Reasoning

Reasoning-Driven Code Generation

LLM Planning and Self-Correction

This survey systematizes the 'Möbius strip' relationship in LLMs, where code structure grounds abstract reasoning in verifiable execution, while enhanced reasoning capabilities enable autonomous software agents to handle complex coding tasks.

Core Problem

LLM reasoning and code generation are often treated as separate capabilities, ignoring the 'Möbius strip' effect where improvements in one domain reinforce the other.

Why it matters:

- Pure natural language reasoning lacks verification, often leading to calculation errors or logic hallucinations in complex tasks

- Simple code completion models lack the planning and self-correction abilities required for real-world software engineering

- Understanding this bidirectional synergy is crucial for developing end-to-end autonomous agents capable of rigorous deduction and complex system design

Concrete Example:

In mathematical reasoning, a pure-text LLM may hallucinate arithmetic steps. By contrast, a 'Code to Think' approach such as Program of Thoughts (PoT) generates a Python script to perform the calculation and delegates the arithmetic to the interpreter, which ensures precision. Conversely, in 'Think to Code', an agent plans a software architecture in natural language before writing the implementation.
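As a rough illustration of the 'Code to Think' half of this example, the sketch below executes a small program of the kind a model might emit and reads back the result. The word problem, the `GENERATED_PROGRAM` string, and the `run_program` helper are all hypothetical stand-ins chosen for illustration; no real model is called, and this is not the survey's or PoT's actual implementation.

```python
# Hypothetical stand-in for a program an LLM might generate for the question:
# "A store receives 25 crates of 48 apples each; 15% are spoiled.
#  How many good apples remain?"
GENERATED_PROGRAM = """
crates = 25
apples_per_crate = 48
total = crates * apples_per_crate
spoiled = total * 15 // 100
answer = total - spoiled
"""


def run_program(source: str) -> object:
    """Execute generated code and return the value bound to `answer`.

    Assumption: the generated program stores its final result in a
    variable named `answer`. Emptying `__builtins__` only limits what
    the snippet can call; it is NOT a real security sandbox.
    """
    namespace: dict = {"__builtins__": {}}
    exec(source, namespace)
    return namespace["answer"]


result = run_program(GENERATED_PROGRAM)
print(result)
```

The point of the design is that the model only has to express the reasoning steps correctly; the interpreter, not the model, carries out the arithmetic. A production system would run such code in an isolated process with time and resource limits.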

Key Novelty

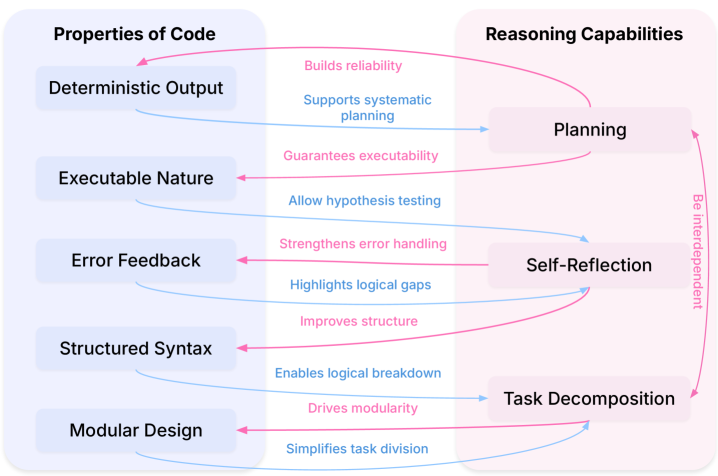

The 'Code-Reasoning Möbius Strip' Taxonomy

- Categorizes the field into two reinforcing flows: 'Code to Think' (using code's strict syntax and execution for general reasoning) and 'Think to Code' (using planning and logical deduction to improve code generation).

- Identifies code as a 'structured medium' that provides verifiable execution paths and logical decomposition for non-coding tasks.

Architecture

Conceptual diagram of the 'Code-Reasoning Möbius Strip'

Evaluation Highlights

- The survey reviews the CodePlan dataset, which contains 2,000,000 standard (prompt, response, code plan) triplets intended to enhance planning capabilities

- Highlights findings that adding code data during pre-training boosts general reasoning, while instruction tuning with code refines adherence to human instructions

Breakthrough Assessment

8/10

A timely and high-utility survey that formalizes the symbiotic relationship between code and reasoning, a critical trend in modern LLM development (e.g., OpenAI o1, DeepSeek-R1).