📝 Paper Summary

LLM-guided Reinforcement Learning

Exploration in Sparse Rewards

The authors propose integrating LLM-generated planning hints directly into RL observations, creating soft constraints that allow agents to learn when to follow or ignore guidance during sparse-reward exploration.

Core Problem

RL agents struggle to discover successful strategies in sparse-reward, long-horizon tasks, while existing LLM-guided methods create rigid dependencies where incorrect LLM advice degrades performance.

Why it matters:

- Traditional exploration (random/epsilon-greedy) is highly inefficient when only specific, long sequences of actions yield rewards

- Prior methods using LLMs as direct policies or reward shapers create 'hard constraints,' making the system brittle if the LLM hallucinates or misunderstands the state

Concrete Example:

In the `PickupLoc` task, an agent must navigate to a specific location to pick up an object. A standard RL agent explores randomly and rarely finds the object. A rigid LLM planner might hallucinate a path through a wall, causing the agent to get stuck. The proposed method lets the agent see the 'wall path' hint but learn, through trial and error, to ignore it.

Key Novelty

LLM Hints as Augmented Observations (Soft Constraints)

- Instead of forcing the agent to follow the LLM (hard constraint), the system appends the LLM's suggested action and an 'availability' flag to the agent's observation vector

- This treats planning guidance like a sensor reading: the RL policy learns how much weight to give this input, so it can follow the hint when it helps and ignore it when the LLM is wrong
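
The augmentation described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function name `augment_observation`, the one-hot encoding of the hint, the flattening of the observation, and the action-space size `NUM_ACTIONS` are all assumptions made for the example.

```python
import numpy as np

# Assumption: a small discrete action space; the value 7 is illustrative only.
NUM_ACTIONS = 7


def augment_observation(obs, hint_action=None, num_actions=NUM_ACTIONS):
    """Append a hint and an availability flag to a flattened observation.

    obs          -- the environment observation (any array-like; flattened here)
    hint_action  -- index of the action suggested by the LLM, or None if no
                    hint is available at this step (hypothetical convention)
    Returns a 1-D float32 vector: [obs..., one-hot hint..., availability flag].
    """
    flat_obs = np.asarray(obs, dtype=np.float32).ravel()

    # One-hot encode the suggested action; all zeros when there is no hint.
    hint = np.zeros(num_actions, dtype=np.float32)
    available = 0.0
    if hint_action is not None:
        hint[hint_action] = 1.0
        available = 1.0

    flag = np.array([available], dtype=np.float32)
    return np.concatenate([flat_obs, hint, flag])


# Usage: a 2-feature observation with a hint suggesting action 2 of 4.
augmented = augment_observation([0.5, 0.25], hint_action=2, num_actions=4)
no_hint = augment_observation([0.5, 0.25], hint_action=None, num_actions=4)
```

Because the hint enters only as extra input features, nothing forces the policy to act on it; whether the downstream network follows or ignores those features is left to ordinary RL training.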

Architecture

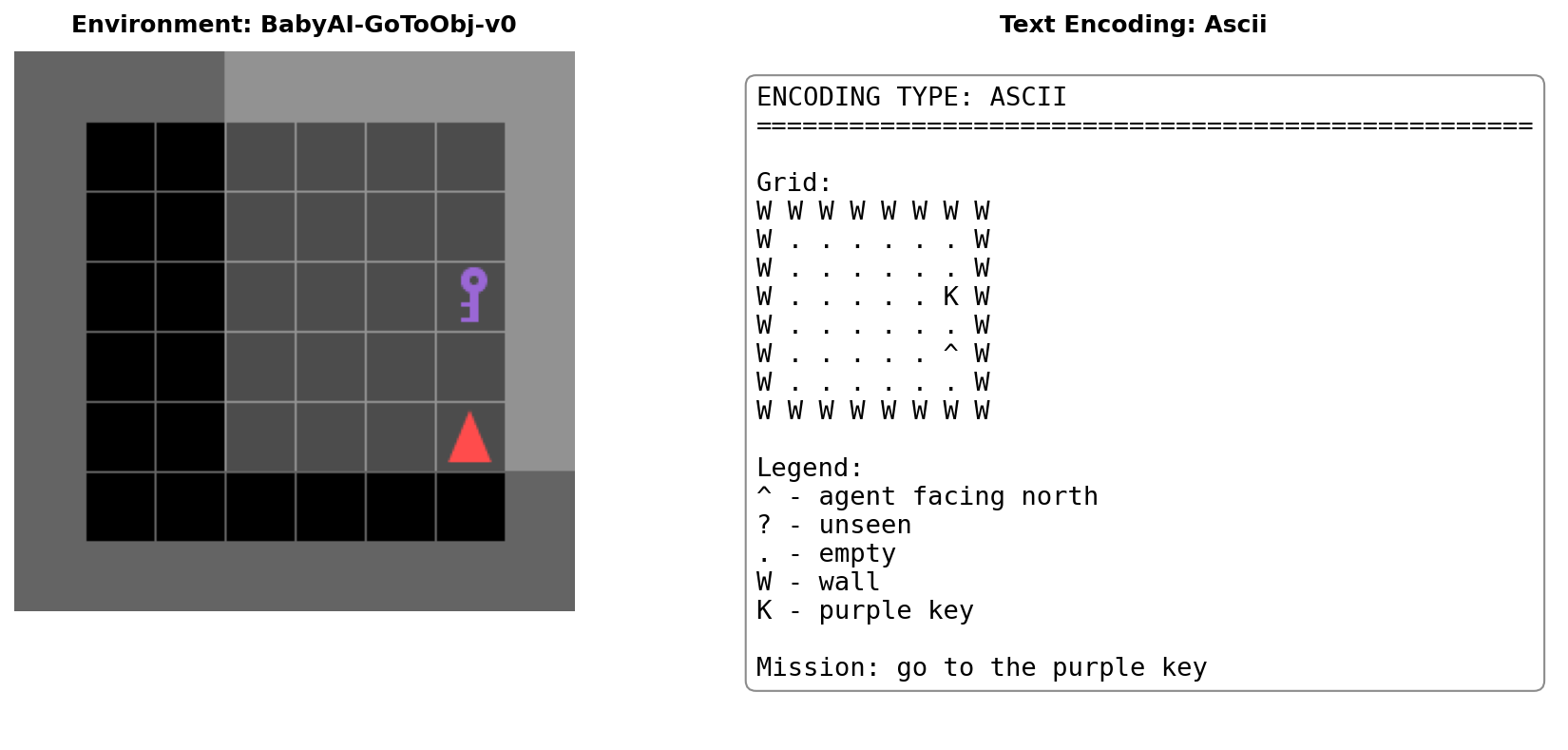

Conceptual diagram of the Hint Generation and Observation Augmentation pipeline.

Evaluation Highlights

- 71% relative improvement in success rate over PPO baseline on the hardest environment (PickupLoc), rising from 17.2% to 29.5%

- 9x faster learning on GoToObj task: LLM-guided agents reach 50% success in 120K frames versus 1.08M frames for baseline

- Consistent performance gains with hint frequency k=5 over k=10, suggesting that more frequent guidance aids early exploration
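
The headline figures above can be checked from the reported numbers. The short sketch below only recomputes the ratios; the helper names `relative_improvement` and `speedup` are illustrative.

```python
def relative_improvement(baseline, improved):
    """Relative gain of `improved` over `baseline`, as a fraction."""
    return (improved - baseline) / baseline


def speedup(baseline_frames, guided_frames):
    """How many times fewer frames the guided agent needs."""
    return baseline_frames / guided_frames


# PickupLoc success rate: 17.2% (PPO baseline) vs 29.5% (LLM-guided).
rel = relative_improvement(17.2, 29.5)

# GoToObj frames to reach 50% success: 1.08M (baseline) vs 120K (LLM-guided).
spd = speedup(1_080_000, 120_000)

print(f"relative improvement: {rel:.1%}")  # 71.5%
print(f"speedup: {spd:.0f}x")              # 9x
```

In absolute terms the PickupLoc gain is 12.3 percentage points, so the 71% figure is relative to a low baseline.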

Breakthrough Assessment

7/10

A clever, elegant architectural simplification (hints as observations) that addresses the 'LLM reliability' problem in RL. Strong results on BabyAI, though evaluation is limited to gridworlds so far.